From Pixels to Predictions: How AI and Deep Learning Are Revolutionizing Nanocarrier Quantification in Drug Delivery

Abstract

This article provides a comprehensive guide for researchers on implementing AI-driven deep learning pipelines for nanocarrier quantification. We explore the foundational concepts, detailing why manual analysis fails and how convolutional neural networks (CNNs) offer a superior solution. A step-by-step methodological walkthrough covers dataset creation, model architecture (e.g., U-Net, Mask R-CNN), and training protocols. Critical troubleshooting sections address common pitfalls like limited data, overfitting, and class imbalance. Finally, we discuss rigorous validation metrics and comparative analyses against traditional methods, highlighting the transformative impact on reproducibility and throughput in nanomedicine research and preclinical development.

The Quantification Challenge: Why AI is the Future of Nanocarrier Analysis

Within the broader thesis on developing an AI deep learning pipeline for nanocarrier quantification, this application note details the critical limitations of traditional manual microscopy analysis. As nanomedicine advances, the accurate quantification of nanoparticles (NPs) in biological samples—essential for assessing drug loading, targeting efficiency, and biodistribution—is hampered by subjective, low-throughput manual methods. This document outlines specific bottlenecks, provides protocols for comparative validation experiments, and presents data that underscore the necessity for automated, AI-driven solutions.

Comparative Analysis of Manual vs. Automated Quantification Bottlenecks

The table below summarizes key performance metrics, highlighting the inefficiencies inherent in traditional manual analysis.

Table 1: Quantitative Comparison of Manual vs. Idealized Automated Analysis for Fluorescent Nanocarrier Quantification

| Performance Metric | Traditional Manual Quantification | Target Automated/AI Pipeline |
|---|---|---|
| Analysis Time per Image | 5-15 minutes | < 30 seconds |
| Inter-Analyst Variability (Coefficient of Variation) | 15%-25% | < 5% |
| Throughput (Images per Day) | 30-80 | 500+ |
| Object Detection Sensitivity | Prone to miss dim or clustered particles | High, consistent across intensity ranges |
| Quantitative Parameters | Typically limited to count and mean size | Multi-parametric (count, size, shape, intensity, spatial distribution) |
| Subjectivity in Thresholding | High (influenced by user bias) | Standardized, reproducible algorithms |
| Fatigue-Induced Error Rate | Increases significantly after 2 hours | Negligible |

Experimental Protocol: Validating Manual Quantification Limitations

This protocol is designed to empirically demonstrate the bottlenecks listed in Table 1.

Protocol 2.1: Inter-Analyst Variability Test

Objective: To quantify the subjectivity and variability in manual thresholding and counting of fluorescent nanocarriers.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Sample Preparation: Seed cells in a 24-well plate. Treat with fluorescently labelled lipid nanoparticles (LNPs) for 4 hours. Fix with 4% PFA for 15 minutes and mount with DAPI-containing medium.

- Image Acquisition: Using a confocal microscope, acquire 10 high-resolution (1024x1024) z-stack images (3 slices) from random fields per well for 3 independent replicates. Use consistent settings (e.g., 60x oil objective, laser power, gain).

- Blinded Manual Analysis: Provide the 30 image sets to 3 trained analysts.

- Each analyst processes images using FIJI/ImageJ.

- Apply a Gaussian Blur (σ=2) to reduce noise.

- Manually set a threshold for the fluorescent channel to segment particles. Critically, each analyst does this independently based on their judgment.

- Use the "Analyze Particles" function to count particles (size: 0.1-5 µm², circularity: 0.5-1.0).

- Record counts and the binary mask used.

- Data Compilation: Compile counts from all analysts. Calculate mean, standard deviation, and coefficient of variation (CV) for each image.
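The data-compilation step can be scripted with the Python standard library. This is a minimal sketch, assuming the counts are stored per image under an image ID, with one value per analyst; the IDs and counts in the usage lines are illustrative, not measured data. `stdev` gives the sample standard deviation (n - 1 denominator).

```python
from statistics import mean, stdev


def inter_analyst_cv(counts_by_image):
    """Return {image_id: (mean, sd, cv_percent)} from per-analyst particle counts.

    counts_by_image maps an image ID to a list of counts, one per analyst.
    stdev() is the sample standard deviation (n - 1 denominator).
    """
    summary = {}
    for image_id, counts in counts_by_image.items():
        if len(counts) < 2:
            raise ValueError(f"{image_id}: need counts from at least two analysts")
        m = mean(counts)
        sd = stdev(counts)
        cv = 100.0 * sd / m if m else float("nan")  # CV is undefined for a zero mean
        summary[image_id] = (m, sd, cv)
    return summary


# Illustrative counts from three analysts (not experimental data).
example = {"img_01": [120, 98, 140], "img_02": [75, 80, 72]}
for image_id, (m, sd, cv) in inter_analyst_cv(example).items():
    print(f"{image_id}: mean={m:.1f}, SD={sd:.1f}, CV={cv:.1f}%")
```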

Protocol 2.2: Throughput and Fatigue Assessment

Objective: To measure the decline in accuracy and consistency over a continuous analysis period.

Procedure:

- Using one analyst from Protocol 2.1, provide a set of 100 pre-acquired images.

- The analyst processes images in a single session, with a 5-minute break every hour.

- Record the time taken per image and the particle count result.

- Ground Truth Generation: Use a consensus mask from 3 analysts or an advanced semi-automated macro as a reference.

- Calculate the error rate (deviation from ground truth) and plot it against elapsed session time and cumulative image number.
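The fatigue analysis can be reduced to a per-image percentage error and a least-squares slope of that error against processing order, or against elapsed time when timestamps are recorded. This sketch assumes both count lists are in processing order and that ground-truth counts are positive; a positive slope is consistent with fatigue-related drift but does not by itself prove it.

```python
def error_trend(predicted, ground_truth, x=None):
    """Per-image percentage error and its least-squares slope against x.

    predicted, ground_truth: particle counts in processing order.
    x: optional elapsed time per image (e.g., minutes); defaults to image index.
    Returns (errors_percent, slope) with slope in percentage points per unit of x.
    """
    if len(predicted) != len(ground_truth) or len(predicted) < 2:
        raise ValueError("need two equal-length sequences of at least two images")
    if any(g <= 0 for g in ground_truth):
        raise ValueError("ground-truth counts must be positive")
    errors = [abs(p - g) / g * 100.0 for p, g in zip(predicted, ground_truth)]
    xs = list(range(len(errors))) if x is None else list(x)
    if len(xs) != len(errors):
        raise ValueError("x must have one value per image")
    x_mean = sum(xs) / len(xs)
    y_mean = sum(errors) / len(errors)
    # Ordinary least-squares slope: cov(x, error) / var(x).
    sxy = sum((xi - x_mean) * (e - y_mean) for xi, e in zip(xs, errors))
    sxx = sum((xi - x_mean) ** 2 for xi in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    return errors, sxy / sxx
```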

Visualizing the Workflow and Limitations

Diagram: Manual Analysis Workflow and Key Bottlenecks

Diagram: From Manual Limitations to AI Solutions in the Thesis

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Materials for Manual Quantification Experiments

| Item | Function & Relevance |
|---|---|
| Fluorescently Labelled Lipid Nanoparticles (e.g., DiO-LNPs) | Model nanocarrier system for cellular uptake studies. Fluorescence enables detection by microscopy. |
| Cell Line (e.g., HeLa, HepG2) | Biological model for in vitro assessment of nanocarrier uptake and localization. |
| Confocal Microscope with 60x/100x Oil Objective | Essential for high-resolution imaging of sub-micron nanoparticles within cells. |
| Image Analysis Software (FIJI/ImageJ) | Open-source platform for manual image processing, thresholding, and particle analysis. |
| Cell Culture Plate (24-well/96-well, glass-bottom) | Vessel for cell growth and imaging; a glass bottom is optimal for high-resolution microscopy. |
| Paraformaldehyde (4% PFA) | Fixative for preserving cellular architecture and nanoparticle position post-uptake. |
| Mounting Medium with DAPI | Preserves the sample and stains nuclei as a localization reference in images. |
| Standardized Fluorescent Beads (e.g., 0.5 µm) | Critical positive control for validating microscope resolution and quantification settings. |

In the development of AI-driven deep learning pipelines for nanocarrier characterization, precise definition of the target quantifiable parameters is foundational. This document outlines the core physical attributes—size, distribution, concentration, and morphology—that are essential for robust algorithmic training and analysis in therapeutic nanocarrier research.

Core Quantifiable Parameters

The following table summarizes the key parameters, their significance, and standard measurement techniques.

Table 1: Core Quantification Parameters in Nanocarrier Analysis

| Parameter | Definition & Significance | Primary Measurement Techniques |
|---|---|---|
| Size (Hydrodynamic Diameter) | The effective diameter of a particle moving in a fluid, critical for biodistribution, circulation time, and cellular uptake. | Dynamic Light Scattering (DLS), Nanoparticle Tracking Analysis (NTA) |
| Size Distribution (Polydispersity Index, PDI) | A measure of the heterogeneity of particle sizes in a sample. A PDI below about 0.2 is commonly considered acceptable for lipid-based carriers, and values below about 0.1 indicate a nearly monodisperse population. | DLS, NTA, Electron Microscopy + Image Analysis |
| Concentration | The number of particles per unit volume (particles/mL). Essential for dosing accuracy and in vitro/in vivo correlation. | NTA, Tunable Resistive Pulse Sensing (TRPS), Flow Cytometry |
| Morphology | The shape and structural features (e.g., spherical, rod-like, lamellar) influencing biological interactions and drug loading. | Transmission Electron Microscopy (TEM), Scanning Electron Microscopy (SEM), Atomic Force Microscopy (AFM) |

Detailed Experimental Protocols

Protocol 1: Hydrodynamic Size & PDI Measurement via Dynamic Light Scattering (DLS)

Objective: To determine the intensity-weighted mean hydrodynamic diameter (Z-Average) and polydispersity index (PDI) of a liposomal nanocarrier suspension.

Materials:

- Nanocarrier suspension (e.g., PEGylated liposomes)

- DLS instrument (e.g., Malvern Zetasizer Nano ZS)

- Disposable microcuvettes (low volume, polystyrene)

- 0.1 µm filtered phosphate-buffered saline (PBS) or appropriate buffer

- Micropipettes and tips

Procedure:

- Sample Preparation: Dilute the nanocarrier stock solution in filtered PBS to achieve a recommended concentration within the instrument's sensitivity range (typically 0.1-1 mg/mL for liposomes). Avoid introducing bubbles.

- Instrument Setup: Power on the DLS instrument and software. Allow the laser to warm up for 15-30 minutes.

- Measurement: Load 50 µL of the diluted sample into a clean microcuvette. Place the cuvette in the thermostatted sample holder (set to 25°C). Set the measurement parameters: equilibration time (120 s), number of runs (3-5), run duration (automatic).

- Data Acquisition: Initiate the measurement. The software will report the Z-Average size (in nm) and the PDI.

- Quality Control: Ensure the count rate is stable and within the instrument's optimal range. Examine the correlation function and size distribution plot for artifacts.

- Analysis: Report the Z-Average and PDI as mean ± standard deviation from at least three independent sample preparations.
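The Z-Average reported in the data-acquisition step is a hydrodynamic diameter: the instrument fits the correlation function to obtain a translational diffusion coefficient D and converts it with the Stokes-Einstein relation, d_H = k_B T / (3πηD). This is why the thermostatted temperature and the dispersant viscosity must be set correctly. The sketch below shows only that conversion; the diffusion coefficient in the usage line is illustrative, and the cumulants fit that yields D and the PDI is not reproduced.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # exact SI value


def hydrodynamic_diameter_nm(diffusion_m2_per_s, temperature_c=25.0,
                             viscosity_pa_s=0.8872e-3):
    """Stokes-Einstein: d_H = k_B * T / (3 * pi * eta * D), returned in nm.

    The default viscosity is that of water at 25 C (0.8872 mPa*s); use the
    value for the actual dispersant and temperature of the measurement.
    """
    if diffusion_m2_per_s <= 0:
        raise ValueError("diffusion coefficient must be positive")
    temperature_k = temperature_c + 273.15
    d_m = BOLTZMANN_J_PER_K * temperature_k / (
        3.0 * math.pi * viscosity_pa_s * diffusion_m2_per_s)
    return d_m * 1e9


# Illustrative value: D = 4.29e-12 m^2/s in water at 25 C gives roughly 115 nm.
print(f"{hydrodynamic_diameter_nm(4.29e-12):.1f} nm")
```

Because d_H scales with T/η, a dispersant entered with the wrong viscosity shifts every reported size by the same factor.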

Protocol 2: Concentration & Size Distribution via Nanoparticle Tracking Analysis (NTA)

Objective: To determine the particle number concentration (particles/mL) and visualize the size distribution profile of extracellular vesicle (EV) samples.

Materials:

- Purified extracellular vesicle sample

- NTA system (e.g., Malvern NanoSight NS300)

- 1 mL sterile syringes

- 0.1 µm filtered PBS

- Laboratory wipes

Procedure:

- System Priming: Flush the instrument's fluidic system with 0.1 µm filtered PBS using a syringe to remove any contaminants.

- Sample Dilution: Serially dilute the EV sample in filtered PBS to achieve an optimal concentration for tracking (typically 10⁷-10⁹ particles/mL). The ideal concentration yields 20-100 particles per frame.

- Loading and Focusing: Inject the diluted sample into the sample chamber using a syringe. Use the software's live view to bring the particles into focus, and adjust the camera level so that particles are clearly visible without saturation.

- Video Capture: Capture five videos of 60 seconds each from different, non-overlapping positions within the sample chamber.

- Processing and Analysis: Process all videos using the same detection threshold and analysis settings (e.g., detection threshold: 5, blur size: auto, max jump distance: auto). The software will generate a mean and mode size (nm) and a concentration (particles/mL) for each video.

- Reporting: Multiply each video's concentration by the dilution factor to obtain the stock concentration, then report the mean concentration and size distribution (mean ± SD) across all captured videos. Export the size distribution histogram for AI pipeline input.
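NTA reports the concentration of the diluted suspension in the chamber, so the stock concentration is each per-video value multiplied by the dilution factor before averaging. A minimal sketch, assuming per-video concentrations have been exported from the NTA software; the range check uses the 10⁷-10⁹ particles/mL window from the dilution step, and the numbers in the usage line are illustrative.

```python
from statistics import mean, stdev

OPTIMAL_RANGE_PER_ML = (1e7, 1e9)  # tracking window quoted in the dilution step


def stock_concentration(video_concs_per_ml, dilution_factor):
    """Back-calculate stock concentration (particles/mL) from per-video NTA readings.

    video_concs_per_ml: concentrations reported for each video of the diluted sample.
    dilution_factor: e.g. 1000 for a 1:1000 dilution in filtered PBS.
    Returns (mean, sd, in_range); in_range flags each video whose diluted
    concentration lies inside OPTIMAL_RANGE_PER_ML.
    """
    if dilution_factor < 1 or len(video_concs_per_ml) < 2:
        raise ValueError("need a dilution factor >= 1 and at least two videos")
    low, high = OPTIMAL_RANGE_PER_ML
    in_range = [low <= c <= high for c in video_concs_per_ml]
    stock = [c * dilution_factor for c in video_concs_per_ml]
    return mean(stock), stdev(stock), in_range


# Five illustrative videos of a 1:1000 dilution.
m, sd, ok = stock_concentration([2.0e8, 2.2e8, 1.8e8, 2.1e8, 1.9e8], 1000)
print(f"stock: {m:.2e} +/- {sd:.1e} particles/mL; all videos in range: {all(ok)}")
```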

Protocol 3: Morphological Analysis via Transmission Electron Microscopy (TEM)

Objective: To visualize and quantify the morphology and core-shell structure of polymeric nanoparticles (e.g., PLGA NPs).

Materials:

- PLGA nanoparticle suspension

- Carbon-coated copper TEM grids (200 mesh)

- 2% Uranyl acetate stain or 1% Phosphotungstic acid (PTA)

- Parafilm

- Filter paper

- Glow discharge system (optional)

- TEM instrument

Research Reagent Solutions Toolkit

| Reagent/Material | Function in Nanocarrier Quantification |
|---|---|
| Filtered PBS (0.1 µm) | Provides a clean, isotonic suspension medium for dilution and measurement, preventing contamination from dust/aggregates. |
| Uranyl Acetate (2% aqueous) | A common negative stain for TEM; enhances contrast by embedding around particles, revealing surface topography and shape. |
| Phosphotungstic Acid (PTA) | Alternative negative stain for TEM; used particularly for lipid-based systems to improve contrast without disrupting structure. |
| Size Calibration Standards (e.g., 100 nm latex beads) | Essential for validating and calibrating DLS, NTA, and TRPS instruments to ensure measurement accuracy. |
| Glow-Discharged TEM Grids | Treatment renders carbon grids hydrophilic, ensuring even sample spread and adhesion of nanoparticles for high-quality TEM imaging. |

Procedure:

- Grid Preparation: (Optional but recommended) Glow-discharge carbon-coated grids for 30-60 seconds to make them hydrophilic.

- Sample Staining (Negative Stain): a. Place a 10 µL droplet of the nanoparticle suspension on a piece of Parafilm. b. Float a TEM grid (carbon side down) on the droplet for 5-10 minutes. c. Blot the grid edge on filter paper to remove excess liquid. d. Immediately float the grid on a 10 µL droplet of uranyl acetate stain for 1-2 minutes. e. Blot excess stain and allow the grid to air-dry completely in a covered petri dish.

- TEM Imaging: Insert the dried grid into the TEM. Using an acceleration voltage of 80-100 kV, image the nanoparticles at various magnifications (e.g., 20,000x to 100,000x). Capture multiple images from different grid squares.

- Image Analysis: Use image analysis software (e.g., ImageJ, proprietary AI tools) to measure particle dimensions (diameter, core diameter), circularity, and assess morphological homogeneity from the TEM micrographs.
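Measurements exported from ImageJ are in pixels until a scale is applied. A minimal sketch, assuming area and perimeter have already been measured per particle and the nm-per-pixel scale is read from the micrograph metadata or scale bar. It reports the equivalent circular diameter and the circularity 4πA/P² (the same definition ImageJ uses; 1.0 for a perfect circle, and independent of scale). Using the coefficient of variation of diameters as the homogeneity index is an assumption.

```python
import math
from statistics import mean, stdev


def tem_morphometrics(area_px2, perimeter_px, nm_per_px):
    """Equivalent circular diameter (nm) and circularity 4*pi*A/P^2 for one particle."""
    area_nm2 = area_px2 * nm_per_px ** 2
    perimeter_nm = perimeter_px * nm_per_px
    ecd_nm = 2.0 * math.sqrt(area_nm2 / math.pi)  # diameter of a circle of equal area
    circularity = 4.0 * math.pi * area_nm2 / perimeter_nm ** 2
    return ecd_nm, circularity


def size_homogeneity(particles, nm_per_px):
    """Mean diameter (nm) and its coefficient of variation (%) for (area, perimeter) pairs."""
    diameters = [tem_morphometrics(a, p, nm_per_px)[0] for a, p in particles]
    m = mean(diameters)
    return m, 100.0 * stdev(diameters) / m
```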

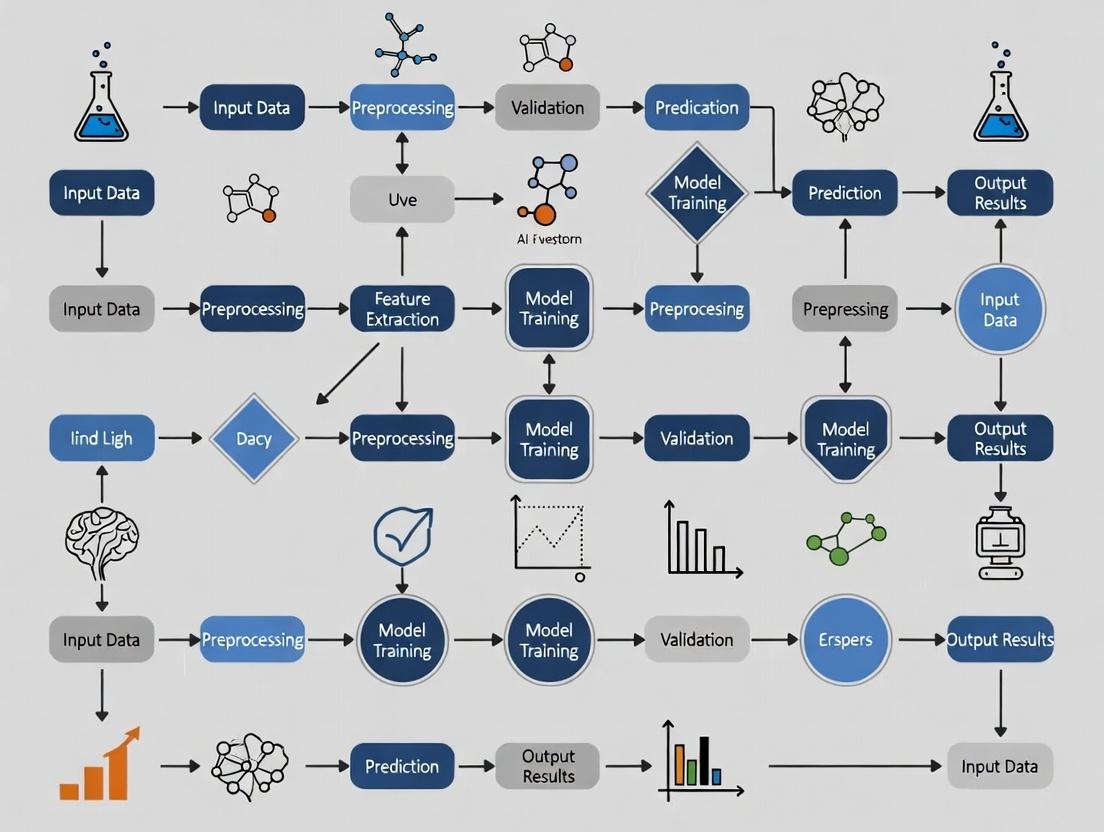

Visualizing the AI-Enabled Quantification Pipeline

Diagram: AI Pipeline for Nanocarrier Quantification

Diagram: Logical Flow from Parameter Definition to Insight

Core Concepts in the Context of Nanocarrier Quantification

Convolutional Neural Networks (CNNs) are a specialized class of deep neural networks designed for processing structured grid data, such as images. Their architecture is inspired by the biological visual cortex and is exceptionally effective for analyzing microscopy images central to AI-driven nanocarrier quantification research. This analysis is critical for evaluating drug delivery system efficacy, biodistribution, and targeting efficiency in therapeutic development.

Key Architectural Components:

- Convolutional Layers: Apply learnable filters (kernels) to extract hierarchical features (edges, textures, shapes) from input images. This local connectivity and weight sharing drastically reduce parameters compared to fully connected networks.

- Pooling Layers (e.g., Max Pooling): Downsample feature maps, reducing spatial dimensions and computational cost while providing a degree of local translation invariance.

- Activation Functions (ReLU): Introduce non-linearity, allowing the network to learn complex patterns. The Rectified Linear Unit, f(x) = max(0, x), is the standard choice.

- Fully Connected Layers: Located at the network's end, these layers integrate extracted features for final classification or regression tasks (e.g., counting nanoparticles, classifying cellular uptake).
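To make these components concrete, here is a forward pass written in plain NumPy: one convolutional layer, ReLU, 2×2 max pooling, and a fully connected output. It is an illustrative sketch only (random weights, no training, a single regression output standing in for a particle count); real models would use PyTorch or TensorFlow layers. The two parameter-count lines quantify the weight-sharing argument by comparing a 3×3 convolution with a fully connected layer producing the same 30×30×8 output from a 32×32 input.

```python
import numpy as np


def conv2d(image, kernels, bias):
    """Valid 2D cross-correlation (what DL frameworks call 'convolution').

    image: (H, W, C_in); kernels: (k, k, C_in, C_out); bias: (C_out,).
    The same kernel weights are reused at every position (weight sharing).
    """
    k = kernels.shape[0]
    h_out, w_out = image.shape[0] - k + 1, image.shape[1] - k + 1
    out = np.empty((h_out, w_out, kernels.shape[3]))
    for i in range(h_out):
        for j in range(w_out):
            patch = image[i:i + k, j:j + k, :]  # (k, k, C_in) local receptive field
            out[i, j, :] = np.tensordot(patch, kernels, axes=([0, 1, 2], [0, 1, 2])) + bias
    return out


def relu(x):
    return np.maximum(x, 0.0)


def max_pool2x2(x):
    """2x2 max pooling with stride 2; a trailing odd row/column is dropped."""
    h, w, c = x.shape
    x = x[: h - h % 2, : w - w % 2, :]
    return x.reshape(h // 2, 2, w // 2, 2, c).max(axis=(1, 3))


def dense(x, weights, bias):
    """Fully connected layer applied to the flattened feature map."""
    return x.reshape(-1) @ weights + bias


def conv_params(k, c_in, c_out):
    return k * k * c_in * c_out + c_out


def dense_params(n_in, n_out):
    return n_in * n_out + n_out


rng = np.random.default_rng(0)
img = rng.random((32, 32, 1))                       # single-channel image patch
w1 = rng.normal(0.0, 0.1, (3, 3, 1, 8))             # 8 learnable 3x3 filters
feat = max_pool2x2(relu(conv2d(img, w1, np.zeros(8))))  # (30,30,8) -> (15,15,8)
w2 = rng.normal(0.0, 0.1, (feat.size, 1))
count_estimate = dense(feat, w2, np.zeros(1))       # one regression output

print("3x3 conv, 1 -> 8 channels:", conv_params(3, 1, 8), "parameters")
print("dense layer, same 30x30x8 output:", dense_params(32 * 32, 30 * 30 * 8), "parameters")
```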

Quantitative Performance Data of CNN Architectures

The following table summarizes key performance metrics for modern CNN architectures relevant to biomedical image analysis, based on benchmarks like ImageNet. Accuracy and parameter efficiency are crucial for deploying models in research settings with limited computational resources.

Table 1: Performance Comparison of CNN Architectures for Image Analysis Tasks

| Architecture | Top-1 Accuracy (ImageNet) | Number of Parameters | Key Innovation | Suitability for Microscopy |
|---|---|---|---|---|
| ResNet-50 | 76.0% | ~25.6 M | Residual connections for training very deep networks | High: Excellent for feature extraction from complex bio-images. |
| VGG-16 | 71.3% | ~138 M | Simple, deep stacks of 3x3 convolutions | Moderate: Good performance but parameter-heavy. |
| EfficientNet-B0 | 77.1% | ~5.3 M | Compound scaling of depth, width, and resolution | Very High: Strong efficiency/accuracy trade-off. |
| U-Net | N/A (Segmentation) | ~31 M | Encoder-decoder with skip connections for segmentation | Essential: Benchmark for semantic segmentation of nanoparticles/cells. |
| DenseNet-121 | 75.0% | ~8.0 M | Dense connectivity between layers, feature reuse | High: Parameter-efficient, good for limited data. |

Note: Accuracy values are indicative. Performance on specific nanocarrier datasets depends on training data quality and quantity.

Experimental Protocol: CNN-Based Quantification of Cellular Nanoparticle Uptake

Aim: To automatically quantify the intracellular uptake of fluorescently labeled nanocarriers from confocal microscopy images.

Materials: Confocal microscopy images (Z-stacks or maximum projections) of treated cells. Ground truth data (manually annotated particle counts or segmentation masks).

Protocol:

Image Preprocessing:

- Channel Alignment & Extraction: Isolate the fluorescent channel corresponding to the nanocarrier signal.

- Background Subtraction: Apply a rolling-ball or top-hat filter to reduce uneven illumination.

- Normalization: Scale pixel intensities to a standard range (e.g., 0-1).

- Patch Generation: For large images, tile into smaller patches (e.g., 256x256 px) compatible with CNN input.
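The normalization and patch-generation steps can be sketched in NumPy as follows, assuming a single-channel 2D image and per-image min-max scaling. The image is zero-padded so its size need not be a multiple of the patch size; reflect padding (`np.pad(img, ..., mode="reflect")`) is a common alternative that avoids artificial dark borders. `untile` reverses the operation so predicted tiles can be stitched back to full size.

```python
import numpy as np


def normalize_01(img, eps=1e-12):
    """Min-max scale one image to [0, 1]."""
    img = img.astype(np.float32)
    lo, hi = float(img.min()), float(img.max())
    return (img - lo) / max(hi - lo, eps)


def tile(img, patch=256):
    """Split a 2D image into non-overlapping patch x patch tiles.

    The image is zero-padded at the bottom/right so every pixel lands in a tile.
    Returns (tiles, (n_rows, n_cols)); tiles are in row-major order.
    """
    h, w = img.shape
    n_rows, n_cols = -(-h // patch), -(-w // patch)  # ceiling division
    padded = np.zeros((n_rows * patch, n_cols * patch), dtype=img.dtype)
    padded[:h, :w] = img
    tiles = (padded.reshape(n_rows, patch, n_cols, patch)
                   .swapaxes(1, 2)
                   .reshape(-1, patch, patch))
    return tiles, (n_rows, n_cols)


def untile(tiles, grid, shape):
    """Reassemble tiles produced by tile() and crop away the padding."""
    n_rows, n_cols = grid
    patch = tiles.shape[1]
    full = (tiles.reshape(n_rows, n_cols, patch, patch)
                 .swapaxes(1, 2)
                 .reshape(n_rows * patch, n_cols * patch))
    return full[:shape[0], :shape[1]]
```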

Data Annotation & Augmentation:

- Annotation: Using tools like LabelBox or Fiji, create binary masks outlining individual nanoparticles or regions of uptake.

- Augmentation: Apply real-time transformations (rotation, flipping, minor intensity shifts) during training to improve model generalization.
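Because augmentation must keep images and annotations aligned, every geometric transform has to be applied identically to both. A minimal NumPy sketch, assuming single-channel images scaled to [0, 1]. It restricts rotation to multiples of 90 degrees (a simplification: arbitrary angles need interpolation, nearest-neighbour for masks) and applies the intensity shift to the image only; the ±10% default is an assumption.

```python
import numpy as np


def augment_pair(image, mask, rng, max_intensity_shift=0.1):
    """Apply the same random geometric transform to an image and its mask.

    Geometric: rotation by a random multiple of 90 degrees, then optional
    horizontal and vertical flips (label-preserving, no interpolation).
    Intensity: random scaling of the image only, within +/- max_intensity_shift.
    """
    k = int(rng.integers(0, 4))
    image, mask = np.rot90(image, k), np.rot90(mask, k)
    if rng.random() < 0.5:
        image, mask = np.fliplr(image), np.fliplr(mask)
    if rng.random() < 0.5:
        image, mask = np.flipud(image), np.flipud(mask)
    scale = 1.0 + rng.uniform(-max_intensity_shift, max_intensity_shift)
    image = np.clip(image * scale, 0.0, 1.0)
    return np.ascontiguousarray(image), np.ascontiguousarray(mask)
```

Calling this inside the data loader with a seeded `np.random.default_rng(...)` gives a fresh transform every epoch while keeping runs reproducible.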

Model Training (U-Net Architecture for Segmentation):

- Framework: Utilize PyTorch or TensorFlow/Keras.

- Loss Function: Use a combined loss (e.g., Dice Loss + Binary Cross-Entropy) to handle class imbalance (few foreground pixels).

- Optimizer: Adam optimizer with an initial learning rate of 1e-4.

- Training: Train for 50-100 epochs, monitoring validation loss. Implement early stopping to prevent overfitting.
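The loss and stopping rule above can be written out explicitly. This NumPy version is for illustration; in a real training loop the same expressions would use framework tensors so gradients flow, and the optimizer setting corresponds to `torch.optim.Adam(model.parameters(), lr=1e-4)` in PyTorch. The equal weighting of the Dice and BCE terms and the `patience` value are assumptions, not prescribed by the protocol.

```python
import numpy as np


def dice_bce_loss(prob, target, eps=1e-7, smooth=1.0):
    """Combined soft-Dice + binary cross-entropy for a foreground probability map.

    prob: predicted foreground probabilities in [0, 1]; target: binary mask.
    BCE gives dense per-pixel gradients; the Dice term rewards overlap and is
    insensitive to the large number of background pixels.
    """
    p = np.clip(np.asarray(prob, dtype=np.float64), eps, 1.0 - eps)
    t = np.asarray(target, dtype=np.float64)
    bce = -np.mean(t * np.log(p) + (1.0 - t) * np.log(1.0 - p))
    dice = (2.0 * np.sum(p * t) + smooth) / (np.sum(p) + np.sum(t) + smooth)
    return bce + (1.0 - dice)


class EarlyStopping:
    """Stop when validation loss has not improved by min_delta for `patience` epochs."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience, self.min_delta = patience, min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.bad_epochs = val_loss, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```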

Inference & Post-processing:

- Prediction: Apply trained model to new images to generate probability maps.

- Thresholding: Apply a probability threshold (e.g., 0.5, or a value tuned on the validation set) to create a binary segmentation.

- Connected Component Analysis: Use `skimage.measure.label` to identify and count individual segmented objects (nanocarriers).

- Size Filtering: Filter objects by area to exclude debris or noise.
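The post-processing steps map directly onto scikit-image. A minimal sketch, assuming a 2D probability map from the model; the default area limits are placeholders to be derived from the expected carrier size and the pixel size. Touching particles that merge into one connected component are counted once here, so a splitting step (e.g., watershed) would be needed where clustering is common.

```python
import numpy as np
from skimage.measure import label, regionprops


def count_nanocarriers(prob_map, threshold=0.5, min_area_px=4, max_area_px=500):
    """Threshold a probability map, label connected components, filter by area.

    Returns (count, areas) for the objects whose pixel area lies within
    [min_area_px, max_area_px].
    """
    binary = np.asarray(prob_map) >= threshold
    labels = label(binary, connectivity=2)  # 8-connectivity in 2D
    areas = [r.area for r in regionprops(labels)
             if min_area_px <= r.area <= max_area_px]
    return len(areas), areas
```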

Validation:

- Compare automated counts with manual counts from a blinded expert.

- Metrics: Calculate Pearson correlation coefficient, mean absolute error, and Dice similarity coefficient.
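The three validation metrics can be computed with NumPy as sketched below. Pearson r measures association rather than agreement (a model that consistently reports twice the true count still scores r = 1), which is why it is paired with the mean absolute error. Returning Dice = 1.0 for two empty masks is an assumed convention.

```python
import numpy as np


def count_agreement(auto_counts, manual_counts):
    """Pearson r and mean absolute error between automated and manual counts."""
    a = np.asarray(auto_counts, dtype=float)
    m = np.asarray(manual_counts, dtype=float)
    if a.shape != m.shape or a.size < 2:
        raise ValueError("need two equal-length count sequences (n >= 2)")
    r = float(np.corrcoef(a, m)[0, 1])
    mae = float(np.mean(np.abs(a - m)))
    return r, mae


def dice_coefficient(pred_mask, true_mask):
    """Dice = 2|A and B| / (|A| + |B|) for binary masks; 1.0 if both are empty."""
    p = np.asarray(pred_mask, dtype=bool)
    t = np.asarray(true_mask, dtype=bool)
    denom = p.sum() + t.sum()
    if denom == 0:
        return 1.0
    return float(2.0 * np.logical_and(p, t).sum() / denom)
```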

Visualization of Workflow and Architecture

Diagram 1: CNN pipeline for nanocarrier image analysis

Diagram 2: U-Net for nanoparticle segmentation

The Scientist's Toolkit: Essential Reagents & Software

Table 2: Key Research Reagent Solutions & Computational Tools

| Category | Item / Software | Function / Purpose in CNN Workflow |
|---|---|---|
| Wet-Lab Reagents | Fluorescently Labeled Nanocarriers (e.g., Cy5, FITC) | Enable visualization and tracking of nanoparticles in cellular and tissue samples via microscopy. |
| | Cell Permeability/Viability Assay Kits (e.g., MTT, LDH) | Assess biological impact of nanocarrier uptake, correlating quantitative imaging data with functional readouts. |
| | Mounting Media with DAPI | Provides nuclear counterstain for cell segmentation and localization context in multi-channel images. |
| Imaging Software | Fiji/ImageJ | Open-source platform for initial image preprocessing, manual annotation, and basic analysis. |
| | Bitplane Imaris, Leica LAS X | Advanced 3D/4D image visualization, manual object tracking, and generation of ground truth data. |
| Deep Learning Frameworks | PyTorch, TensorFlow | Core open-source libraries for building, training, and validating custom CNN models. |
| Specialized Libraries | Cellpose, StarDist | Pretrained models for general cell and nucleus segmentation, useful for transfer learning. |
| | scikit-image, OpenCV | Provide essential algorithms for image preprocessing and post-processing (filters, thresholding). |
| Annotation Tools | LabelBox, CVAT | Web-based platforms for efficient collaborative labeling of microscopy images to create training datasets. |
| Computational Hardware | GPU (NVIDIA, CUDA-enabled) | Accelerates CNN training and inference by orders of magnitude compared to CPU-only processing. |

Application Notes for AI-Driven Nanocarrier Quantification

Modality-Specific Data Characteristics

The integration of multimodal imaging data is critical for training robust AI models in nanocarrier research. Each modality provides complementary structural and functional information.

Table 1: Quantitative Comparison of Imaging Modalities for Nanocarrier Analysis

| Modality | Resolution (Typical) | Depth of Field | Sample Preparation | Key Data for AI | Throughput | Live-Cell Capability |
|---|---|---|---|---|---|---|
| TEM | < 1 nm | Very Thin | Fixed, dehydrated, stained (negative/positive) | 2D projection, internal morphology, size distribution | Low | No |
| SEM | 1-10 nm | High | Fixed, dehydrated, conductive coating | 3D surface topology, size, aggregation state | Medium | No (except ESEM) |
| Cryo-EM | 2-5 Å (single-particle) | Thin Vitrified Layer | Rapid vitrification, no stain | Near-native 3D structure, conformational heterogeneity | Low-Medium | No (but native-like) |
| Fluorescence Microscopy | 200-300 nm (diffraction-limited) | High (confocal: optical sectioning) | Labeled (fluorescent dyes, proteins) | Dynamic tracking, colocalization, pharmacokinetics | High | Yes |

Table 2: AI-Relevant Data Outputs and Challenges

| Modality | Primary Output Format | Key Quantitative Features for DL | Common Artifacts & Preprocessing Needs |
|---|---|---|---|
| TEM | 2D Grayscale Image | Particle diameter, core-shell distinction, lamellarity, shape eccentricity | Stain precipitation, aggregation during drying, beam damage. Requires contrast normalization, denoising. |
| SEM | 2D/3D Topographic Image | Surface roughness, porosity, particle clustering, size distribution | Charging, edge effects, metal coating thickness. Requires segmentation, edge detection. |
| Cryo-EM | 2D Micrograph Projections → 3D Density Map | High-resolution atomic/molecular contours, ligand binding sites, structural variability | Ice contamination, particle orientation bias, low signal-to-noise. Requires extensive particle picking, classification, 3D reconstruction. |
| Fluorescence Microscopy | 2D/3D/4D (Time) Multi-channel Image | Intensity over time (release kinetics), co-localization coefficients (targeting), particle trajectory & diffusion rates | Photobleaching, background autofluorescence, spectral bleed-through. Requires deconvolution, background subtraction, tracking algorithms. |

Integration into AI/Deep Learning Pipelines

The multimodal data feed into different stages of an AI pipeline for nanocarrier quantification and prediction.

Table 3: Mapping Modalities to AI Pipeline Stages

| Pipeline Stage | TEM/SEM Input Role | Cryo-EM Input Role | Fluorescence Microscopy Input Role |
|---|---|---|---|
| Detection & Segmentation | Ground truth for size/shape; trains U-Net/Mask R-CNN models. | High-fidelity shape prior for model initialization. | Labels for dynamic object detection in complex backgrounds. |
| Classification & Phenotyping | Classifies based on internal structure (e.g., multilamellar vs. unilamellar vesicles). | Classifies conformational states or ligand-binding occupancy. | Classifies behavior (e.g., bound, internalized, free-diffusing). |
| Quantification & Regression | Measures precise nanoscale dimensions (diameter, membrane thickness). | Quantifies binding site occupancy or structural flexibility. | Quantifies release kinetics, targeting efficiency in cells/organs. |
| Predictive Modeling | Provides structural correlates for in vitro performance (e.g., loading capacity). | Informs structure-activity relationships (SAR) at atomic level. | Generates dynamic data for PK/PD and efficacy prediction models. |

Experimental Protocols for Data Generation

Protocol: TEM Sample Preparation and Imaging for Lipid Nanoparticles (LNPs)

Objective: To obtain high-contrast 2D images of LNP internal structure for AI-based segmentation.

Materials (Research Reagent Solutions Toolkit):

- Phosphotungstic Acid (PTA, 2% w/v, pH 7.0): Negative stain; enhances contrast by embedding around particles.

- Formvar/Carbon-coated Copper Grids (300 mesh): Support film for sample deposition.

- Glow Discharger: Creates hydrophilic grid surface for even sample spread.

- Ultrafiltration Devices (e.g., Amicon filters): For buffer exchange/concentration.

- Transmission Electron Microscope (e.g., JEOL JEM-1400Plus, 120kV).

Procedure:

- Grid Preparation: Glow-discharge grids for 30-45 seconds to create a hydrophilic surface.

- Sample Application: Dilute LNP sample in appropriate buffer (e.g., 10 mM HEPES). Pipette 5-10 µL onto the grid. Allow adsorption for 60 seconds.

- Staining: Blot excess liquid with filter paper. Immediately apply 10 µL of 2% PTA stain for 30 seconds. Blot thoroughly.

- Drying: Air-dry the grid completely in a petri dish.

- Imaging: Insert grid into TEM. Image at 80-120 kV. Acquire multiple micrographs at various magnifications (e.g., 20,000x, 50,000x, 100,000x) across the grid.

- Data Export: Save images in lossless formats (TIFF, DM4) retaining metadata (scale, kV).

Protocol: Cryo-EM Single Particle Analysis of Protein-Conjugated Nanocarriers

Objective: To determine the near-native 3D structure and binding site of a targeting moiety on a nanocarrier.

Materials (Research Reagent Solutions Toolkit):

- Quantifoil R1.2/1.3 or UltrAuFoil Grids: Holey carbon (Quantifoil) or holey gold (UltrAuFoil) support films for vitrification.

- Vitrobot (or equivalent plunge freezer): For controlled, rapid vitrification.

- Liquid Ethane: Cryogen for vitrification.

- Cryo-Electron Microscope (e.g., Thermo Fisher Scientific Titan Krios, 300kV, with Gatan K3 detector).

- Relion/CryoSPARC/EMAN2 Software Suites: For computational processing.

Procedure:

- Grid Preparation: Plasma clean grids to ensure uniform hydrophilicity.

- Vitrification: Apply 3 µL of purified sample (~3 mg/mL) to grid. Blot for 3-6 seconds at 100% humidity (4°C) and plunge freeze into liquid ethane. Store in liquid nitrogen.

- Screening & Data Collection: Screen for ice quality. Collect automated movie data across a defocus range of -1.0 to -2.5 µm with dose fractionation (40 frames, 50 e⁻/Ų total dose).

- AI-Driven Processing (Typical CryoSPARC Workflow):

a. Patch-based motion correction and CTF estimation.

b. Template-free particle picking using `Topaz` (deep learning) or `Blob picker`.

c. 2D classification to remove junk particles.

d. Ab initio 3D reconstruction and heterogeneous refinement to separate structural classes.

e. Non-uniform refinement and local resolution estimation.

- Model Building & Quantification: Fit atomic models (if available) into density. Analyze density maps for ligand occupancy.
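The dose-fractionation settings imply 50 / 40 = 1.25 e⁻/Ų per frame. The sketch below makes that arithmetic explicit and derives the exposure time from the detector dose rate and the calibrated pixel size; both of those inputs are hypothetical and should be replaced with values measured on the actual microscope.

```python
def dose_plan(total_dose_e_per_A2, n_frames, dose_rate_e_per_px_s, pixel_size_A):
    """Per-frame dose, total exposure and frame time for a dose-fractionated movie.

    dose_rate_e_per_px_s: dose rate measured at the detector (electrons/pixel/s).
    pixel_size_A: calibrated pixel size at the specimen level (Angstrom/pixel).
    """
    # Electrons per pixel divided by pixel area gives electrons per square Angstrom.
    dose_rate_e_per_A2_s = dose_rate_e_per_px_s / pixel_size_A ** 2
    exposure_s = total_dose_e_per_A2 / dose_rate_e_per_A2_s
    return {
        "dose_per_frame_e_per_A2": total_dose_e_per_A2 / n_frames,
        "exposure_s": exposure_s,
        "frame_time_s": exposure_s / n_frames,
    }


# 50 e-/A^2 over 40 frames as in the protocol; dose rate and pixel size are hypothetical.
print(dose_plan(50.0, 40, dose_rate_e_per_px_s=15.0, pixel_size_A=0.83))
```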

Protocol: Live-Cell Fluorescence Microscopy for Nanocarrier Tracking & Drug Release

Objective: To quantify cellular uptake, intracellular trafficking, and payload release kinetics of fluorescently labeled nanocarriers.

Materials (Research Reagent Solutions Toolkit):

- Cell Culture (e.g., HeLa, HepG2): Relevant cell line for study.

- Fluorescent Nanocarrier: Dual-labeled: lipophilic dye (e.g., DiD) in membrane/coat & encapsulated cargo (e.g., FITC-dextran, Doxorubicin).

- Confocal/Spinning Disk Microscope with environmental chamber (37°C, 5% CO₂).

- Image Analysis Software: (e.g., FIJI/ImageJ, Imaris, CellProfiler).

Procedure:

- Cell Seeding: Seed cells on glass-bottom dishes 24 h before imaging so that they reach 60-70% confluency.

- Incubation & Imaging: Replace medium with pre-warmed medium containing labeled nanocarriers. Immediately place dish on microscope stage.

- Time-Lapse Acquisition: Acquire multi-channel (e.g., DiD: Ex/Em 644/665 nm, FITC: 494/518 nm) z-stacks every 5-10 minutes for 2-24 hours.

- Data Processing & AI Analysis (using FIJI/TrackMate): a. Background subtraction (rolling ball). b. Deconvolution (if using widefield). c. Particle detection and tracking: use a TrackMate deep learning detector or the LoG detector, then apply the Simple LAP tracker to generate trajectories. d. Colocalization analysis: calculate Manders' or Pearson's coefficients between channels over time to quantify payload release. e. Trajectory analysis: calculate the mean squared displacement (MSD) to classify diffusion modes (confined, directed, free).
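Steps d and e can be sketched in NumPy, assuming trajectories have been exported from the tracking step as arrays of positions sampled at a fixed frame interval. The MSD is time-averaged over each trajectory, and the exponent α from a log-log fit serves as a rough classifier (α ≈ 1 free, α < 1 confined, α > 1 directed); these cutoffs are a rule of thumb that ignores localization error. The Manders' function implements one thresholded variant with the denominator summed over all pixels; definitions differ between tools, so the variant used should be reported.

```python
import numpy as np


def msd(track, max_lag=None):
    """Time-averaged mean squared displacement for one trajectory.

    track: (T, D) positions (e.g., in um) at a fixed frame interval.
    Returns MSD values for lags 1..max_lag (in frames).
    """
    track = np.asarray(track, dtype=float)
    n = len(track)
    max_lag = max_lag or n // 4  # heuristic: short lags have the most averaging
    if not 1 <= max_lag < n:
        raise ValueError("max_lag must be between 1 and len(track) - 1")
    return np.array([
        np.mean(np.sum((track[lag:] - track[:-lag]) ** 2, axis=1))
        for lag in range(1, max_lag + 1)
    ])


def anomalous_exponent(msd_values, dt):
    """Slope alpha of log(MSD) versus log(lag time)."""
    lags = dt * np.arange(1, len(msd_values) + 1)
    alpha, _ = np.polyfit(np.log(lags), np.log(msd_values), 1)
    return alpha


def pearson_coloc(ch1, ch2):
    """Pixel-wise Pearson correlation between two channels."""
    return np.corrcoef(ch1.ravel().astype(float), ch2.ravel().astype(float))[0, 1]


def manders(ch1, ch2, thr1, thr2):
    """M1: fraction of total ch1 intensity in pixels where ch2 > thr2; M2 vice versa."""
    ch1, ch2 = ch1.astype(float), ch2.astype(float)
    m1 = ch1[ch2 > thr2].sum() / ch1.sum()
    m2 = ch2[ch1 > thr1].sum() / ch2.sum()
    return m1, m2
```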

Visualizations (Diagrams)

Diagram: TEM Data Pipeline for AI

Diagram: Cryo-EM SPA AI Analysis Workflow

Diagram: Multimodal Data Fusion in AI Model

Within the thesis framework of AI-driven nanocarrier quantification for drug development, this protocol details the integrated pipeline from raw biological image acquisition to statistically validated insights. This pipeline is critical for the high-throughput, reproducible analysis of nanocarrier cellular uptake, distribution, and efficacy, replacing subjective manual quantification with objective, scalable deep learning (DL) methods.

The Integrated AI Pipeline: Protocols and Application Notes

Phase 1: Image Acquisition & Preprocessing

Protocol 2.1.1: Standardized Confocal Microscopy for Nanocarrier Imaging

- Objective: Acquire high-quality, consistent z-stack images of cells incubated with fluorescently labeled nanocarriers.

- Materials: Cell culture (e.g., HeLa, MCF-7), fluorescent nanocarriers, confocal microscope (e.g., Zeiss LSM 980), glass-bottom dishes.

- Procedure:

- Seed cells at defined density (e.g., 50,000 cells/dish) and incubate for 24h.

- Treat with nanocarriers at desired concentration for a set time (e.g., 1-24h).

- Fix with 4% PFA for 15 min, stain nuclei (DAPI) and cytoskeleton (Phalloidin-488).

- Acquire z-stacks (0.5 µm step size) using a 63x oil immersion objective, ensuring non-saturating pixel intensity.

- Export images as 16-bit TIFF files, maintaining a consistent naming convention (e.g., `SampleID_Channel_Date.tif`).
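Enforcing the naming convention at export time makes downstream grouping by sample and channel automatic. A minimal sketch using the standard library; the allowed characters, the ban on underscores inside fields, and the YYYYMMDD date format are assumptions to adapt to the lab's own scheme.

```python
import re
from datetime import datetime
from pathlib import Path

# SampleID_Channel_Date.tif; field characters and YYYYMMDD dates are assumptions.
NAME_PATTERN = re.compile(
    r"^(?P<sample>[A-Za-z0-9-]+)_(?P<channel>[A-Za-z0-9-]+)_(?P<date>\d{8})\.tiff?$"
)


def parse_image_name(path):
    """Return {'sample', 'channel', 'date'} parsed from a file name, or raise ValueError."""
    name = Path(path).name
    match = NAME_PATTERN.match(name)
    if match is None:
        raise ValueError(f"name does not follow SampleID_Channel_Date.tif: {name}")
    fields = match.groupdict()
    datetime.strptime(fields["date"], "%Y%m%d")  # raises ValueError for impossible dates
    return fields
```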

Protocol 2.1.2: Image Preprocessing and Augmentation

- Objective: Prepare raw images for DL model training by normalizing data and artificially expanding the dataset.

- Procedure:

- Background Subtraction: Apply rolling ball algorithm (50-pixel diameter).

- Channel Alignment: Correct minor shifts between fluorescence channels using landmark registration.

- Normalization: Scale pixel intensities to a 0-1 range per image batch.

- Data Augmentation (On-the-fly during training): Apply random transformations including rotation (±45°), horizontal/vertical flips, and minor brightness/contrast adjustments (±10%).
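Background subtraction and batch-level normalization can be sketched with scikit-image's `rolling_ball` (available in recent scikit-image releases). Two assumptions are flagged: the protocol's 50-pixel ball diameter is interpreted as a radius of 25 pixels, and scikit-image defines the ball in intensity units as well as pixels, so its behaviour on 16-bit data may differ from FIJI's Subtract Background; comparing both on a few images is advisable.

```python
import numpy as np
from skimage.restoration import rolling_ball


def subtract_background(img, ball_diameter_px=50):
    """Rolling-ball background subtraction on a single 2D channel.

    The 50-pixel diameter from the protocol is converted to a radius of 25.
    The image is cast to float32 so the subtraction cannot wrap around.
    """
    img = np.asarray(img, dtype=np.float32)
    background = rolling_ball(img, radius=max(1, ball_diameter_px // 2))
    return img - background


def normalize_batch(batch):
    """Scale a stack of images to [0, 1] using the batch-wide minimum and maximum."""
    batch = np.asarray(batch, dtype=np.float32)
    lo, hi = float(batch.min()), float(batch.max())
    return (batch - lo) / max(hi - lo, 1e-12)
```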

Phase 2: Model Training & Segmentation

Protocol 2.2.1: U-Net Model Training for Nanocarrier Instance Segmentation

- Objective: Train a deep learning model to precisely identify and outline individual nanocarriers within cellular images.

- Materials: Ground truth dataset (≥200 manually annotated images), GPU workstation (e.g., NVIDIA A100), Python with PyTorch/TensorFlow.

- Procedure:

- Data Preparation: Split image dataset into Training (70%), Validation (15%), Test (15%).

- Model Architecture: Implement a standard U-Net with ResNet-34 encoder pre-trained on ImageNet.

- Training: Use Adam optimizer (lr=1e-4), Dice-BCE loss function, batch size of 8 for 100 epochs.

- Validation: Monitor validation loss and Dice Coefficient to avoid overfitting; implement early stopping.

- Inference: Apply the trained model to new images to generate binary segmentation masks, then separate individual nanocarriers by connected-component labeling.
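The 70/15/15 split is best done on whole images, and ideally whole biological replicates, before patch generation; otherwise tiles from one image can land in both training and test sets and inflate test scores. A minimal sketch with a seeded shuffle for reproducibility; the seed value and the rounding rule are assumptions.

```python
import random


def split_dataset(image_ids, fractions=(0.70, 0.15, 0.15), seed=42):
    """Shuffle IDs reproducibly and split them into train/validation/test lists."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    ids = sorted(image_ids)  # fixed starting order so the seed fully determines the split
    random.Random(seed).shuffle(ids)
    n_train = int(round(fractions[0] * len(ids)))
    n_val = int(round(fractions[1] * len(ids)))
    return ids[:n_train], ids[n_train:n_train + n_val], ids[n_train + n_val:]
```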

Diagram 1: Core AI Image Analysis Pipeline

Phase 3: Quantitative Feature Extraction

Protocol 2.3.1: Extraction of Morphometric and Intensity Features

- Objective: Convert segmentation masks into quantitative tabular data.

- Procedure: Using Python (scikit-image, pandas), for each detected nanocarrier object, extract:

- Morphometric: Area (µm²), Perimeter, Circularity, Feret Diameter.

- Spatial: Centroid coordinates (x, y, z), Distance to Nucleus.

- Intensity: Mean, Max, and Total fluorescence intensity per particle.
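A dependency-light sketch of per-particle feature extraction is shown below. It assumes a binary mask and a matching intensity image, separates particles with 4-connected component labeling, and computes area, equivalent diameter, centroid, and mean/max/total intensity. Perimeter, circularity (commonly defined as 4πA/P²), Feret diameter, and distance to the nucleus are omitted for brevity; in recent scikit-image versions, `skimage.measure.regionprops_table` reports `perimeter`, `feret_diameter_max`, and `intensity_mean` directly and is much faster than this pure-Python flood fill. Function names are hypothetical.

```python
from collections import deque
import math

import numpy as np


def label_components(mask):
    """Label 4-connected foreground regions of a boolean mask; returns (labels, count)."""
    labels = np.zeros(mask.shape, dtype=np.int32)
    n_rows, n_cols = mask.shape
    count = 0
    for r in range(n_rows):
        for c in range(n_cols):
            if mask[r, c] and labels[r, c] == 0:
                count += 1  # new particle found: flood-fill it
                labels[r, c] = count
                queue = deque([(r, c)])
                while queue:
                    y, x = queue.popleft()
                    for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                        if (0 <= ny < n_rows and 0 <= nx < n_cols
                                and mask[ny, nx] and labels[ny, nx] == 0):
                            labels[ny, nx] = count
                            queue.append((ny, nx))
    return labels, count


def extract_features(mask, intensity, pixel_size_um=1.0):
    """One dict per particle: area, equivalent diameter, centroid, intensity stats."""
    labels, count = label_components(np.asarray(mask, dtype=bool))
    rows = []
    for pid in range(1, count + 1):
        ys, xs = np.nonzero(labels == pid)
        values = intensity[ys, xs].astype(np.float64)
        area_um2 = ys.size * pixel_size_um ** 2
        rows.append({
            "particle_id": pid,
            "area_px": int(ys.size),
            "area_um2": area_um2,
            "equiv_diameter_um": 2.0 * math.sqrt(area_um2 / math.pi),
            "centroid_yx": (float(ys.mean()), float(xs.mean())),
            "mean_intensity": float(values.mean()),
            "max_intensity": float(values.max()),
            "total_intensity": float(values.sum()),
        })
    return rows
```

The returned list of dicts converts directly into a per-particle table with `pandas.DataFrame(rows)`.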

Table 1: Example Feature Extraction Output for Nanocarrier Analysis

| Sample ID | Particle ID | Area (px²) | Circularity | Mean Intensity (a.u.) | Distance to Nucleus (px) | Cellular Region |
|---|---|---|---|---|---|---|
| Ctrl_1 | 1 | 45.2 | 0.87 | 1256.7 | 15.3 | Cytoplasm |
| Ctrl_1 | 2 | 38.9 | 0.91 | 1102.4 | 8.7 | Perinuclear |
| Treat_1 | 1 | 52.3 | 0.78 | 4500.5 | 5.1 | Perinuclear |

Phase 4: Statistical Insight & Biological Interpretation

Protocol 2.4.1: Statistical Workflow for Comparative Studies

- Objective: Determine significant differences in nanocarrier parameters between experimental groups.

- Procedure:

- Data Aggregation: Calculate per-sample metrics (e.g., mean particle count/cell, total fluorescence intensity).

- Normality Test: Perform Shapiro-Wilk test on aggregated data.

- Hypothesis Testing: For normal data, use ANOVA with post-hoc Tukey test; for non-normal, use Kruskal-Wallis with Dunn's test.

- Dimensionality Reduction: Apply Uniform Manifold Approximation and Projection (UMAP) to visualize high-dimensional feature clustering.

- Correlation Analysis: Compute Spearman's correlation between uptake metrics and cellular assay results (e.g., viability).
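For the correlation step, Spearman's ρ is Pearson's correlation computed on ranks, with tied values given their average rank. The NumPy sketch below makes that explicit; `scipy.stats.spearmanr` returns the same coefficient together with a p-value and would normally be preferred. It assumes the inputs are already aggregated per sample (for example, one uptake value and one viability value per well), consistent with the aggregation step above.

```python
import numpy as np


def average_ranks(values):
    """Rank data 1..n, giving tied values the mean of the ranks they span."""
    values = np.asarray(values, dtype=np.float64)
    order = np.argsort(values, kind="mergesort")
    sorted_vals = values[order]
    ranks = np.empty(len(values), dtype=np.float64)
    i = 0
    while i < len(values):
        j = i
        while j + 1 < len(values) and sorted_vals[j + 1] == sorted_vals[i]:
            j += 1  # extend the run of tied values
        ranks[order[i:j + 1]] = (i + j) / 2.0 + 1.0  # mean of ranks i+1 .. j+1
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rank correlation: Pearson correlation of the average ranks."""
    return float(np.corrcoef(average_ranks(x), average_ranks(y))[0, 1])
```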

Diagram 2: From Segmentation to Statistical Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for AI-Powered Nanocarrier Quantification Research

| Item | Function/Application | Example Product/Brand |
|---|---|---|
| Fluorescent Nanocarriers | Enable visualization and tracking under microscopy (e.g., liposomes or polymeric NPs labeled with Cy5, FITC, or Rhodamine). | Merck (Sigma-Aldrich) liposomes; Creative PEGWorks PLGA NPs. |
| Fluorescent Organelle Markers | Provide spatial context for co-localization analysis (e.g., LysoTracker, MitoTracker). | Thermo Fisher Scientific CellLight BacMam 2.0 reagents. |
| High-Resolution Confocal Microscope | Acquire high-quality 3D image stacks for precise segmentation. | Zeiss LSM 980 with Airyscan 2; Nikon A1R HD25. |
| Image Annotation Software | Create ground truth data for model training by manually labeling nanocarriers. | Nikon NIS-Elements AR; Label Studio (open source). |
| DL Training Platform | User-friendly environment to build, train, and deploy segmentation models without extensive coding. | Aivia Cloud (Leica); DeepCell (Van Valen Lab); Ilastik. |
| Statistical Analysis Software | Perform advanced statistical testing and data visualization. | GraphPad Prism; RStudio with ggplot2; Python (SciPy, seaborn). |

Building Your Pipeline: A Step-by-Step Guide to AI-Driven Nanocarrier Analysis

Within the AI pipeline for nanocarrier quantification in drug development, the initial curation and preprocessing of a high-quality training dataset is the foundational step determining model success. This dataset, comprising microscopic or spectral images of nanocarriers (e.g., lipid nanoparticles, polymeric micelles), must be meticulously assembled to train deep learning models for tasks like particle counting, size distribution analysis, and morphology classification.

Data Acquisition & Source Curation

Primary data sources for nanocarrier research include experimental imaging techniques. The following table summarizes key modalities:

Table 1: Primary Imaging Modalities for Nanocarrier Dataset Acquisition

| Modality | Typical Resolution | Key Output | Advantage for AI Training | Common Artifacts to Preprocess |
|---|---|---|---|---|
| Transmission Electron Microscopy (TEM) | < 1 nm | 2D grayscale images | High-resolution, detailed morphology | Sample preparation artifacts, staining variability, agglomeration |
| Cryo-Electron Microscopy (Cryo-EM) | ~3-5 Å | 2D particle projections/3D reconstructions | Near-native state, minimal drying artifacts | Vitrification defects, low signal-to-noise in raw micrographs |
| Atomic Force Microscopy (AFM) | ~1 nm (vertical) | 3D height maps (topography) | Quantitative height data, works in liquid | Tip convolution effects, scan line noise |
| Super-Resolution Fluorescence Microscopy (e.g., STORM) | ~20 nm | 2D localization maps | Specific labeling, dynamic tracking | Labeling density issues, blinking artifacts |
| Dynamic Light Scattering (DLS) | Not an imaging method; reports an ensemble hydrodynamic diameter distribution | Size distribution plots | Rapid, ensemble measurement in solution | Polydispersity skew, dust contamination peaks |

Detailed Preprocessing Protocol

The following protocol details the standard workflow for preparing a raw image dataset for model training.

Protocol 3.1: Standardized Image Preprocessing Pipeline for Nanocarrier TEM Data

Objective: To convert raw TEM micrographs into a normalized, augmented, and annotated dataset suitable for supervised deep learning.

Materials & Input:

- Raw TEM image files (.tiff, .dm3, .mrc formats)

- Annotation software (e.g., Fiji/ImageJ with LabKit, MITK, or commercial solutions)

- Computational environment (Python with libraries: OpenCV, Scikit-image, NumPy, Albumentations)

Procedure:

Format Standardization:

- Convert all images to a consistent lossless format (e.g., 16-bit PNG or TIFF).

- Extract and log metadata (scale, magnification, detector type).

Quality Control & Filtering:

- Manually or semi-automatically remove images with critical flaws: severe drift, contamination, incorrect defocus, or overcrowding that prevents unambiguous annotation.

- Establish minimum acceptance criteria (e.g., >80% of nanoparticles in focus, scale bar present).

Basic Intensity Normalization:

- Apply contrast-limited adaptive histogram equalization (CLAHE) to standardize intensity ranges across batches.

- Subtract uneven background illumination using a rolling-ball or top-hat filter.

Noise Reduction:

- Apply a mild non-local means or Gaussian filter to reduce high-frequency noise, balancing detail preservation.

- (For Cryo-EM) Use patch-based denoising algorithms (e.g., Topaz Denoise) as a preprocessing step.

Annotation & Ground Truth Generation:

- For Instance Segmentation: Manually label the boundary of each distinct nanocarrier using a polygon tool. Export masks in COCO or Pascal VOC format.

- For Object Detection: Draw bounding boxes around each particle. Log coordinates and class (e.g., "intact," "aggregated," "ruptured").

- For Size Distribution: Annotate a known number of particles of a reference standard (e.g., gold nanoparticles) to validate scale accuracy per image.

Data Augmentation:

- Using a library like Albumentations, programmatically generate augmented variants to increase dataset robustness. Apply:

- Spatial: Random rotation (±15°), horizontal/vertical flip, slight scaling (±10%).

- Pixel-level: Random brightness/contrast variation (±10%), addition of Gaussian noise, simulated defocus blur.


Dataset Splitting:

- Partition the processed and annotated dataset into stratified subsets:

- Training Set: 70% (for model weight optimization).

- Validation Set: 15% (for hyperparameter tuning and epoch selection).

- Test Set: 15% (held-out, for final unbiased performance evaluation).

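Two of the per-image checks in this protocol, rejecting defocused micrographs and validating scale against a reference standard, can be scripted. The sketch below rests on stated assumptions: it scores sharpness with the variance of a discrete Laplacian (a common image-level focus proxy, not the per-particle ">80% in focus" criterion itself) and compares the measured mean size of reference particles with their nominal diameter. The sharpness threshold, the 10% scale tolerance, and all function names are hypothetical and would need calibrating on known good and bad images.

```python
import numpy as np


def laplacian_variance(image):
    """Image-level sharpness score: variance of the 4-neighbour Laplacian (higher = sharper)."""
    img = np.asarray(image, dtype=np.float64)
    lap = (img[:-2, 1:-1] + img[2:, 1:-1] + img[1:-1, :-2] + img[1:-1, 2:]
           - 4.0 * img[1:-1, 1:-1])
    return float(lap.var())


def scale_relative_error(ref_diameters_px, nm_per_px, nominal_diameter_nm):
    """Relative error between the measured mean size of a reference standard
    (e.g., 50 nm gold nanoparticles) and its nominal diameter."""
    if len(ref_diameters_px) == 0:
        raise ValueError("no reference particles annotated")
    measured_nm = float(np.mean(ref_diameters_px)) * nm_per_px
    return abs(measured_nm - nominal_diameter_nm) / nominal_diameter_nm


def passes_qc(image, has_scale_bar, ref_diameters_px, nm_per_px, nominal_diameter_nm,
              min_sharpness=1.0, max_scale_error=0.10):
    """Hypothetical acceptance rule combining scale-bar, focus and scale-accuracy checks."""
    if not has_scale_bar:
        return False
    if laplacian_variance(image) < min_sharpness:
        return False
    error = scale_relative_error(ref_diameters_px, nm_per_px, nominal_diameter_nm)
    return error <= max_scale_error
```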

Visualizing the Preprocessing Workflow

Diagram: AI Training Dataset Preprocessing Pipeline

The Scientist's Toolkit: Key Reagent & Material Solutions

Table 2: Essential Research Reagents for Generating Nanocarrier Imaging Data

| Item | Function in Dataset Creation | Example/Note |
|---|---|---|
| Reference Standards (e.g., Gold Nanoparticles) | Provides scale calibration and validates imaging system resolution. Critical for deriving quantitative size data. | 10 nm, 50 nm, 100 nm citrate-stabilized AuNPs. |
| Negative Stain Reagents (for TEM) | Enhances contrast of biological or soft-matter nanocarriers by embedding them in a heavy metal salt. | 1-2% uranyl acetate or phosphotungstic acid. |
| Cryo-EM Grids & Vitrification System | Supports the nanocarrier sample in near-native, vitrified ice for Cryo-EM imaging. | Quantifoil or C-flat holey carbon grids; Vitrobot. |
| Specific Fluorescent Labels (for SRM) | Enables super-resolution tracking of nanocarrier components (e.g., lipid, payload). | Alexa Fluor dyes, functionalized quantum dots. |
| Size Exclusion Chromatography (SEC) Columns | Purifies nanocarrier formulations to remove aggregates and free ligand before imaging, ensuring a homogeneous dataset. | Sepharose, Superdex columns for in-line purification. |
| AFM Cantilevers & Calibration Gratings | Essential for generating accurate topographic data. Tip shape affects resolution. | Silicon nitride cantilevers; TGZ1/TGQ1 calibration grids. |

Data Annotation & Quality Assurance Protocol

Protocol 6.1: Multi-Expert Consensus Annotation for Ground Truth

Objective: To establish high-fidelity ground truth labels by mitigating individual annotator bias.

Procedure:

- Expert Panel: Engage a minimum of three domain experts (e.g., experienced microscopists).

- Independent Annotation: Each expert annotates the same subset of images (~100-200) using standardized guidelines.

- Consensus Calculation: Use Intersection-over-Union (IoU) for segmentation or F1-score for bounding boxes to measure pairwise agreement.

- Adjudication: For objects where IoU < 0.7, the experts jointly review the annotations to reach a consensus label.

- Guideline Refinement: Update annotation guidelines based on adjudication discussions to improve consistency.

- Final Label Generation: Apply the refined guidelines to the full dataset, with periodic cross-checks.
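The pairwise-agreement and adjudication rule can be sketched as follows. It assumes each expert's annotation of one object is available as a binary mask and that objects have already been matched across experts (for example, by maximum overlap). The 0.7 threshold comes from the adjudication step above; the function names and the convention that two empty masks count as perfect agreement are illustrative choices.

```python
from itertools import combinations

import numpy as np


def mask_iou(a, b):
    """Intersection-over-Union of two binary masks."""
    a, b = np.asarray(a, dtype=bool), np.asarray(b, dtype=bool)
    union = np.logical_or(a, b).sum()
    if union == 0:
        return 1.0  # both experts left the region empty: treat as agreement
    return float(np.logical_and(a, b).sum() / union)


def pairwise_agreement(masks_by_expert, threshold=0.7):
    """IoU for every pair of experts on one matched object, plus an adjudication flag."""
    scores = {
        (i, j): mask_iou(masks_by_expert[i], masks_by_expert[j])
        for i, j in combinations(sorted(masks_by_expert), 2)
    }
    needs_adjudication = any(score < threshold for score in scores.values())
    return scores, needs_adjudication
```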

Diagram: Multi-Expert Consensus Annotation Workflow

Quantitative Dataset Metrics & Logging

A curated dataset must be documented with key metrics to inform users of its characteristics and limitations.

Table 3: Essential Metadata & Quality Metrics for a Curated Dataset

| Metric Category | Specific Metric | Target/Example Value | Purpose |
|---|---|---|---|
| Basic Statistics | Total number of images | e.g., 5,000 | Indicates dataset scale. |
| | Number of annotated instances | e.g., 125,000 particles | Indicates label density. |
| | Average instances per image | e.g., 25 ± 10 | Informs minibatch sampling. |
| Class Balance | Distribution across labeled classes | e.g., Intact: 85%, Aggregated: 10%, Ruptured: 5% | Highlights potential bias. |
| Annotation Quality | Inter-annotator agreement (Mean IoU) | > 0.85 | Quantifies label reliability. |
| Spatial Resolution | Pixel size (nm/pixel) | e.g., 0.5 nm/px (TEM) | Determines detectable features. |
| Split Composition | Instance count per split (Train/Val/Test) | Respects stratification rules | Ensures representative evaluation. |

In the development of an AI-driven deep learning pipeline for the quantification of therapeutic nanocarriers in biological imaging (e.g., TEM, SEM, fluorescence microscopy), the creation of accurate ground truth data is the critical bottleneck. This step directly dictates model performance. Within the thesis framework, this stage follows sample preparation and imaging, and precedes model architecture selection and training. The choice between manual and semi-automated annotation strategies involves a fundamental trade-off between accuracy, time investment, and scalability, directly impacting the research timeline and the reliability of subsequent quantitative analyses (e.g., particle size distribution, count, and morphology).

Comparative Analysis: Manual vs. Semi-Automated Labeling

Table 1: Strategic Comparison of Annotation Approaches

| Parameter | Manual Labeling | Semi-Automated Labeling |
|---|---|---|
| Core Principle | Human expert visually identifies and delineates each nanocarrier object. | Algorithm proposes candidate objects/contours; human expert reviews and corrects. |
| Primary Tools | ImageJ/FIJI, Labelbox, CVAT, Adobe Photoshop. | Ilastik, CellProfiler, specialized pretrained U-Net models, with review in Labelbox or similar. |
| Time per Image (Estimate) | 15-60 minutes, scales linearly with particle density. | 5-20 minutes (including correction), lower scaling factor. |
| Initial Accuracy | High, subject to expert consistency. | Variable; depends on algorithm suitability and image quality. |
| Consistency | Prone to intra- & inter-observer variability. | High for algorithmic pre-selection; final consistency depends on reviewer. |
| Scalability | Low; prohibitive for large datasets (>1000 images). | High; enables annotation of large-scale datasets. |
| Expertise Required | High domain knowledge (biology/materials). | Dual expertise: domain knowledge + tool proficiency. |
| Best Suited For | Small datasets, complex/unpredictable morphologies, low signal-to-noise images, initial model training sets. | Large datasets, consistent and distinct nanocarrier appearance, high-throughput analysis. |
| Key Risk | Labeler fatigue leading to errors and inconsistency. | Algorithm bias or failure modes propagating into ground truth. |

Detailed Experimental Protocols

Protocol 3.1: Manual Annotation for TEM Nanocarrier Images

Objective: To create pixel-accurate ground truth masks for lipid nanoparticles (LNPs) in Transmission Electron Microscopy (TEM) images.

Materials: See Scientist's Toolkit (Section 5.0).

Procedure:

- Image Pre-processing: Open the raw TEM .tif file in FIJI. Apply minimal contrast stretching (Image > Adjust > Brightness/Contrast) to clarify particle boundaries without creating artifacts. Duplicate the image (Image > Duplicate) for annotation.

- Annotation: Select the Polygon Selections tool. Manually trace the boundary of each distinct nanocarrier, including the entire particle and excluding background membrane or staining artifacts. In dense clusters, trace each particle individually as far as its boundary can be discerned.

- Mask Creation: Add each polygon to the ROI Manager (press [t] after each selection), combine all ROIs for the image into one selection (ROI Manager: More >> OR (Combine)), and create a binary mask (Edit > Selection > Create Mask). This generates a new binary image in which annotated pixels are white (255) and background is black (0).

- Label Export: Save the mask image with a filename linking it to the original (e.g., original_name_mask.tif). For object detection models, measure the ROIs from the ROI Manager with "Bounding rectangle" enabled (Analyze > Set Measurements) and save the results table as a .csv file.

- Quality Control: Have a second, independent expert annotate a random subset (10-20%) of images. Calculate inter-observer agreement metrics (e.g., Dice Coefficient) to establish annotation confidence.

Protocol 3.2: Semi-Automated Annotation Using Ilastik + Manual Correction

Objective: To rapidly generate and refine ground truth for fluorescently labeled polymeric micelles in confocal microscopy stacks.

Materials: See Scientist's Toolkit (Section 5.0).

Procedure:

Part A: Pixel Classification Training (Ilastik)

- Project Setup: Open Ilastik and create a new Pixel Classification project. Import a representative subset (5-10) of raw image stacks.

- Feature Selection: In the Feature Selection tab, select relevant 2D/3D features (e.g., Edge and Texture at scales 1.0, 3.0, and 5.0 px).

- Interactive Training: In the Training tab, use the brush tool to label pixels as Nanocarrier (Signal) and Background (and Ambiguous if needed) across multiple slices and images. Live feedback shows the probabilistic output as labels are added.

- Probability Export: Once predictions are stable, open the Prediction Export tab, choose Probabilities as the export source, and export the predicted probability maps for all images as 32-bit .tif files.

Part B: Segmentation & Correction

- Binary Segmentation: In FIJI, open a probability map and apply a threshold (Image > Adjust > Threshold; the Otsu method is often suitable) to create a preliminary binary mask. Use Analyze Particles to convert the mask into object labels.

- Review & Correct: Import the original image and the preliminary mask into a platform such as Labelbox or CVAT as a new project. Use the built-in correction tools (brush, eraser, polygon edit) to fix false positives (non-particle objects) and false negatives (missed particles).

- Finalization: Export the corrected masks and/or bounding boxes in the required format (e.g., COCO JSON, Pascal VOC).
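The Otsu step in Part B picks the threshold that maximizes the between-class variance of the intensity histogram. The NumPy sketch below shows that computation applied to a probability map; it illustrates the method rather than reproducing FIJI's exact implementation (histogram binning differs), and `skimage.filters.threshold_otsu` is a tested library equivalent. The function name is hypothetical.

```python
import numpy as np


def otsu_threshold(values, bins=256):
    """Threshold maximising Otsu's between-class variance over a histogram."""
    hist, edges = np.histogram(np.ravel(values), bins=bins)
    centers = (edges[:-1] + edges[1:]) / 2.0
    p = hist.astype(np.float64) / hist.sum()  # bin probabilities
    w0 = np.cumsum(p)                         # weight of class 0 (bins 0..k)
    w1 = 1.0 - w0                             # weight of class 1
    mu_k = np.cumsum(p * centers)             # cumulative first moment up to bin k
    mu_t = mu_k[-1]                           # global mean
    between = np.zeros_like(w0)
    valid = (w0 > 0) & (w1 > 0)
    between[valid] = (mu_t * w0[valid] - mu_k[valid]) ** 2 / (w0[valid] * w1[valid])
    return float(centers[np.argmax(between)])
```

Given a probability map `prob_map`, the preliminary mask would be `prob_map > otsu_threshold(prob_map)`, which is then split into objects before manual review.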

Visualizations

Annotation Strategy Decision Workflow

Semi-Automated Annotation Two-Phase Pipeline

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Annotation

| Item | Function/Description | Example Product/Software |
|---|---|---|
| Image Analysis Suite | Core platform for manual manipulation, basic segmentation, and batch processing. | FIJI/ImageJ (open source), Adobe Photoshop (commercial). |
| Specialized ML Tool | Interactive machine learning for pixel classification, object prediction, and tracking. | Ilastik (open source), CellProfiler (open source). |
| Annotation Platform | Cloud or local platform for collaborative labeling, versioning, and correction of images/videos. | Labelbox, CVAT, Supervisely. |
| High-Resolution Monitor | Accurate visual identification of nanocarrier boundaries and subtle image features. | 4K/UHD IPS or OLED monitors with accurate color calibration. |
| Graphics Tablet | Provides pressure-sensitive, precise drawing for manual segmentation, reducing fatigue. | Wacom Intuos or Cintiq series. |
| Data Storage Solution | Secure, high-capacity storage for large raw image sets and derived annotation files. | RAID-configured NAS (Network Attached Storage) with automated backup. |

Within the broader thesis on developing an AI deep learning pipeline for automated nanocarrier quantification in microscopic images, the selection of an appropriate neural network architecture is critical. This stage directly impacts the accuracy, speed, and reliability of detecting and segmenting lipid nanoparticles (LNPs), polymeric micelles, and other drug delivery vehicles from complex biological backgrounds (e.g., tissue sections, cell cultures). U-Net, Mask R-CNN, and YOLO represent three dominant paradigms for image analysis, each with distinct strengths for semantic segmentation, instance segmentation, and real-time detection, respectively. The choice hinges on specific research questions: whether precise pixel-wise segmentation of individual nanocarriers is required (for size/morphology analysis) or if rapid counting and coarse localization suffice.

Architecture Comparison: Application Notes for Nanocarrier Analysis

The following table summarizes the core attributes, quantitative performance benchmarks (where applicable from recent literature), and suitability for nanocarrier research.

Table 1: Comparative Analysis of Architectures for Nanocarrier Image Analysis

| Feature | U-Net | Mask R-CNN | YOLO (v8-Seg) |
|---|---|---|---|
| Primary Task | Semantic segmentation (instances via post-processing, e.g., connected components or watershed) | Instance Segmentation | Real-time Detection & Segmentation |
| Core Strength | High-precision pixel-level segmentation, especially with limited data. | Simultaneous object detection, classification, and mask generation. | Extreme inference speed with competitive accuracy. |
| Typical Reported Score on Biomedical Datasets (metric differs by column; values are not directly comparable) | 0.85 - 0.95 (Dice/mIoU) | 0.78 - 0.90 (Mask mAP) | 0.75 - 0.85 (Mask mAP) |
| Inference Speed (FPS on 512x512 image) | ~10-20 (CPU), ~50-100 (GPU) | ~5-10 (GPU) | ~50-120 (GPU) |
| Data Efficiency | Excellent; performs well with hundreds of annotated images. | Requires larger datasets (thousands) for robust performance. | Requires large, diverse datasets; benefits from pre-training. |
| Output for Quantification | Pixel-wise segmentation mask. | Bounding box, class label, and segmentation mask per instance. | Bounding box, class label, and optional segmentation mask. |
| Best Suited for Nanocarrier Use-Case | Quantifying nanocarrier area/loading in a region, dense clustering analysis. | Differentiating & quantifying individual nanocarriers in aggregates, morphological classification. | High-throughput screening, real-time analysis in live-cell imaging, initial rapid detection. |

Experimental Protocols for Model Training & Validation

Protocol 3.1: Dataset Preparation for Nanocarrier Instance Segmentation

Objective: To create a standardized dataset from Transmission Electron Microscopy (TEM) or Scanning Electron Microscopy (SEM) images for training segmentation models.

- Image Acquisition: Acquire ≥100 high-resolution (≥1024x1024) TEM/SEM images of nanocarriers under various conditions (e.g., different formulations, incubation times).

- Annotation:

- For U-Net: Use LabelMe or ITK-SNAP to create pixel-perfect binary masks. Annotate all nanocarriers as a single class (e.g., 'nanocarrier' vs. 'background').

- For Mask R-CNN/YOLO: Use VGG Image Annotator (VIA) or COCO Annotator to draw polygons around each individual nanocarrier. Assign instance IDs.

- Pre-processing: Resize images to a uniform size (e.g., 512x512). Apply intensity normalization to reduce staining and contrast variability between TEM sessions, then apply augmentations: random rotation (±15°), horizontal/vertical flips, mild Gaussian blur, and contrast adjustment.

- Splitting: Split dataset 70:15:15 (Train:Validation:Test), ensuring no data leakage from the same sample across splits.
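A simple way to honor the no-leakage rule is to split by sample ID rather than by image, so every image from one formulation batch, grid, or animal lands in the same subset. The sketch below assumes a mapping from image ID to sample ID (a hypothetical `sample_of` dictionary); because whole samples are assigned, the image-level proportions only approximate 70:15:15. scikit-learn's `GroupShuffleSplit` implements the same idea.

```python
import random


def group_split(image_ids, sample_of, fractions=(0.70, 0.15, 0.15), seed=0):
    """Assign whole samples (not individual images) to train/val/test so that
    images from the same sample never appear in more than one split."""
    samples = sorted({sample_of[i] for i in image_ids})
    random.Random(seed).shuffle(samples)  # reproducible shuffle of sample IDs
    n = len(samples)
    n_train = round(fractions[0] * n)
    n_val = round(fractions[1] * n)
    train_s = set(samples[:n_train])
    val_s = set(samples[n_train:n_train + n_val])
    splits = {"train": [], "val": [], "test": []}
    for img in image_ids:
        s = sample_of[img]
        key = "train" if s in train_s else "val" if s in val_s else "test"
        splits[key].append(img)
    return splits
```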

Protocol 3.2: Transfer Learning Protocol for Mask R-CNN on Nanocarrier Data

Objective: To adapt a pre-trained Mask R-CNN model (on COCO) for nanocarrier instance segmentation.

- Base Model: Initialize with a Mask R-CNN model with a ResNet-50-FPN backbone pre-trained on MS COCO.

- Model Modification: Replace the box-classification and mask-prediction heads so the model predicts only 2 classes: 'background' and 'nanocarrier'.

- Training Regime:

- Hardware: Single NVIDIA RTX A6000 GPU (or equivalent with ≥24GB VRAM).

- Phase 1 (Frozen Backbone): Train only the head layers for 10 epochs. Use SGD optimizer with LR=0.001, momentum=0.9, weight decay=0.0001.

- Phase 2 (Fine-tuning): Unfreeze all layers. Train for 40 more epochs with a reduced LR=0.0001. Use a batch size of 2-4 depending on image size and memory.

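- Loss Function: Combined multi-task loss L = L_class + L_box + L_mask (standard implementations such as torchvision and Detectron2 also add the region proposal network's objectness and box-regression losses).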

- Validation: Monitor Mask Average Precision (Mask AP) at IoU threshold 0.5 on the validation set after each epoch. Early stopping if no improvement for 10 epochs.

Protocol 3.3: Quantitative Evaluation Protocol for Segmentation Outputs

Objective: To quantitatively assess model performance on the held-out test set.

- Metrics Calculation (per image, then averaged):

- For Segmentation Quality: Calculate Dice Similarity Coefficient (DSC) = (2 * |Pred ∩ GT|) / (|Pred| + |GT|), where GT is ground truth mask.

- For Detection/Instance Segmentation: Calculate Average Precision (AP) metrics using the COCO evaluation toolkit: AP@[.50:.95], AP@0.50, AP@0.75.

- Nanocarrier-Specific Metrics:

- Particle Count Accuracy: (1 - |Pred_Count - GT_Count| / GT_Count) * 100%.

- Size Distribution Correlation: Calculate Pearson's R between predicted nanocarrier diameter (from mask area) and ground truth diameter.

- Statistical Reporting: Report all metrics as mean ± standard deviation across the entire test set.
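The nanocarrier-specific metrics reduce to a few lines of arithmetic. The sketch assumes predicted and ground-truth particles have already been matched one-to-one (for example, at IoU ≥ 0.5) before diameters are correlated, and it derives diameter from mask area as the equivalent circular diameter d = 2·sqrt(A/π); both are assumptions, since the protocol does not define the matching rule or the diameter formula. Note that the count-accuracy formula becomes negative when the prediction exceeds twice the ground-truth count.

```python
import math

import numpy as np


def count_accuracy(pred_count, gt_count):
    """Particle count accuracy in percent: (1 - |pred - gt| / gt) * 100."""
    if gt_count == 0:
        raise ValueError("ground-truth count must be > 0")
    return (1.0 - abs(pred_count - gt_count) / gt_count) * 100.0


def equivalent_diameter(area):
    """Diameter of the circle with the same area (units follow sqrt(area))."""
    return 2.0 * math.sqrt(area / math.pi)


def diameter_pearson_r(pred_areas, gt_areas):
    """Pearson's r between matched predicted and ground-truth equivalent diameters."""
    pred_d = np.array([equivalent_diameter(a) for a in pred_areas])
    gt_d = np.array([equivalent_diameter(a) for a in gt_areas])
    return float(np.corrcoef(pred_d, gt_d)[0, 1])
```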

Visual Workflows & Architectures

Diagram 1: AI Pipeline for Nanocarrier Image Analysis

Diagram 2: Model Training & Validation Workflow

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Materials for AI-Driven Nanocarrier Quantification Experiments

| Item / Reagent | Function in the Experimental Pipeline | Example Product / Specification |
|---|---|---|
| High-Resolution Microscopy | Generates the primary input data (images) for AI analysis. | TEM (JEOL JEM-1400Flash), SEM with cryo-stage, Super-resolution Confocal Microscopy. |
| Image Annotation Software | Enables creation of accurate ground truth labels for model training. | VGG Image Annotator (VIA) (free), COCO Annotator (web-based), Labelbox (commercial). |
| Deep Learning Framework | Provides libraries and tools to build, train, and evaluate models. | PyTorch (preferred for research flexibility) or TensorFlow/Keras. |
| Specialized Model Code | Pre-implemented architectures for rapid prototyping. | Detectron2 (FAIR) for Mask R-CNN, MMDetection (OpenMMLab), Ultralytics YOLOv8. |
| GPU Computing Resource | Accelerates model training, reducing time from weeks to hours. | NVIDIA GPU (e.g., RTX 4090, A100, H100) with CUDA and cuDNN support. |
| Data Augmentation Library | Artificially expands training dataset to improve model robustness. | Albumentations (optimized for images), Torchvision Transforms. |
| Evaluation Toolkit | Standardized code to compute accuracy metrics for fair comparison. | COCO Evaluation API (for detection/segmentation), custom scripts for Dice Score. |
| High-Performance Workstation | Local machine for development, testing, and small-scale training. | CPU: ≥16 cores (Intel i9/AMD Ryzen 9), RAM: ≥64GB, SSD: ≥2TB NVMe. |

Application Notes

Within the AI pipeline for nanocarrier quantification, the training phase translates curated data into a predictive model. Hyperparameter tuning is the systematic search for the optimal architectural and training parameters that govern the learning process, directly impacting model accuracy, generalizability, and computational efficiency. For drug development professionals, this step is critical to ensure the model reliably quantifies nanocarrier uptake and distribution in biological samples, a prerequisite for pharmacokinetic and biodistribution studies.

Core Hyperparameters in Deep Learning for Image-Based Quantification

The following table summarizes key hyperparameters, their typical search ranges for convolutional neural networks (CNNs) common in image analysis, and their impact on the model and computational load.

Table 1: Key Hyperparameters for Nanocarrier Quantification CNNs

| Hyperparameter | Typical Search Range/Options | Impact on Model Performance | Computational Consideration |
|---|---|---|---|
| Learning Rate | 1e-4 to 1e-2 (log scale) | Controls step size in weight updates. Too high causes divergence; too low leads to slow convergence. | Central to training stability. Requires careful tuning, often via scheduling. |
| Batch Size | 16, 32, 64, 128 | Affects gradient estimation smoothness and memory use. Smaller batches can regularize but increase noise. | Directly determines GPU/CPU memory footprint. Larger batches speed up epochs but may reduce generalization. |
| Number of Epochs | 50 - 500+ | Defines how many times the model sees the entire dataset. Insufficient epochs underfit; too many overfit. | Primary driver of training time. Must be paired with early stopping. |
| Optimizer | Adam, SGD, RMSprop | Algorithm for updating weights. Adam is often the default; SGD with momentum can generalize better. | Adam stores two moment estimates per parameter, so it uses more memory but typically converges faster. SGD may require more epochs. |
| Network Depth/Width (e.g., # of CNN layers/filters) | 8-50+ layers, 32-512 filters | Determines model capacity. Deeper/wider networks learn complex features but risk overfitting on smaller datasets. | Parameters and compute grow roughly linearly with depth and quadratically with width (filters per layer). Large models require significant GPU RAM. |
| Weight Decay (L2 Reg.) | 1e-5 to 1e-3 | Penalizes large weights to prevent overfitting. | Adds minor compute overhead. |
| Dropout Rate | 0.2 to 0.5 | Randomly drops neurons during training to prevent co-adaptation and overfitting. | Effectively creates an ensemble of networks; increases training time slightly. |

Computational Considerations for Research Labs

Training state-of-the-art deep learning models requires significant resources. The choice of hardware and parallelization strategy is often dictated by the model's size and dataset.

Table 2: Computational Hardware & Strategy Comparison

| Resource Type | Typical Specs | Pros for Nanocarrier Research | Cons / Limitations |
|---|---|---|---|
| High-End Consumer GPU (e.g., NVIDIA RTX 4090) | 24 GB VRAM | High memory for moderate 3D image batches; cost-effective for single-lab use. | Limited multi-GPU scaling; not ideal for very large 3D volumes. |
| Data Center GPU (e.g., NVIDIA A100) | 40-80 GB VRAM | Massive memory for large 3D datasets; superior FP16 performance; NVLink for multi-GPU scaling. | Prohibitive cost; requires specialized infrastructure (cooling, power). |
| Cloud Computing (AWS, GCP, Azure) | Scalable GPU instances | No upfront capital cost; elastic scaling for hyperparameter sweeps; access to latest hardware. | Recurring costs can be high; data transfer and security protocols for clinical images are crucial. |
| CPU Cluster (Fallback) | High-core count CPUs | Can run any model without GPU dependency; good for preprocessing. | Orders of magnitude slower for deep learning training; not feasible for extensive tuning. |

Experimental Protocols

Protocol: Systematic Hyperparameter Tuning Using Bayesian Optimization

Objective: To efficiently find the optimal combination of hyperparameters (e.g., learning rate, batch size, dropout) for a CNN model quantifying nanocarrier fluorescence in confocal microscopy images.

Materials & Software:

- Curated training and validation datasets from Step 3 of the pipeline.

- Deep Learning Framework (PyTorch or TensorFlow).

- Hyperparameter tuning library (Optuna, Ray Tune).

- Computational resource (GPU-enabled workstation or cloud instance).

Procedure:

- Define Search Space: Specify the range for each hyperparameter in the tuning library's syntax (see Table 1 for guidance).

- Set Objective Function: Create a function that, given a set of hyperparameters, (a) instantiates the model, (b) trains it for a predetermined number of epochs, and (c) returns the validation loss or metric (e.g., validation set Dice score for segmentation).

- Configure Sampler: Use a Tree-structured Parzen Estimator (TPE) sampler (Bayesian optimization) to select the next hyperparameter set based on previous results; this is typically more sample-efficient than grid or random search.

- Execute Trials: Launch the tuning job. The system automatically runs multiple training trials, each with a different hyperparameter combination.

- Implement Pruning: Integrate asynchronous successive halving pruning to terminate poorly performing trials early, saving computational resources.

- Analysis: Upon completion, extract the trial with the best validation performance. Retrain the model using these optimal hyperparameters on the combined training and validation set for final evaluation on the held-out test set.

Protocol: Implementing Mixed-Precision Training to Reduce Memory Footprint

Objective: To train larger models or use larger batch sizes by reducing GPU memory consumption, potentially speeding up training.

Materials & Software: NVIDIA GPU (Pascal architecture or newer; Volta or newer for Tensor Core speedups), PyTorch with AMP (Automatic Mixed Precision) or TensorFlow with tf.keras.mixed_precision.

Procedure:

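- Enable AMP: In PyTorch, initialize a GradScaler and wrap the forward pass and loss computation in autocast.

- Scale the Backward Pass: Call backward() on the scaled loss (scaler.scale(loss).backward()), then step the optimizer through the scaler (scaler.step(optimizer)) and update the scale factor (scaler.update()).

- Monitor: Ensure no instability (NaN values) appears in the loss. The GradScaler multiplies the loss by a scale factor to prevent underflow in FP16 gradients and skips optimizer steps whose gradients contain inf/NaN values.

- Benefit: Eligible operations (e.g., convolutions and matrix multiplications) run in 16-bit floating point (FP16) while master weights stay in FP32. This substantially reduces activation memory and often increases training throughput.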
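The three AMP steps look like this in PyTorch. The tiny convolutional model and random tensors are placeholders, not a nanocarrier model. AMP is enabled only when a CUDA GPU is present; on CPU the same code runs in full precision because `enabled=False` turns both `autocast` and `GradScaler` into pass-throughs. `torch.cuda.amp.GradScaler` is used for compatibility with older releases; recent PyTorch versions prefer the equivalent `torch.amp.GradScaler("cuda")`.

```python
import torch
from torch import nn

device = "cuda" if torch.cuda.is_available() else "cpu"
use_amp = device == "cuda"  # FP16 autocast + loss scaling only on CUDA in this sketch
amp_dtype = torch.float16 if use_amp else torch.bfloat16  # CPU autocast would use bfloat16

# Placeholder segmentation-style model: 1-channel image in, 1-channel logits out.
model = nn.Sequential(nn.Conv2d(1, 8, 3, padding=1), nn.ReLU(), nn.Conv2d(8, 1, 1)).to(device)
optimizer = torch.optim.Adam(model.parameters(), lr=1e-4)
loss_fn = nn.BCEWithLogitsLoss()
scaler = torch.cuda.amp.GradScaler(enabled=use_amp)


def train_step(images, masks):
    optimizer.zero_grad(set_to_none=True)
    with torch.autocast(device_type=device, dtype=amp_dtype, enabled=use_amp):
        logits = model(images)          # eligible ops run in FP16 under autocast
        loss = loss_fn(logits, masks)
    scaler.scale(loss).backward()       # scale loss to avoid FP16 gradient underflow
    scaler.step(optimizer)              # unscales grads; skips the step on inf/NaN
    scaler.update()                     # adjust the scale factor for the next step
    return loss.item()
```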

Visualizations

Diagram 1: Bayesian Hyperparameter Tuning Loop

Diagram 2: Mixed Precision Training Data Flow

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for AI Model Training

| Item | Function in the AI Pipeline | Specification Notes |
|---|---|---|
| GPU-Accelerated Workstation/Server | Provides the parallel computational power required for training deep neural networks on large image datasets (e.g., 3D confocal stacks). | Minimum 8 GB VRAM (e.g., NVIDIA RTX 3070). For larger 3D datasets, 24+ GB VRAM (e.g., RTX 4090, A100) is recommended. |
| Cloud Compute Credits | Enables access to scalable, high-end hardware (multi-GPU, TPU) for large-scale hyperparameter sweeps and training without upfront capital investment. | Available via AWS, Google Cloud, Azure. Budget management and data egress cost controls are essential. |
| Deep Learning Framework | Provides the libraries and APIs to define, train, and evaluate neural network models. | PyTorch or TensorFlow are industry standards. Choose based on research community adoption and deployment needs. |
| Hyperparameter Tuning Library | Automates the search for optimal training parameters, drastically improving research efficiency over manual tuning. | Optuna (user-friendly), Ray Tune (scalable for distributed computing). |
| Experiment Tracking Platform | Logs hyperparameters, code versions, metrics, and model artifacts for reproducibility and comparison. | Weights & Biases (W&B), MLflow, TensorBoard. Critical for collaborative drug development projects. |
| Containerization Software | Packages the complete training environment (OS, libraries, code) into a container for seamless deployment across different compute environments. | Docker, Singularity. Ensures consistent results from a researcher's laptop to a high-performance cluster. |

Within the broader thesis on developing an AI deep learning pipeline for automated nanocarrier quantification in drug delivery research, Step 5 represents the critical transition from model validation to practical utility. This phase involves deploying the trained and validated convolutional neural network (CNN) model to analyze new, unseen experimental microscopy images. The primary objective is to quantify nanocarrier attributes—such as size distribution, concentration, and morphology—from fluorescence or electron microscopy data of novel nanoparticle formulations, enabling rapid assessment for research and development scientists.

Core Deployment Architecture & Workflow

Table 1: Deployment System Components

| Component | Specification | Function in Inference |
|---|---|---|
| Trained Model | TensorFlow/Keras (.h5) or PyTorch (.pt) file | Contains learned weights for nanocarrier detection/segmentation. |
| Preprocessing Module | Python script using OpenCV & NumPy | Standardizes new images (resizing, normalization, background subtraction) to match training data. |
| Inference Engine | TensorFlow Serving or ONNX Runtime | High-performance environment for executing model predictions on new data batches. |
| Post-processing Script | Custom Python module | Converts model output (e.g., segmentation masks) into quantitative data (count, size in nm, polydispersity). |
| Results Database | SQLite or PostgreSQL table | Stores quantitative results, image metadata, and timestamps for traceability. |
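A minimal sketch of the Preprocessing Module follows. It uses SciPy's ndimage in place of the OpenCV calls listed in the table and assumes a single-channel 2D image. It also assumes the training set was acquired at a known calibration. The background is estimated with a wide Gaussian blur, which is one common choice; rolling-ball subtraction as in ImageJ is another. The function name, the 0.78 nm/pixel training calibration, and the blur width are hypothetical. Resampling to the training calibration matters because the model learned particle sizes in pixels.

```python
import numpy as np
from scipy import ndimage


def preprocess(
    image: np.ndarray,
    nm_per_px: float,
    train_nm_per_px: float = 0.78,      # hypothetical calibration of the training set
    background_sigma_px: float = 50.0,  # hypothetical; should exceed the particle size
) -> np.ndarray:
    """Hypothetical helper: resample to the training pixel size, subtract a
    smooth background, and stretch intensities to [0, 1]."""
    img = image.astype(np.float32)

    # 1. Resample so one pixel covers the same physical size as in training.
    zoom = nm_per_px / train_nm_per_px
    if not np.isclose(zoom, 1.0):
        img = ndimage.zoom(img, zoom, order=1)

    # 2. Remove slowly varying illumination/staining background.
    img = img - ndimage.gaussian_filter(img, sigma=background_sigma_px)

    # 3. Percentile stretch: less sensitive to hot/dead pixels than min/max.
    lo, hi = np.percentile(img, [1.0, 99.0])
    return np.clip((img - lo) / max(hi - lo, 1e-8), 0.0, 1.0)


rng = np.random.default_rng(0)
raw = rng.normal(1000.0, 50.0, size=(400, 400))  # synthetic stand-in image
out = preprocess(raw, nm_per_px=0.39)              # twice as finely sampled as training
print(out.shape, float(out.min()), float(out.max()))
```

An image sampled at 0.39 nm/pixel is downsampled by a factor of 0.5, so a particle spans the same number of pixels the model saw during training.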

Diagram: Inference Pipeline for New Experimental Data

Detailed Experimental Protocol for Inference

Protocol 3.1: Running Analysis on New TEM/Confocal Images

Objective: To use the deployed AI model to automatically quantify nanocarriers from a new batch of Transmission Electron Microscopy (TEM) or confocal microscopy images.

Materials:

- New experimental image set: TEM images (.tif) or confocal images (.tif/.nd2) of novel polymeric nanocarriers.

- Deployment workstation: Computer with GPU (e.g., NVIDIA T4) and ≥16 GB RAM.

- Software environment: Docker container with all dependencies (Python 3.9, TensorFlow 2.13, OpenCV 4.8).

Procedure:

- Data Transfer & Organization:

- Transfer new microscopy images to a designated input directory (e.g., /data/new_images/).

- Ensure images are in a supported format (TIFF, PNG, ND2). Use a Bio-Formats converter if necessary.

- Configuration:

- Open the configuration file (config_inference.yaml).

- Set the input_dir path to the new image directory.

- Verify that model_path points to the correct deployed model file.

- Set the output directory (output_dir) for results.

- Run Inference Batch Script:

- Execute the main inference script from the terminal. The model generates a segmentation mask for each input image.

- Post-processing & Quantification:

- The post-processing module analyzes each mask using connected component analysis.

- For each detected object, it calculates:

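- Area (nm²): The pixel count multiplied by the square of the image's pixel-to-nanometer calibration. For example, at 0.78 nm/pixel for TEM, each pixel covers 0.78² ≈ 0.61 nm².

- Equivalent circular diameter (nm): The diameter of a circle with the same area, d = 2√(A/π).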

- Morphological descriptors (e.g., circularity).

- Output Generation:

- Results are compiled into a results.csv file in the output directory.

- A summary PDF report with overlaid detection masks and size-distribution histograms is generated.
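The post-processing and output steps can be sketched with SciPy's connected-component labelling and Python's csv module. The sketch makes several assumptions. The model output has already been thresholded into a binary mask. Objects are counted with 8-connectivity, and objects smaller than a hypothetical min_area_px are discarded as noise. Polydispersity is reported as standard deviation divided by mean of the equivalent circular diameters, which is consistent with the example values in Table 2. Circularity needs a perimeter estimate and is omitted here; skimage.measure.regionprops provides one. The helper names and the results.csv column names are illustrative.

```python
import csv
import math
import tempfile
from pathlib import Path
from typing import Dict, List

import numpy as np
from scipy import ndimage


def measure_objects(mask: np.ndarray, nm_per_px: float, min_area_px: int = 5) -> List[Dict[str, float]]:
    """Hypothetical helper: connected components -> per-object area and ECD in nm."""
    labels, _ = ndimage.label(mask > 0, structure=np.ones((3, 3), dtype=bool))  # 8-connectivity
    areas_px = np.bincount(labels.ravel())[1:]  # label 0 is background
    objects = []
    for area_px in areas_px:
        if area_px < min_area_px:  # hypothetical noise filter
            continue
        area_nm2 = float(area_px) * nm_per_px ** 2  # each pixel covers nm_per_px^2 nm^2
        objects.append({"area_nm2": area_nm2, "ecd_nm": 2.0 * math.sqrt(area_nm2 / math.pi)})
    return objects


def summarize(image_id: str, objects: List[Dict[str, float]]) -> Dict[str, object]:
    """Hypothetical helper: collapse per-object diameters into one results.csv row."""
    d = np.array([o["ecd_nm"] for o in objects], dtype=float)
    mean = float(d.mean()) if d.size else float("nan")
    sd = float(d.std(ddof=1)) if d.size > 1 else float("nan")
    return {
        "image_id": image_id,
        "count": int(d.size),
        "mean_diameter_nm": round(mean, 1),
        "std_dev_nm": round(sd, 1),
        "polydispersity_sd_over_mean": round(sd / mean, 3),
    }


def write_results(rows: List[Dict[str, object]], out_dir: str) -> Path:
    """Write one row per image to <out_dir>/results.csv."""
    out_path = Path(out_dir) / "results.csv"
    with out_path.open("w", newline="") as fh:
        writer = csv.DictWriter(fh, fieldnames=list(rows[0].keys()))
        writer.writeheader()
        writer.writerows(rows)
    return out_path


# Usage on a synthetic mask with two discs (radii 20 and 30 px) at 0.78 nm/pixel.
yy, xx = np.mgrid[:256, :256]
mask = ((yy - 64) ** 2 + (xx - 64) ** 2 <= 20 ** 2) | ((yy - 180) ** 2 + (xx - 170) ** 2 <= 30 ** 2)
objs = measure_objects(mask, nm_per_px=0.78)
row = summarize("demo_image", objs)
out_path = write_results([row], tempfile.mkdtemp())
print(row)
```

The two discs come back with equivalent diameters close to 2 × 20 × 0.78 ≈ 31.2 nm and 2 × 30 × 0.78 ≈ 46.8 nm. This kind of synthetic check is useful for catching a calibration that was applied linearly to areas instead of squared.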

Table 2: Example Inference Output for a New Image Set (Simulated Data)

| Image ID | Nanocarrier Count | Mean Diameter (nm) | Std Dev (nm) | Polydispersity (Std Dev/Mean) | Analysis Time (s) |
|---|---|---|---|---|---|
| EXPTEM001 | 247 | 112.3 | 18.7 | 0.166 | 3.4 |
| EXPTEM002 | 198 | 108.9 | 22.1 | 0.203 | 3.1 |
| EXPTEM003 | 312 | 115.4 | 25.6 | 0.222 | 3.8 |
| Batch Average | 252.3 | 112.2 | 22.1 | 0.197 | 3.4 |

Validation of Inference Results

Protocol 4.1: Spot-Check Validation Against Manual Analysis

To ensure inference reliability, a subset of new images must be validated against manual quantification.

- Random Sampling: Randomly select 5-10 images from the new experimental set.

- Manual Annotation: An experienced researcher will use ImageJ/Fiji to manually count and measure ≥50 nanocarriers per selected image.

- Comparison: Calculate the percentage error between AI and manual counts, and the correlation coefficient for size measurements.
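The comparison step can be sketched with NumPy. The sketch assumes that counts and per-image mean diameters are paired by image and that percentage error is taken relative to the manual count. The correlation is Pearson's r on per-image mean diameters. Correlating individual particles would additionally require matching each AI detection to a manual one. The function name is hypothetical, and the numbers in the usage example are placeholders, not measured data.

```python
import numpy as np


def spot_check(ai_counts, manual_counts, ai_mean_nm, manual_mean_nm):
    """Hypothetical helper: per-image count error (%) and Pearson r for mean sizes."""
    ai = np.asarray(ai_counts, dtype=float)
    manual = np.asarray(manual_counts, dtype=float)
    pct_error = 100.0 * (ai - manual) / manual  # signed, relative to manual count
    r = float(np.corrcoef(ai_mean_nm, manual_mean_nm)[0, 1])
    return {
        "pct_error_per_image": pct_error,
        "mean_abs_pct_error": float(np.mean(np.abs(pct_error))),
        "pearson_r_size": r,
    }


# Placeholder values for five spot-checked images (not real measurements).
result = spot_check(
    ai_counts=[247, 198, 312, 220, 265],
    manual_counts=[240, 205, 300, 221, 270],
    ai_mean_nm=[112.3, 108.9, 115.4, 110.2, 113.8],
    manual_mean_nm=[110.8, 109.5, 113.9, 111.0, 112.6],
)
print(round(result["mean_abs_pct_error"], 2), round(result["pearson_r_size"], 3))
```

Reporting the signed per-image errors alongside the mean absolute error shows whether the model systematically over- or under-counts, which a single correlation coefficient does not reveal.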

Diagram: Inference Validation Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Nanocarrier Experimentation & AI Analysis

| Item | Function in Experiment/Analysis | Example Product/Specification |
|---|---|---|
| Polymeric Nanoparticle Formulation | The nanocarrier of interest; provides the sample for imaging. | PLGA-PEG nanoparticles, loaded with fluorescent dye (e.g., Cy5) for tracking. |
| TEM Grids (Carbon-coated) | Support film for high-resolution imaging of nanocarrier morphology. | 300-mesh copper grids with continuous carbon film. |
| Negative Stain (e.g., Uranyl Acetate) | Enhances contrast of nanocarriers in TEM imaging. | 2% aqueous uranyl acetate solution. |
| Confocal Microscopy Slide | Chambered slide for imaging fluorescent nanocarriers in solution or cells. | #1.5 cover glass, 8-well chambered slide. |
| Calibration Standard (for size) | Provides reference for pixel-to-nanometer conversion, critical for AI quantification. | TEM grating replica (e.g., 2160 lines/mm) or fluorescent nanosphere size standard (100 nm). |
| Model Deployment Environment | Containerized software to ensure reproducible inference across lab computers. | Docker image with Python, TF, OpenCV, and the trained model. |
| High-Performance Storage | Stores large volumes of raw microscopy images and inference results. | Network-attached storage (NAS) with ≥10 TB capacity. |

Troubleshooting & Best Practices

- Mismatched Image Contrast: If the model performs poorly, ensure the new image preprocessing matches the training data (e.g., use histogram matching).

- Handling Very Large Images: For whole-slide scans, implement a tiling strategy where the image is split into overlapping patches for analysis, then results are stitched.

- Version Control: Always log the model version, preprocessing parameters, and software environment used for each inference batch to ensure reproducibility.
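The first two fixes can be sketched in NumPy. match_histogram maps the intensity quantiles of a new image onto those of a reference training image; skimage.exposure.match_histograms offers a tested implementation of the same idea. predict_tiled splits a large image into overlapping tiles, runs a model on each tile, and averages predictions where tiles overlap. The helper names, tile size, and overlap are hypothetical. So is the `model` callable, which stands for anything that maps a 2D tile to a same-shaped probability map.

```python
from typing import Callable

import numpy as np


def match_histogram(image: np.ndarray, reference: np.ndarray) -> np.ndarray:
    """Map the intensity CDF of `image` onto that of `reference` (a training image)."""
    _, src_idx, src_counts = np.unique(image.ravel(), return_inverse=True, return_counts=True)
    ref_vals, ref_counts = np.unique(reference.ravel(), return_counts=True)
    src_cdf = np.cumsum(src_counts) / image.size
    ref_cdf = np.cumsum(ref_counts) / reference.size
    mapped = np.interp(src_cdf, ref_cdf, ref_vals)  # reference intensity at the same quantile
    return mapped[src_idx].reshape(image.shape)


def predict_tiled(image: np.ndarray, model: Callable[[np.ndarray], np.ndarray],
                  tile: int = 256, overlap: int = 32) -> np.ndarray:
    """Run `model` on overlapping tiles and average predictions in the overlaps."""
    h, w = image.shape
    step = tile - overlap
    ys = list(range(0, max(h - tile, 0) + 1, step))
    xs = list(range(0, max(w - tile, 0) + 1, step))
    if ys[-1] + tile < h:  # make sure the last tile reaches the bottom edge
        ys.append(h - tile)
    if xs[-1] + tile < w:  # ... and the right edge
        xs.append(w - tile)
    out = np.zeros((h, w), dtype=np.float64)
    weight = np.zeros((h, w), dtype=np.float64)
    for y in ys:
        for x in xs:
            out[y:y + tile, x:x + tile] += model(image[y:y + tile, x:x + tile])
            weight[y:y + tile, x:x + tile] += 1.0
    return out / weight


rng = np.random.default_rng(1)
new_img = rng.gamma(2.0, 50.0, size=(600, 700))      # synthetic "new" image
train_ref = rng.normal(0.5, 0.1, size=(600, 700))     # synthetic training reference
matched = match_histogram(new_img, train_ref)
pred = predict_tiled(matched, model=lambda t: 2.0 * t)  # stand-in for a real model
```

Simple averaging in the overlaps is the most basic stitching rule. Tile borders are where a CNN has the least context, so a larger overlap reduces seam artifacts at the cost of more forward passes.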

The deployment and inference step operationalizes the AI deep learning pipeline, transforming it from a research project into a practical tool for nanocarrier quantification. By following the protocols outlined, researchers can obtain rapid, reproducible, and quantitative analysis of new experimental formulations, accelerating the iterative design and optimization cycle in nanomedicine development.

Overcoming Obstacles: Solving Common Problems in AI-Based Quantification

Within the AI-driven deep learning pipeline for nanocarrier quantification, a critical bottleneck is the scarcity of high-quality, annotated experimental data. Acquiring labeled transmission electron microscopy (TEM) or cryo-EM images of lipid nanoparticles (LNPs) and polymeric micelles is resource-intensive. This application note details proven and emerging techniques for data augmentation and synthetic data generation to combat data limitations, thereby enhancing model robustness, generalizability, and predictive accuracy in quantitative nanomedicine research.

Core Techniques & Quantitative Comparison

Data Augmentation Techniques

Data augmentation applies label-preserving transformations to existing datasets to increase their effective size and variability.

Table 1: Common Image-Based Augmentation Techniques for Nanocarrier Imaging

| Technique | Typical Parameter Range | Primary Benefit | Risk for Nanocarrier Data |
|---|---|---|---|
| Geometric: Rotation | ±10–30° | Invariance to orientation | May distort anisotropic structures |
| Geometric: Flipping | Horizontal/Vertical | Doubles (one axis) or quadruples (both axes) the effective dataset size | Can create non-physical orientations |
| Geometric: Scaling | 0.8–1.2× | Size invariance | Changes apparent particle size; corrupts size-distribution labels unless the pixel calibration is rescaled to match |
| Photometric: Brightness/Contrast | Δ ±20% | Robustness to staining variations | Can obscure low-contrast particles |
| Photometric: Gaussian Noise | σ: 0.01–0.05 (intensities normalized to [0, 1]) | Robustness to sensor noise | Excessive noise hides morphological detail |
| Elastic Deformations | Alpha: 10–50, Sigma: 4–8 | Realistic membrane/texture variation | Computationally intensive |
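A minimal NumPy/SciPy sketch of a label-preserving pipeline follows, using parameter ranges from Table 1. For segmentation, geometric transforms must be applied identically to image and mask, using nearest-neighbour interpolation for the mask so labels stay binary. Photometric transforms touch the image only. Scaling is deliberately omitted because it changes the apparent particle size the model is meant to measure. Intensities are assumed to be normalized to [0, 1], and the helper name is hypothetical. Albumentations provides tested equivalents of these transforms.

```python
import numpy as np
from scipy import ndimage


def augment(image: np.ndarray, mask: np.ndarray, rng: np.random.Generator):
    """Hypothetical helper: label-preserving augmentation of an (image, mask) pair.

    Geometric transforms hit image and mask identically; photometric ones
    hit the image only. Image is assumed to be normalized to [0, 1].
    """
    img = image.astype(np.float32)
    msk = mask.astype(np.uint8)

    # Geometric: random horizontal / vertical flips.
    if rng.random() < 0.5:
        img, msk = img[:, ::-1], msk[:, ::-1]
    if rng.random() < 0.5:
        img, msk = img[::-1, :], msk[::-1, :]

    # Geometric: rotation within +/-30 deg. Bilinear for the image, nearest-neighbour
    # for the mask (stays binary); the same boundary mode keeps the two aligned.
    angle = rng.uniform(-30.0, 30.0)
    img = ndimage.rotate(img, angle, reshape=False, order=1, mode="reflect")
    msk = ndimage.rotate(msk, angle, reshape=False, order=0, mode="reflect")

    # Photometric: contrast and brightness within +/-20 %.
    mean = img.mean()
    img = (img - mean) * rng.uniform(0.8, 1.2) + mean + rng.uniform(-0.2, 0.2)

    # Photometric: additive Gaussian noise with sigma drawn from [0.01, 0.05].
    img = img + rng.normal(0.0, rng.uniform(0.01, 0.05), size=img.shape)

    return np.clip(img, 0.0, 1.0).astype(np.float32), msk


rng = np.random.default_rng(42)
yy, xx = np.mgrid[:128, :128]
mask = ((yy - 64) ** 2 + (xx - 50) ** 2 <= 15 ** 2).astype(np.uint8)  # one synthetic particle
image = np.where(mask == 1, 0.8, 0.2).astype(np.float32)
aug_image, aug_mask = augment(image, mask, rng)
```

Using the same boundary mode for image and mask matters. If the image is reflected into the corners but the mask is zero-filled, reflected particles appear in the image without labels, which teaches the model to ignore real particles.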

Synthetic Data Generation Techniques

Synthetic generation creates entirely new, annotated data samples from models or simulations.

Table 2: Synthetic Data Generation Methods for Nanocarrier Quantification

| Method | Principle | Data Fidelity | Annotation Cost | Suitability |
|---|---|---|---|---|
| Physics-Based Simulation (e.g., TEM simulator) | Simulates imaging physics (e.g., electron scattering). | High (if calibrated) | Automatic | High-fidelity structural analysis |
| 3D Model Rendering | Renders 3D models of nanocarriers with realistic materials. | Medium-High | Automatic | Morphology & aggregation studies |
| Generative Adversarial Networks (GANs) | A generator network learns the data distribution and produces new samples. | Medium (needs large seed data) | Automatic only if the model generates image-mask pairs (e.g., mask-conditioned GANs); otherwise generated images need annotation | Expanding heterogeneous populations |
| Diffusion Models | Progressive denoising to generate data from noise. | High (needs large seed data) | Automatic only if the model generates image-mask pairs (e.g., mask-conditioned diffusion); otherwise generated images need annotation | Generating high-resolution images |
| Style Transfer | Imposes image "style" (e.g., staining) on synthetic structures. | Medium | Automatic | Domain adaptation (e.g., lab-to-lab variance) |

Experimental Protocols

Protocol 3.1: Physics-Based Synthetic TEM Image Generation

Objective: Generate synthetic TEM images of LNPs for training a segmentation model.

Materials: 3D structural models of LNPs (from MD simulations or idealized shapes), TEM simulation software (e.g., abTEM, TEMUL, or custom MATLAB/Python with CTF models).

Procedure:

- Model Preparation: Define LNP core-shell geometry (core diameter, lipid bilayer thickness) using coordinate files or parametric equations.

- Potential Map Calculation: Convert the structural model into an electrostatic potential map. For lipid membranes, a coarse approximation assigns a constant potential to each region, with a higher value for the electron-dense headgroup layers than for the hydrocarbon tails.

- Microscope Parameter Setting: Configure simulation parameters:

- Acceleration Voltage: 80-120 kV

- Spherical Aberration (Cs): 1.0 mm

- Defocus: -1 to -3 µm (underfocus)

- Pixel Size: 0.5-2.0 Å/pixel

- Dose: 20-40 e⁻/Å²

- Wave Propagation: Use the Multislice algorithm to simulate the interaction of the electron wave with the potential map.

- Contrast Transfer Function (CTF) Application: Apply the CTF to the exit wave to simulate lens aberrations and defocus.

- Noise Injection: Add Poisson noise proportional to the electron dose and Gaussian readout noise to mimic detector noise.

- Dataset Curation: Generate 5,000-50,000 images with randomized parameters (defocus, particle orientation, aggregation state, background impurities). Automatically save corresponding ground truth masks.
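The wave-propagation, CTF, and noise steps can be sketched in NumPy under several simplifying assumptions. A single projection (phase-object approximation) replaces multislice propagation. The particle is an idealized hollow shell whose projected thickness sets the phase shift, with the core treated as matching the surrounding ice. CTF envelope functions (partial coherence, drift) are ignored. The aberration function follows one common sign convention, χ(k) = πλΔf k² + (π/2)C_s λ³ k⁴, in which negative Δf means underfocus, matching the defocus range above. The 0.1 rad peak phase, the readout noise level, and the helper names are hypothetical. abTEM performs full multislice simulations.

```python
from typing import Optional

import numpy as np

H, M0, E, C = 6.62607015e-34, 9.1093837015e-31, 1.602176634e-19, 2.99792458e8


def electron_wavelength_A(voltage_V: float) -> float:
    """Relativistic electron wavelength in angstroms."""
    ev = E * voltage_V
    return float(H / np.sqrt(2.0 * M0 * ev * (1.0 + ev / (2.0 * M0 * C ** 2))) * 1e10)


def hollow_sphere_phase(n, px_A, r_outer_A, r_inner_A, peak_phase=0.1):
    """Hypothetical helper: phase map proportional to the projected shell thickness,
    plus the ground-truth particle mask."""
    c = (np.arange(n) - n / 2) * px_A
    r2 = c[:, None] ** 2 + c[None, :] ** 2
    t = 2.0 * (np.sqrt(np.clip(r_outer_A ** 2 - r2, 0, None))
               - np.sqrt(np.clip(r_inner_A ** 2 - r2, 0, None)))
    return peak_phase * t / t.max(), r2 <= r_outer_A ** 2


def simulate_tem(phase, px_A, voltage_V=120e3, defocus_A=-2e4, cs_A=1e7,
                 dose_e_per_A2=30.0, readout_sigma=2.0,
                 rng: Optional[np.random.Generator] = None):
    """Apply a phase-only CTF to exp(i*phase), then add Poisson shot noise and
    Gaussian readout noise. Returns (noise-free intensity, noisy counts)."""
    if rng is None:
        rng = np.random.default_rng()
    lam = electron_wavelength_A(voltage_V)
    k = np.fft.fftfreq(phase.shape[0], d=px_A)  # spatial frequencies in 1/angstrom
    k2 = k[:, None] ** 2 + k[None, :] ** 2
    chi = np.pi * lam * defocus_A * k2 + 0.5 * np.pi * cs_A * lam ** 3 * k2 ** 2
    exit_wave = np.exp(1j * phase)
    image_wave = np.fft.ifft2(np.fft.fft2(exit_wave) * np.exp(-1j * chi))
    intensity = np.abs(image_wave) ** 2
    expected = intensity * dose_e_per_A2 * px_A ** 2  # electrons per pixel
    counts = rng.poisson(expected).astype(np.float64)
    counts += rng.normal(0.0, readout_sigma, size=counts.shape)
    return intensity, counts


# 30 nm shell with a 4 nm wall, 2 A/pixel, 120 kV, -2 um defocus, Cs = 1 mm, 30 e/A^2.
phase, gt_mask = hollow_sphere_phase(n=256, px_A=2.0, r_outer_A=150.0, r_inner_A=110.0)
intensity, noisy = simulate_tem(phase, px_A=2.0, rng=np.random.default_rng(0))
```

Because both the phase object and the CTF have unit modulus, the mean noise-free intensity stays at 1. The mean of the noisy image therefore equals dose × pixel area (30 × 2² = 120 electrons per pixel), which is a quick sanity check when randomizing dose across a dataset.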

Protocol 3.2: Advanced Augmentation Pipeline for Cryo-EM Particle Stacks

Objective: Augment a limited set of cryo-EM particle images to improve 3D classification and reconstruction.

Materials: Extracted particle image stacks (.mrc/.mrcs) with their alignment metadata (.star files), Relion, CryoSPARC, or custom Python scripts (NumPy, scikit-image, Albumentations).

Procedure:

- Base Data Load: Load 2D particle images and their associated alignment parameters (Euler angles, shifts).

- Label-Preserving Augmentation (On-the-Fly):