Beyond Imaging: How AI Quantifies Nanocarrier Biodistribution for Smarter Drug Delivery

Abstract

This article explores the transformative role of artificial intelligence in precisely quantifying the biodistribution of nanocarriers—a critical bottleneck in nanomedicine development. We first establish the fundamental challenge of tracking nanocarriers in complex biological systems. We then detail current AI methodologies, from image analysis to pharmacokinetic modeling, for analyzing distribution data. The discussion addresses common pitfalls and optimization strategies for data acquisition and algorithm training. Finally, we evaluate the validation of AI models against gold-standard techniques and compare different computational approaches. This guide equips researchers and drug developers with the knowledge to leverage AI for accelerating the design and clinical translation of targeted nanotherapeutics.

The Biodistribution Bottleneck: Why Quantifying Nanocarrier Fate is Crucial for Nanomedicine

Accurately quantifying nanocarrier biodistribution is critical for assessing therapeutic efficacy and safety. The primary challenges stem from biological complexity and technical limitations. The following table summarizes key quantitative hurdles and current methodological detection limits.

Table 1: Key Quantitative Challenges in In Vivo Nanocarrier Tracking

| Challenge Category | Specific Parameter | Typical Range/Issue | Impact on Quantification |

|---|---|---|---|

| Sensitivity & Limit of Detection | Minimum detectable # of particles per gram tissue | 10^9 - 10^12 particles/g (optical methods); 10^6 - 10^8 particles/g (radiometric) | Misses low-efficiency targeting; overestimates clearance. |

| Spatial Resolution | In vivo imaging resolution (macroscopic) | 1-3 mm (MRI, PET); 2-5 mm (Fluorescence/Bioluminescence) | Cannot resolve cellular or subcellular distribution; aggregates appear as single signal. |

| Signal-to-Noise (S/N) Ratio | Background autofluorescence (optical) | Noise can be 50-90% of total signal in deep tissue. | Obscures true nanocarrier signal, leading to false positives. |

| Quantification Linearity | Signal vs. nanocarrier concentration | Nonlinear beyond 10^11 particles/mL due to quenching/absorption. | Requires complex calibration models; absolute quantification unreliable. |

| Temporal Resolution | Time for full-body 3D quantification | Minutes to hours per time point. | Misses rapid pharmacokinetic phases (e.g., initial distribution). |

Core Experimental Protocols for Benchmarking Tracking Modalities

Protocol 2.1: Ex Vivo Gamma Counting for Radiolabeled Nanocarrier Biodistribution (Gold Standard)

This protocol establishes the baseline quantitative dataset for training AI models.

- Nanocarrier Formulation & Radiolabeling: Prepare lipid nanoparticles (LNPs) via microfluidics. Use a lipid-conjugated chelator (e.g., a DOTA-lipid for ^111In or a deferoxamine (DFO)-lipid for ^89Zr) to incorporate the gamma-emitting radioisotope. Purify via size-exclusion chromatography (SEC).

- Dosing & Animal Model: Administer a known dose (e.g., 50 µCi, 1 mg/kg nanocarrier) intravenously to Balb/c mice (n=5 per time point). Maintain under specific pathogen-free (SPF) conditions.

- Tissue Harvest & Processing: Euthanize at predetermined times (e.g., 1, 4, 24, 72h). Perfuse with 10 mL saline. Collect organs of interest (blood, liver, spleen, kidneys, lungs, tumor). Weigh each organ precisely.

- Gamma Counting: Place each tissue in a gamma counter (e.g., PerkinElmer Wizard2). Count radioactivity for each sample for 60 seconds. Use a standard curve of diluted injectate to convert counts per minute (CPM) to percentage of injected dose per gram of tissue (%ID/g).

- Data Analysis: Calculate mean and standard deviation for each organ/time point. This dataset serves as the "ground truth" for validating AI-enhanced imaging analyses.
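The Data Analysis step amounts to grouping readings by organ and time point. A minimal sketch in plain Python follows; the record layout `(organ, time_h, pct_id_per_g)`, the function name `summarize_biodistribution`, and the example readings are assumptions made for illustration, not values from a real study.

```python
from collections import defaultdict
from statistics import mean, stdev


def summarize_biodistribution(records):
    """Group (organ, time_h, pct_id_per_g) records; return n, mean and SD per group."""
    groups = defaultdict(list)
    for organ, time_h, value in records:
        groups[(organ, time_h)].append(value)

    summary = {}
    for key, values in groups.items():
        # Sample SD (n - 1 denominator); undefined when only one animal is available.
        sd = stdev(values) if len(values) > 1 else float("nan")
        summary[key] = {"n": len(values), "mean": mean(values), "sd": sd}
    return summary


# Hypothetical %ID/g readings for n = 5 mice (illustration only).
records = [("liver", 24, v) for v in (18.0, 20.0, 22.0, 19.0, 21.0)]
summary = summarize_biodistribution(records)
print(summary[("liver", 24)])
```

Keeping the per-animal values alongside the summary is usually worthwhile, since a model trained later may need the spread and not only the mean.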

Protocol 2.2: Fluorescent Imaging-Based Biodistribution with Spectral Unmixing

This protocol details steps to improve quantitative accuracy for optical imaging, a common but noisy modality.

- Dual-Labeled Nanocarrier Preparation: Formulate polymeric NPs (e.g., PLGA) encapsulating a near-infrared (NIR) dye (e.g., DiR, emission 790 nm) and a reference fluorophore (e.g., Cy5.5, emission 710 nm) for ratiometric analysis.

- In Vivo Imaging: Anesthetize mice and image using a calibrated IVIS Spectrum or similar system at each time point post-injection. Acquire images at multiple excitation/emission filters to capture full spectral signatures.

- Spectral Unmixing: Using instrument software (e.g., Living Image), acquire an autofluorescence reference spectrum from a non-injected mouse. Apply linear unmixing algorithms to separate the DiR, Cy5.5, and autofluorescence signals in each pixel.

- Region-of-Interest (ROI) Analysis: Draw ROIs over organs based on a white light reference image. Quantify total radiant efficiency ([p/s] / [µW/cm²]) for the unmixed DiR signal in each ROI.

- Calibration to Absolute Amount: Sacrifice a subset of animals, harvest organs, and homogenize. Use a plate reader to measure extracted fluorescence against a standard curve of known NP concentrations. Create a correlation model between in vivo ROI signal and ex vivo absolute quantity.
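The linear unmixing in the Spectral Unmixing step can be illustrated per pixel as an ordinary least-squares problem: the measured spectrum is modeled as a weighted sum of reference spectra, and the weights are the component abundances. The sketch below is plain Python and is not the algorithm used by any particular instrument software. The reference spectra, the four filter channels, and the function names are hypothetical, and real pipelines often add a non-negativity constraint that is omitted here.

```python
def solve_linear_system(a, b):
    """Solve a small square system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def unmix_pixel(measured, reference_spectra):
    """Unconstrained least-squares abundances for one pixel.

    measured: intensity per filter channel.
    reference_spectra: one spectrum per component, same length as measured.
    """
    k = len(reference_spectra)
    # Normal equations (S S^T) a = S y, where the rows of S are the reference spectra.
    gram = [[sum(p * q for p, q in zip(reference_spectra[i], reference_spectra[j]))
             for j in range(k)] for i in range(k)]
    rhs = [sum(p * y for p, y in zip(reference_spectra[i], measured)) for i in range(k)]
    return solve_linear_system(gram, rhs)


# Hypothetical normalized spectra over four emission filters (illustration only).
dir_ref = [0.05, 0.15, 0.50, 1.00]
cy55_ref = [0.60, 1.00, 0.40, 0.10]
auto_ref = [1.00, 0.70, 0.45, 0.30]

# Synthetic pixel built from known abundances, so the recovery can be checked.
true_abundances = [2.0, 1.0, 0.5]
pixel = [2.0 * d + 1.0 * c + 0.5 * a for d, c, a in zip(dir_ref, cy55_ref, auto_ref)]
abundances = unmix_pixel(pixel, [dir_ref, cy55_ref, auto_ref])
print(abundances)
```

The solve fails with a division by zero if two reference spectra are proportional to each other, which mirrors the practical requirement that the fluorophores and autofluorescence have distinguishable spectral signatures.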

AI-Enhanced Workflow for Integrating Multi-Modal Data

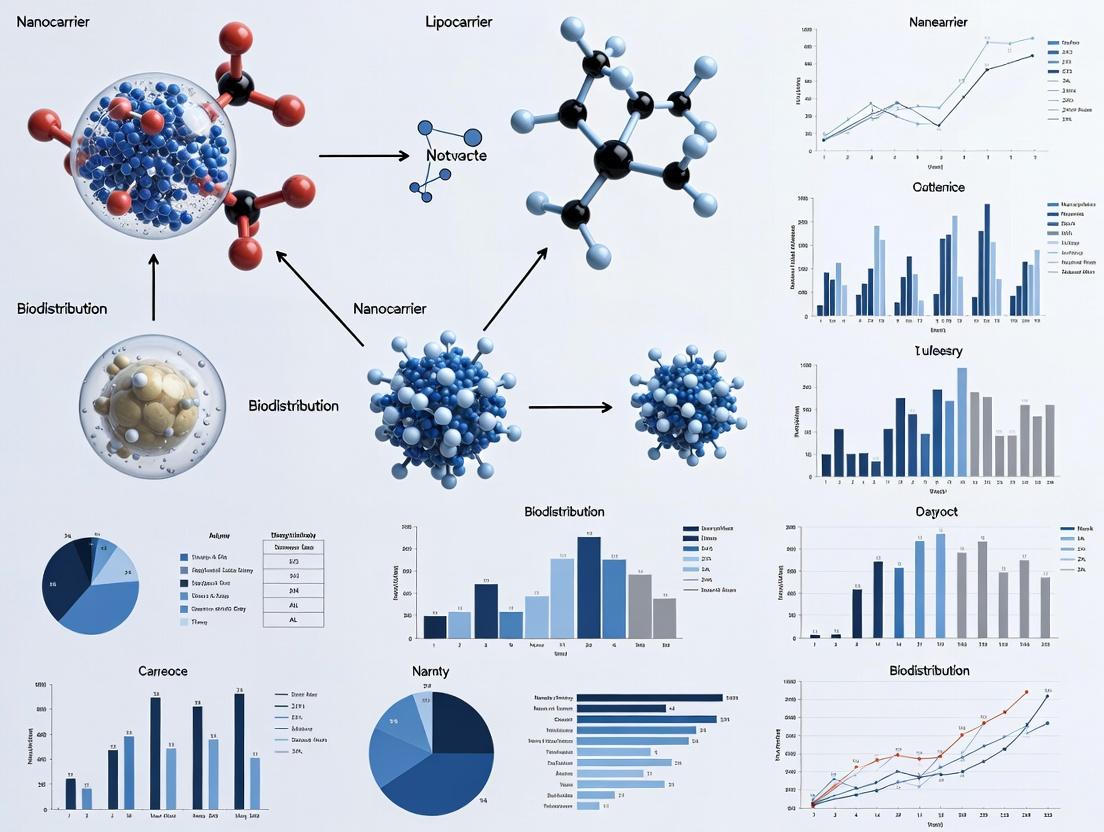

Diagram 1: AI-Powered Multi-Modal Data Integration Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Materials for Advanced Nanocarrier Tracking

| Item | Function & Relevance to Quantification |

|---|---|

| Near-Infrared (NIR) Fluorophores (e.g., IRDye 800CW, DiR) | Emit light in the 750-900 nm range where tissue autofluorescence is lower, improving signal-to-noise ratio for optical imaging. |

| Long-Lived Radioisotopes (e.g., ^89Zr, t1/2=78.4h; ^64Cu, t1/2=12.7h) | Allow tracking over several days to match nanocarrier pharmacokinetics, enabling quantitative PET imaging and ex vivo counting. |

| Cherenkov Luminescence Reporters (e.g., ^18F, ^68Ga) | Enable optical imaging of radiolabeled nanocarriers using standard IVIS systems without fluorescence, correlating optical and nuclear signals. |

| Matrix Metalloproteinase (MMP)-Cleavable Peptide Linkers | Used in activatable "smart" probes. Fluorescence stays quenched until the linker is cleaved by target-tissue enzymes, reducing background. |

| Lanthanide-Doped Upconversion Nanoparticles (UCNPs) | Convert NIR light to visible emissions, largely avoiding autofluorescence and allowing deep-tissue quantitative imaging with very low background. |

| Size-Exclusion Chromatography (SEC) Columns (e.g., Sepharose CL-4B) | Critical for purifying labeled nanocarriers from unincorporated dyes or radioisotopes, ensuring accurate dosing and interpretation. |

| Tissue Homogenization Kits (e.g., with protease inhibitors) | For complete lysis of organs to extract nanocarriers/labels for absolute quantitative validation via HPLC, plate reader, or mass spec. |

| Phantom Materials (e.g., Intralipid solutions, tissue-mimicking gels) | Used to create calibration curves that simulate light scattering in tissue, essential for converting optical signals to quantitative concentrations. |

Within the paradigm of AI-based quantification for nanocarrier biodistribution research, a critical evaluation of traditional analytical techniques is essential. These methods, while foundational, present significant limitations in specificity, sensitivity, spatial resolution, and data richness, which constrain the development of predictive pharmacokinetic models. This document details the procedural and quantitative limitations of gamma counting and fluorescence imaging, providing detailed protocols and a comparative analysis to underscore the necessity for advanced, AI-integrated analytical platforms.

Gamma Counting: Protocol and Limitations

Experimental Protocol: Ex-Vivo Tissue Gamma Counting for Radiolabeled Nanocarriers

Objective: To quantify the percentage of injected dose (%ID) of a radiolabeled nanocarrier (e.g., with ^99mTc, ^111In, ^125I) accumulated in various organs at a predetermined time point post-administration.

Materials & Reagents:

- Radiolabeled Nanocarrier: Nanocarrier conjugated with a gamma-emitting radioisotope.

- Animal Model: Typically mice or rats.

- Gamma Scintillation Counter: Equipped with appropriate energy windows for the isotope.

- Tissue Digest Solution: Soluene-350 or similar tissue solubilizer.

- Scintillation Cocktail: For homogeneous counting of digested tissues.

- Counting Vials & Disposables.

- Dose Standard: A known aliquot of the injected dose for calibration.

Procedure:

- Administration: Inject a known activity (e.g., 5 µCi/animal) of the radiolabeled nanocarrier via the intended route (e.g., intravenous).

- Termination & Dissection: At the terminal time point (e.g., 24h), euthanize the animal. Perfuse with saline via cardiac puncture to clear blood from organs. Dissect and weigh all organs of interest (liver, spleen, kidneys, heart, lungs, tumor, etc.).

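- Tissue Processing: Place each whole organ or a representative portion (e.g., 100 mg) into a pre-weighed scintillation vial. Intact tissue can be counted directly in a gamma counter; digestion is needed only when the same samples will also be read by liquid scintillation. In that case, add 1 mL of tissue digest solution and incubate at 50°C with agitation until fully dissolved (typically 24-48 hours).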

- Neutralization & Cocktail Addition: Cool vials. Add 100 µL of glacial acetic acid to neutralize the digest. Add 10 mL of appropriate scintillation cocktail. Vortex thoroughly.

- Gamma Counting: Count each sample in the gamma counter using a pre-defined energy window for the isotope. Count the prepared Dose Standard (a 1:100 or 1:1000 dilution of the injected dose) under identical conditions.

- Calculation:

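- Correct all counts for background and isotopic decay.

- %ID/organ = (Counts in organ / (Counts in Dose Standard × Dilution Factor of Standard)) × 100, where the dilution factor is 100 for a 1:100 dilution. If only a portion of the organ was counted, first scale its counts by (whole-organ weight / counted-portion weight).

- %ID/g = %ID/organ / weight of organ (g).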
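As a worked illustration of the calculation, the sketch below converts raw counts to %ID/g. It assumes the dilution factor is expressed as a number greater than 1 (100 for a 1:100 dilution), so that the standard's net counts multiplied by the dilution factor estimate the total injected counts. The function names and example numbers are hypothetical.

```python
import math


def decay_correct(counts, elapsed_h, half_life_h):
    """Correct measured counts back to a reference time (e.g. the time of injection)."""
    return counts * math.exp(math.log(2) * elapsed_h / half_life_h)


def percent_id_per_gram(sample_cpm, background_cpm, standard_cpm,
                        dilution_factor, sample_weight_g):
    """%ID/g for one tissue sample counted alongside a diluted dose standard."""
    net_sample = sample_cpm - background_cpm
    net_standard = standard_cpm - background_cpm
    # Net counts of the standard scaled up to the full injected dose.
    injected_cpm = net_standard * dilution_factor
    percent_id = 100.0 * net_sample / injected_cpm
    return percent_id / sample_weight_g


# Hypothetical reading: 0.5 g of liver, 1:100 dose standard, 50 CPM background.
value = percent_id_per_gram(sample_cpm=10050.0, background_cpm=50.0,
                            standard_cpm=1050.0, dilution_factor=100.0,
                            sample_weight_g=0.5)
print(value)  # 20.0 %ID/g
```

When the tissue samples and the dose standard are counted in the same session, decay affects both equally and cancels in the ratio; `decay_correct` matters when they are counted at different times.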

Limitations Summary (Table 1):

Table 1: Quantitative Limitations of Gamma Counting

| Parameter | Typical Performance | Limitation Impact |

|---|---|---|

| Spatial Resolution | None (Whole-organ homogenate) | No intra-organ distribution data. Cannot differentiate perivascular vs. deep tissue penetration. |

| Signal Specificity | Moderate | Measures total radioactivity; cannot distinguish intact nanocarrier from free radioisotope or metabolic fragments without coupled chromatography. |

| Multiplexing Capacity | Low | Typically limited to 2-3 isotopes with non-overlapping energy peaks (e.g., ^111In & ^125I). |

| Temporal Resolution | Terminal (Single time point per animal) | Requires large cohort sizes for pharmacokinetic curves, increasing variability and cost. |

| Data Dimensionality | 1D (Scalar %ID/g value) | Provides no contextual morphological or cellular data. Insufficient for complex AI model training. |

Diagram: Gamma Counting Workflow & Key Limitations

Fluorescence Imaging: Protocol and Limitations

Experimental Protocol: In Vivo Fluorescence Imaging (IVIS) of Fluorophore-Labeled Nanocarriers

Objective: To non-invasively monitor the real-time whole-body distribution and relative accumulation of a fluorescently labeled nanocarrier (e.g., with Cy5.5, ICG, DiR) over time.

Materials & Reagents:

- Fluorophore-Labeled Nanocarrier: Nanocarrier conjugated with a near-infrared (NIR) fluorophore.

- Animal Model: Typically hairless immunodeficient (nude) mice, which accept xenograft tumors and avoid signal attenuation and scattering by fur.

- In Vivo Imaging System (IVIS): Equipped with appropriate excitation/emission filters.

- Anesthesia Setup: Isoflurane vaporizer and induction chamber.

- Depilatory Cream: For hair removal to reduce signal attenuation.

- Imaging Black Box.

- Fluorescence Reference Standard.

Procedure:

- Animal Preparation: Shave or depilate the animal 24 hours prior to imaging. Fast for 4-6 hours to reduce gut autofluorescence.

- System Calibration: Power on the IVIS and allow lamps to warm up. Set appropriate imaging parameters: exposure time (auto or fixed), binning, f/stop, and select filter sets (e.g., 745nm ex / 820nm em for DiR).

- Baseline Imaging: Anesthetize the animal with isoflurane (2-3% induction, 1-2% maintenance). Place the animal in the imaging chamber in a prone position. Acquire a baseline pre-injection image.

- Administration & Imaging: Inject the fluorescent nanocarrier (e.g., 2 nmol of fluorophore per mouse) via tail vein. Return the animal to the imaging chamber. Acquire serial images at defined time points (e.g., 5 min, 1h, 4h, 24h). Maintain consistent animal positioning and anesthesia depth.

- Ex Vivo Imaging: At the final time point, euthanize the animal, dissect organs, and image them ex vivo under the same settings for quantitative organ-level data.

- Data Analysis: Use imaging software (e.g., Living Image) to define regions of interest (ROIs) over organs/tumor. Quantify signal as Total Radiant Efficiency ([p/s]/[µW/cm²]). Subtract background from a similar ROI on a control animal or pre-injection image.

Limitations Summary (Table 2):

Table 2: Quantitative Limitations of Planar Fluorescence Imaging

| Parameter | Typical Performance | Limitation Impact |

|---|---|---|

| Penetration Depth | < 1 cm (for NIR) | Signal is heavily attenuated in deep tissues. Obscures quantification in large animals or deep-seated organs. |

| Spatial Resolution | 1-3 mm (In Vivo) | Cannot resolve cellular or sub-cellular localization. |

| Quantitative Accuracy | Low to Moderate | Signal is non-linear and affected by tissue absorption, scattering, and quenching. Difficult to calibrate to absolute nanocarrier mass. |

| Multiplexing Capacity | Moderate (Spectral Unmixing) | Limited by broad emission spectra and crosstalk. Typically 2-3 fluorophores. |

| Background & Autofluorescence | High | Tissue autofluorescence (especially in green spectrum) creates noise, reducing signal-to-noise ratio. |

Diagram: Fluorescence Imaging Signal Path & Artifacts

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents for Traditional Biodistribution Studies

| Reagent/Material | Primary Function | Key Consideration for Limitation |

|---|---|---|

| ^125I or ^111In Isotopes | Gamma-emitting labels for long-term tissue tracking. | Requires specialized licensing, generates radioactive waste, and label instability can confound data. |

| Cyanine Dyes (Cy5.5, DiR) | NIR fluorophores for in vivo optical imaging. | Prone to photobleaching; fluorescence is environment-sensitive (quenching in acidic organelles like lysosomes). |

| Tissue Solubilizer (Soluene) | Digests whole organs for homogeneous gamma counting. | Destroys all spatial information. Harsh chemicals preclude subsequent analysis on the same sample. |

| Isoflurane Anesthetic | Maintains animal immobility for longitudinal imaging. | Can alter cardiovascular physiology, indirectly affecting nanocarrier pharmacokinetics. |

| Matrigel | Used for subcutaneous tumor cell implantation. | Introduces variability in tumor model morphology and vasculature, impacting nanocarrier EPR effect. |

| Phosphate Buffered Saline (PBS) | Standard vehicle for nanocarrier formulation and injection. | Lack of biological proteins may cause aggregation upon injection, altering biodistribution versus clinical formulations. |

In AI-based quantification for nanocarrier biodistribution research, "AI" encompasses specific, distinct computational methodologies. This document clarifies the core concepts of Machine Learning (ML) and Deep Learning (DL), framing them within the workflow of quantifying nanocarrier localization and concentration from complex biological imaging data.

Conceptual Definitions & Application Scope

Machine Learning (ML)

ML involves algorithms that parse data, learn from that data, and then apply learned patterns to make informed decisions or predictions. In biodistribution studies, traditional ML often requires manual feature engineering—researchers define relevant quantifiable characteristics (features) from data, such as particle size, shape, or intensity statistics from microscopy images, which the algorithm then uses for classification or regression.

Primary Applications in Biodistribution:

- Classification: Categorizing nanocarriers as "in tumor" vs. "in liver" based on extracted tissue texture features.

- Regression: Predicting organ-specific accumulation levels from physicochemical nanocarrier properties.

- Clustering: Identifying distinct biodistribution patterns across a cohort without pre-defined labels.

Deep Learning (DL)

DL is a subset of ML based on artificial neural networks with multiple layers (deep architectures). These models automatically learn hierarchical feature representations directly from raw data (e.g., entire images or spectral sequences), eliminating the need for manual feature engineering.

Primary Applications in Biodistribution:

- Semantic Segmentation: Pixel-wise labeling of whole-slide histological or MRI images to identify nanocarrier locations.

- Object Detection: Counting and localizing individual nanocarriers in complex tissue backgrounds.

- Image Regression: Directly predicting a pharmacokinetic parameter (like AUC) from a time-series of imaging data.

Table 1: ML vs. DL for AI-Based Biodistribution Quantification

| Aspect | Machine Learning (ML) | Deep Learning (DL) |

|---|---|---|

| Data Dependency | Effective with smaller datasets (100s-1000s of samples). | Requires very large datasets (1000s-millions of samples). |

| Feature Engineering | Mandatory. Domain expertise required to define and extract relevant features. | Automatic. Models learn optimal features from raw data. |

| Interpretability | Generally higher; model decisions can often be traced to specific features. | Often a "black box"; complex to interpret why a specific decision was made. |

| Computational Load | Lower; can often be run on high-performance CPUs. | Very high; typically requires GPUs/TPUs for training. |

| Typical Input Data | Tabular data of extracted features, summarized statistics. | Raw, high-dimensional data (images, spectra, time-series signals). |

| Example Model Types | Random Forest, Support Vector Machines (SVM), Gradient Boosting. | Convolutional Neural Networks (CNN), U-Nets, Vision Transformers. |

Experimental Protocols for AI-Based Quantification

Protocol: ML Workflow for Organ-Specific Accumulation Prediction

Aim: Predict percentage of injected dose (%ID) in the liver from nanocarrier zeta potential and hydrodynamic diameter.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Data Preparation:

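- For N nanocarrier formulations, measure zeta potential (mV) and hydrodynamic diameter (nm). Standardize each feature to zero mean and unit variance using the mean and standard deviation of the training set only (computed after the split described below), so that no test-set information leaks into training.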

- Quantify in vivo %ID in the liver via ICP-MS or fluorescence imaging at a fixed time point (e.g., 24h post-injection).

- Feature-Target Pairing: Create a dataset where each row is a formulation, with columns Zeta_Potential, Diameter, and %ID_Liver.

- Model Training & Validation:

- Split data 80/20 into training and held-out test sets.

- On the training set, train a Support Vector Regressor (SVR) using a radial basis function (RBF) kernel. Optimize hyperparameters (C, gamma) via 5-fold cross-validation.

- Apply the optimized model to the test set to generate predictions.

- Quantification & Analysis:

- Calculate the Root Mean Square Error (RMSE) and R² score between predicted and actual %ID on the test set.

- Use the trained model to predict %ID for new, untested formulation properties.
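Two parts of this workflow are easy to get subtly wrong: standardizing features without leaking test-set statistics, and computing the test-set metrics. The sketch below covers only those two parts in plain Python. The SVR itself would come from a machine learning library and is not shown; the function names and example values are assumptions for illustration.

```python
import math


def fit_standardizer(train_rows):
    """Return per-feature (mean, sd) computed from the training rows only."""
    n = len(train_rows)
    stats = []
    for column in zip(*train_rows):
        mu = sum(column) / n
        # Population SD (divide by n); a constant feature falls back to sd = 1.
        sd = math.sqrt(sum((x - mu) ** 2 for x in column) / n)
        stats.append((mu, sd if sd > 0 else 1.0))
    return stats


def standardize(rows, stats):
    """Apply training-set statistics to any rows (training, test, or new formulations)."""
    return [[(x - mu) / sd for x, (mu, sd) in zip(row, stats)] for row in rows]


def rmse(actual, predicted):
    """Root mean square error between measured and predicted values."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


def r2_score(actual, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_actual = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    return 1.0 - ss_res / ss_tot


# Hypothetical rows of [zeta potential (mV), hydrodynamic diameter (nm)].
train_features = [[-30.0, 80.0], [-10.0, 120.0]]
test_features = [[0.0, 140.0]]

stats = fit_standardizer(train_features)           # fitted on training data only
test_scaled = standardize(test_features, stats)    # [[2.0, 2.0]]

# Hypothetical measured vs. predicted liver %ID on a test set.
print(rmse([10.0, 20.0, 30.0], [12.0, 18.0, 30.0]))
print(r2_score([10.0, 20.0, 30.0], [12.0, 18.0, 30.0]))
```

The same `stats` object should be reused when predicting for new formulations, so that new inputs are scaled exactly as the training data were.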

Protocol: DL Workflow for Automated Nanocarrier Segmentation in Histology

Aim: Automatically segment and quantify fluorescently-labeled nanocarriers within tumor tissue sections.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Dataset Curation:

- Acquire high-resolution fluorescent microscopy images of tumor sections from animals treated with labeled nanocarriers.

- Manually annotate (label) pixels corresponding to nanocarriers to create ground truth masks. Requires ~1000s of annotated image patches.

- Model Architecture & Training:

- Implement a U-Net convolutional neural network architecture.

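- Split image data into training (70%), validation (15%), and test (15%) sets. Split at the animal or slide level so that patches from the same section never appear in more than one set. Apply data augmentation (rotation, flipping) to training images.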

- Train the model using the Dice loss function and the Adam optimizer to minimize loss on the training set. Monitor performance on the validation set.

- Quantification & Analysis:

- Apply the trained model to the held-out test set images to generate segmentation masks.

- Calculate performance metrics: Dice Coefficient (F1 score) and Intersection over Union (IoU) comparing predicted vs. ground truth masks.

- Use the model to process new images. The output mask allows direct quantification of nanocarrier area (%) and particle count (via connected components analysis).
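The evaluation metrics and the mask-based quantification in the last step can be sketched on small binary masks. This is a plain-Python illustration under stated assumptions: masks are nested lists of 0/1 values, connected components use 4-connectivity, and the function names and the 4×4 example masks are hypothetical. Production pipelines would normally use an array library for speed.

```python
from collections import deque


def dice_and_iou(pred, truth):
    """Dice coefficient and Intersection over Union for two binary masks."""
    tp = fp = fn = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p and t:
                tp += 1
            elif p:
                fp += 1
            elif t:
                fn += 1
    if tp + fp + fn == 0:
        return 1.0, 1.0  # both masks empty: treated here as perfect agreement
    return 2 * tp / (2 * tp + fp + fn), tp / (tp + fp + fn)


def count_particles(mask):
    """Count connected foreground regions (4-connectivity) with breadth-first search."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    count = 0
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            count += 1
            seen[r][c] = True
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and mask[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
    return count


def area_percent(mask):
    """Foreground pixels as a percentage of all pixels in the mask."""
    total = sum(len(row) for row in mask)
    return 100.0 * sum(1 for row in mask for v in row if v) / total


truth = [[1, 1, 0, 0],
         [1, 1, 0, 0],
         [0, 0, 0, 1],
         [0, 0, 0, 1]]
pred = [[1, 1, 0, 0],
        [1, 0, 0, 0],
        [0, 0, 0, 1],
        [0, 0, 1, 1]]

dice, iou = dice_and_iou(pred, truth)
print(dice, iou, count_particles(pred), area_percent(pred))
```

Note that a connected-component count treats touching or aggregated nanocarriers as one object, so the particle count is a lower bound wherever clusters are present.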

Visualizing Methodological Pathways & Workflows

Diagram: ML Workflow with Feature Engineering

Diagram: DL End-to-End Learning Workflow

Diagram: Decision Flow: ML vs. DL Selection

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for AI-Based Biodistribution Quantification Experiments

| Item | Function in Context | Example/Note |

|---|---|---|

| Fluorescently-Labeled Nanocarriers | Enables visualization and pixel-wise annotation for DL segmentation tasks. | Cy5.5, DiR, or quantum dot labels for in vivo imaging. |

| Inductively Coupled Plasma Mass Spectrometry (ICP-MS) | Provides gold-standard quantitative elemental data (e.g., Au, Si) for organ-level biodistribution, used as ground truth for ML regression models. | Critical for validating imaging-based AI predictions. |

| High-Resolution Whole-Slide Scanner | Digitizes tissue sections for high-throughput, quantitative analysis, creating the raw image dataset for DL models. | Enables creation of large-scale training datasets. |

| Image Annotation Software | Allows researchers to generate pixel-accurate ground truth labels (masks) for training supervised DL models. | e.g., QuPath, ImageJ, commercial platforms. |

| Cloud GPU/TPU Compute Credits | Provides the necessary computational infrastructure for training complex DL models, which is often beyond typical local server capacity. | e.g., AWS, GCP, Azure credits. |

| Automated Tissue Processing Systems | Increases throughput and consistency of sample preparation for imaging, reducing noise and variability in the input data for AI models. | Standardizes the "raw data" generation step. |

| Curated Public Datasets | Pre-existing, labeled imaging datasets (e.g., from similar studies) can be used for transfer learning, reducing the need for massive private data collection. | Useful for initial model pretraining. |

Within AI-based quantification for nanocarrier biodistribution research, three pharmacokinetic parameters are paramount: Area Under the Curve (AUC), Tumor Accumulation (%ID/g), and Clearance Rates. These metrics provide a quantitative foundation for training and validating machine learning models that predict in vivo performance. Accurate measurement of these parameters is critical for optimizing nanocarrier design and accelerating oncological drug development.

Core Parameter Definitions & Data Synthesis

| Parameter | Full Name | Typical Measurement Method | Key Interpretation in Nanocarrier Research | Representative Value Range (Literature) |

|---|---|---|---|---|

| AUC | Area Under the Curve | Non-compartmental analysis of plasma concentration vs. time data. | Total systemic exposure to the nanocarrier or its payload. Reflects bioavailability and circulation longevity. | 50-500 µg·h/mL (varies widely with formulation) |

| %ID/g | Percent Injected Dose per gram of tissue | Ex vivo gamma counting, fluorescence imaging, or LC-MS of homogenized tissue at terminal time points. | Targeting efficiency and specific localization in the tumor microenvironment. | 1-10 %ID/g for targeted nanocarriers at peak accumulation (24-72h). |

| Clearance Rate | Systemic Clearance (CL) or Elimination Rate Constant (Ke) | Pharmacokinetic modeling from serial blood sampling. | Rate of removal from systemic circulation (total body clearance) or rate constant from terminal phase. | CL: 0.1-1.0 mL/h for long-circulating particles; Ke: 0.05-0.3 h⁻¹. |

Experimental Protocols

Protocol 1: Determining AUC and Clearance from Blood Pharmacokinetics

Objective: Quantify systemic exposure (AUC) and clearance (CL) of a radiolabeled or fluorescently tagged nanocarrier.

Materials:

- Radiolabeled (e.g., ⁹⁹ᵐTc, ¹¹¹In, ⁶⁴Cu) or dye-loaded (e.g., DiR, Cy5.5) nanocarrier.

- Animal model (e.g., tumor-bearing mouse).

- Serial blood collection system (e.g., retro-orbital or submandibular).

- Gamma counter, fluorescence plate reader, or LC-MS/MS.

- Pharmacokinetic analysis software (e.g., PK Solver, WinNonlin).

Procedure:

- Administer nanocarrier via intravenous injection at a standardized dose (e.g., 5 mg/kg, 100 µCi).

- Collect blood samples (20-30 µL) at pre-determined time points (e.g., 2 min, 15 min, 1h, 4h, 24h, 48h, 72h).

- Process samples: Weigh, lyse, and measure radioactivity/fluorescence intensity.

- Convert signals to concentration values (µg/mL or %ID/mL).

- Plot plasma concentration (%ID/mL) vs. time.

- AUC Calculation: Use the trapezoidal rule to calculate AUC from zero to the last measured time point (AUC₀–t). Extrapolate to infinity (AUC₀–∞) by adding Ct/Ke, where Ct is the last concentration and Ke is the terminal elimination rate constant.

- Clearance Calculation: Calculate total systemic clearance using CL = Dose / AUC₀–∞.
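The AUC and clearance steps can be sketched as a small non-compartmental calculation. The example below is a plain-Python illustration, not a replacement for validated PK software. It assumes a linear trapezoidal rule, estimates Ke by log-linear regression over the last three samples, and ignores any area before the first sample. The function names and the synthetic mono-exponential data are hypothetical.

```python
import math


def auc_trapezoid(times_h, concs):
    """Linear trapezoidal AUC from the first to the last sampled time point."""
    pairs = list(zip(times_h, concs))
    return sum((t2 - t1) * (c1 + c2) / 2.0
               for (t1, c1), (t2, c2) in zip(pairs, pairs[1:]))


def terminal_rate_constant(times_h, concs, n_points=3):
    """Ke as the negative slope of ln(concentration) vs. time over the last n_points."""
    xs = times_h[-n_points:]
    ys = [math.log(c) for c in concs[-n_points:]]
    x_mean = sum(xs) / n_points
    y_mean = sum(ys) / n_points
    slope = (sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
             / sum((x - x_mean) ** 2 for x in xs))
    return -slope


def noncompartmental_summary(times_h, concs, dose, n_terminal=3):
    """AUC(0-t), Ke, AUC(0-inf) = AUC(0-t) + Ct/Ke, and CL = Dose / AUC(0-inf)."""
    auc_0_t = auc_trapezoid(times_h, concs)
    ke = terminal_rate_constant(times_h, concs, n_terminal)
    auc_0_inf = auc_0_t + concs[-1] / ke
    return {"auc_0_t": auc_0_t, "ke": ke, "auc_0_inf": auc_0_inf,
            "cl": dose / auc_0_inf}


# Synthetic data: C(t) = 100 * exp(-0.05 t) in ug/mL, so the true Ke is 0.05 per hour.
times_h = [0.0, 1.0, 4.0, 24.0, 48.0, 72.0]
concs = [100.0 * math.exp(-0.05 * t) for t in times_h]
result = noncompartmental_summary(times_h, concs, dose=100.0)  # dose in ug -> CL in mL/h
print(result)
```

With sparse sampling the linear trapezoidal rule overestimates the area under a declining exponential, so the reported AUC here is somewhat above the analytical value of 2000 µg·h/mL; denser early sampling or a log-linear trapezoid reduces that bias.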

Protocol 2: Quantifying Tumor Accumulation (%ID/g)

Objective: Precisely measure the amount of nanocarrier localized in the tumor and major organs at a terminal time point.

Materials:

- Dosed animals from Protocol 1.

- Dissection tools.

- Tissue homogenizer.

- Pre-weighed scintillation vials or microcentrifuge tubes.

- Balance and gamma counter/fluorescence spectrometer.

Procedure:

- At a selected time point post-injection (e.g., 24h or 48h), euthanize animals humanely.

- Excise tumor and relevant organs (liver, spleen, kidneys, heart, lung).

- Weigh each tissue sample accurately.

- Homogenize tissues in a known volume of appropriate buffer (e.g., PBS, 1% Triton X-100).

- For radiolabels: Count homogenate aliquots in a gamma counter alongside a diluted standard of the injected dose.

- For fluorescence: Measure fluorescence intensity in homogenate supernatants and compare to a standard curve.

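- Calculation: %ID/g = (Activity or signal in tissue sample / Total injected activity or signal) × 100 / Weight of tissue sample (g). When only an aliquot of the homogenate is measured, scale the aliquot reading to the full homogenate volume first.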

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Biodistribution Studies |

|---|---|

| Near-Infrared (NIR) Fluorophores (e.g., DiR, Cy7) | Enables in vivo longitudinal imaging and ex vivo tissue quantification with low background autofluorescence. |

| Chelators for Radiometals (e.g., DOTA, NOTA) | Covalently linked to nanocarriers to enable stable binding of diagnostic radionuclides (⁶⁴Cu, ¹¹¹In) for quantitative SPECT/PET and gamma counting. |

| Fluorescence Microsphere Standards | Used for calibration and normalization of fluorescence imaging systems to ensure quantitative accuracy across experiments. |

| ICP-MS Standard Solutions | Essential for quantifying inorganic nanoparticle components (e.g., Au, Si) in tissue digests via Inductively Coupled Plasma Mass Spectrometry. |

| Perfusion Buffer (e.g., 1x PBS) | Used for vascular perfusion prior to tissue harvest to remove blood-pool signal, isolating specifically accumulated nanocarriers. |

Visualizing Data Integration for AI Modeling

Diagram: AI-Driven Prediction of Nanocarrier Pharmacokinetics

Diagram: Workflow for AUC and Clearance Determination

Diagram: Protocol for Ex Vivo %ID/g Quantification

In the development of nanocarrier-based therapeutics, pharmacokinetic/pharmacodynamic (PK/PD) models are indispensable for predicting efficacy and toxicity. However, their predictive accuracy is fundamentally constrained by the quality and granularity of the biodistribution data used to parameterize them. This application note argues that comprehensive, spatially-resolved biodistribution data is not merely an input but the foundational scaffold for reliable PK/PD modeling, especially within the emerging paradigm of AI-based quantification. AI and machine learning (ML) models can identify complex, non-linear relationships between nanocarrier properties, in vivo behavior, and pharmacological outcomes, but they are profoundly "garbage-in, garbage-out" systems. Without high-fidelity biodistribution data across multiple organs, cell types, and time points, even the most sophisticated AI-driven PK/PD model will fail.

The following tables consolidate critical quantitative metrics derived from recent literature on nanocarrier biodistribution, which are essential for populating PK/PD models.

Table 1: Typical Biodistribution Profile of Common Nanocarriers (% Injected Dose per Gram of Tissue, 24h Post-IV Administration)

| Nanocarrier Type | Liver | Spleen | Kidneys | Tumor | Lungs | Blood | Primary PK Model Used |

|---|---|---|---|---|---|---|---|

| PEGylated Liposome (100nm) | 15-25% ID/g | 5-10% ID/g | 2-5% ID/g | 3-8% ID/g* | 1-3% ID/g | 10-15% ID/g | Two-compartment with RES uptake |

| Polymeric NP (PLGA, 80nm) | 30-50% ID/g | 8-15% ID/g | 3-7% ID/g | 1-5% ID/g* | 2-5% ID/g | 2-5% ID/g | Physiologically-based PK (PBPK) |

| Lipid Nanoparticle (LNP) | 40-60% ID/g | 10-20% ID/g | 1-3% ID/g | 0.5-2% ID/g | 1-4% ID/g | 1-4% ID/g | PBPK with hepatocyte-specific uptake |

| Mesoporous Silica NP (MSN) | 20-35% ID/g | 10-18% ID/g | 5-12% ID/g | 2-6% ID/g* | 3-8% ID/g | <2% ID/g | Non-compartmental analysis (NCA) |

| Peptide-Conjugated NP | 10-20% ID/g | 3-8% ID/g | 4-9% ID/g | 8-15% ID/g* | 1-3% ID/g | 5-10% ID/g | Target-mediated drug disposition (TMDD) |

*Tumor accumulation is highly dependent on the Enhanced Permeability and Retention (EPR) effect and active targeting.

Table 2: Key Rate Constants Derived from Biodistribution Data for PBPK Modeling

| Parameter | Symbol | Typical Range (for 100nm NP) | Source Experiment | Impact on PD Endpoint |

|---|---|---|---|---|

| Systemic Clearance | CL | 0.1 - 0.5 mL/h | Blood PK profile | Directly impacts systemic exposure & efficacy |

| RES Uptake Rate (Liver) | Kup,liver | 0.05 - 0.3 h⁻¹ | Dynamic quantitative imaging | Governs elimination and potential hepatotoxicity |

| Tumor Extravasation Rate | Kextra,tumor | 0.01 - 0.05 h⁻¹ | Tumor PK vs. Plasma PK | Critical for predicting intratumoral drug levels |

| Interstitial Diffusion Coefficient | Dint | 0.1 - 1.0 μm²/s | FRAP or similar in tissue slices | Determines penetration depth from vasculature |

| Cell Internalization Rate | Kint | 0.001 - 0.02 h⁻¹ | In vitro cell uptake + in vivo validation | Links carrier biodistribution to intracellular drug release |
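To show how first-order rate constants like those in Table 2 enter a model, the sketch below integrates a deliberately simplified linear compartment scheme. It is far simpler than a PBPK model, and every structural choice is an assumption made for illustration: blood is the only source compartment, transfers to liver and tumor are irreversible, all other losses are lumped into one hypothetical rate constant `k_other`, and amounts are tracked as fractions of the injected dose.

```python
import math


def simulate_compartments(k_up_liver, k_extra_tumor, k_other, t_end_h, dt_h=0.001):
    """Forward-Euler integration of dB/dt = -(k_up_liver + k_extra_tumor + k_other) * B.

    Returns the fraction of injected dose in each compartment at t_end_h.
    Rate constants are in 1/h; dt_h is the integration step in hours.
    """
    blood, liver, tumor, other = 1.0, 0.0, 0.0, 0.0
    for _ in range(round(t_end_h / dt_h)):
        to_liver = k_up_liver * blood * dt_h
        to_tumor = k_extra_tumor * blood * dt_h
        to_other = k_other * blood * dt_h
        blood -= to_liver + to_tumor + to_other
        liver += to_liver
        tumor += to_tumor
        other += to_other
    return {"blood": blood, "liver": liver, "tumor": tumor, "other": other}


# Rate constants picked from within the Table 2 ranges; k_other is hypothetical.
state = simulate_compartments(k_up_liver=0.1, k_extra_tumor=0.02,
                              k_other=0.08, t_end_h=24.0)
print(state)
print(math.exp(-0.2 * 24.0))  # analytical blood fraction for comparison
```

In this scheme the liver-to-tumor ratio is fixed by the ratio of the two rate constants, which is one reason real PBPK models add blood flow, organ volumes, and reversible exchange before fitting to measured biodistribution data.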

Experimental Protocols for Generating Foundational Biodistribution Data

Protocol 3.1: Quantitative Whole-Body Biodistribution via Radiolabeling

Objective: To obtain absolute, organ-level quantitative biodistribution data over multiple time points for PK model parameterization.

Materials & Workflow:

- Radiolabel Nanocarrier: Incorporate a gamma-emitting radioisotope (e.g., ¹¹¹In via DOTA chelation, ⁶⁴Cu, or ⁸⁹Zr) or a beta-emitting isotope (e.g., ³H, ¹⁴C) into the nanocarrier structure. Validate labeling stability (>95%) via size-exclusion chromatography.

- Dosing & Sacrifice: Administer a known dose (ID, injected dose) to animal models (e.g., tumor-bearing mice) intravenously. Use n ≥ 5 animals per time point.

- Tissue Harvest: At predetermined time points (e.g., 1, 4, 24, 72h), euthanize animals. Perfuse with saline via cardiac puncture to clear blood from organs. Excise all major organs (blood, heart, lungs, liver, spleen, kidneys, tumor, muscle, bone, etc.), weigh, and place in pre-weighed tubes.

- Quantification:

- For Gamma Emitters: Count each organ in a gamma counter (e.g., PerkinElmer Wizard2). Apply decay correction and background subtraction. Calculate %ID/g = (counts in organ / counts in injected standard) * (weight of standard / organ weight) * 100.

- For Beta Emitters: Digest tissues (e.g., with Soluene), add scintillation cocktail, and count in a liquid scintillation counter.

- Data Analysis: Plot %ID/g vs. time for each organ. Calculate area under the curve (AUC) for blood and tissues. These values are direct inputs for non-compartmental PK analysis and initial estimates for compartmental/PBPK models.
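The gamma-counting arithmetic above can be sketched as follows. This is a minimal example that assumes the counted standard is a known fraction of the injected dose (a common convention, but an assumption here); the function names, the `standard_fraction` parameter, and the sample numbers are hypothetical. The half-life of roughly 67.3 h used in the example corresponds to ¹¹¹In.

```python
import math


def decay_correct(net_counts, elapsed_h, half_life_h):
    """Scale background-subtracted counts back to a common reference time."""
    return net_counts * math.exp(math.log(2) * elapsed_h / half_life_h)


def percent_id_per_gram(organ_counts, standard_counts, standard_fraction,
                        organ_weight_g):
    """%ID/g from decay-corrected, background-subtracted counts.

    standard_fraction: fraction of the injected dose contained in the
    counted standard (e.g., 0.01 for a 1% aliquot).
    """
    injected_counts = standard_counts / standard_fraction
    return organ_counts / injected_counts / organ_weight_g * 100.0


background = 50.0  # counts measured in an empty tube (hypothetical)
liver = decay_correct(52_000 - background, elapsed_h=2.0, half_life_h=67.3)
standard = decay_correct(10_050 - background, elapsed_h=0.0, half_life_h=67.3)
print(f"Liver: {percent_id_per_gram(liver, standard, 0.01, 1.2):.2f} %ID/g")
```

If organs and standard are counted in the same session, the decay factor largely cancels; it matters mainly when counting is spread over hours or days.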

Protocol 3.2: Spatially-Resolved Biodistribution via Quantitative Fluorescence Imaging

Objective: To generate spatially-resolved, cellular-level biodistribution data for informing tissue-scale PK parameters and validating AI-based image analysis pipelines.

Materials & Workflow:

- Fluorescent Nanocarrier Preparation: Load nanocarriers with a near-infrared (NIR) dye (e.g., DiR, Cy7.5) or a fluorescent quantum dot at a controlled, reproducible ratio. Characterize fluorescence intensity per particle.

- In Vivo Imaging: Image anesthetized animals at multiple time points using a calibrated quantitative fluorescence imager (e.g., PerkinElmer IVIS, LI-COR Pearl). Use identical exposure settings, illumination intensity, and animal positioning. Include a reference standard with known dye concentration in the field of view.

- Ex Vivo Validation: After the final in vivo time point, harvest organs as described in Protocol 3.1 and image them ex vivo. This provides higher resolution and validates the in vivo region-of-interest (ROI) analysis.

- AI-Driven Image Analysis:

- Segmentation: Use a pre-trained U-Net or similar convolutional neural network (CNN) to automatically segment organs from white-light or autofluorescence images.

- Quantification: Within segmented ROIs, quantify total radiant efficiency ([photons/s/cm²/sr] / [μW/cm²]). Convert to pmol of dye or particles per gram using the calibration standard curve.

- Spatial Mapping: Apply pixel-by-pixel quantification to generate heatmaps of nanocarrier density within organs (e.g., distinguishing cortical vs. medullary kidney, or perivascular vs. diffuse tumor distribution). This spatial heterogeneity data is critical for advanced PBPK models.

Protocol 3.3: Correlative LC-MS/MS-Based Biodistribution of Payload

Objective: To directly quantify the active pharmaceutical ingredient (API) released from the nanocarrier in tissues, linking carrier biodistribution to pharmacodynamic (PD) activity.

Materials & Workflow:

- Dosing: Administer nanocarrier loaded with a quantifiable API (e.g., a chemotherapeutic like doxorubicin or a novel small molecule).

- Tissue Processing: Homogenize weighed tissue samples in an appropriate buffer (e.g., PBS:MeOH, 1:1). Spike with a known amount of internal standard (IS), a structurally analogous stable isotope-labeled compound.

- Sample Extraction: Perform protein precipitation or solid-phase extraction (SPE) to isolate the API from the tissue matrix.

- LC-MS/MS Analysis: Separate the API using liquid chromatography (LC) and detect/quantify it via tandem mass spectrometry (MS/MS) in Multiple Reaction Monitoring (MRM) mode.

- Quantification: Generate a standard curve for the API spiked into blank tissue homogenates. Calculate the concentration of API in each sample by comparing the API/IS peak area ratio to the standard curve. Express results as ng of API per gram of tissue. This data provides the direct link between nanocarrier PK (where the carrier goes) and PD (where the active drug is released).
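The standard-curve step can be illustrated with an ordinary least-squares line through the API/IS peak-area ratios of the matrix-matched standards. The sketch below assumes a linear, unweighted calibration (real assays often use weighted fits); the function names, homogenate volume parameter, and all numbers are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def api_ng_per_g(api_area, is_area, slope, intercept, tissue_g, homogenate_ml):
    """Back-calculate API concentration and express it per gram of tissue."""
    ratio = api_area / is_area                      # API/IS peak-area ratio
    conc_ng_per_ml = (ratio - intercept) / slope    # from the standard curve
    return conc_ng_per_ml * homogenate_ml / tissue_g


# Standards spiked into blank homogenate: concentration (ng/mL) vs. area ratio.
std_conc = [1.0, 10.0, 100.0]
std_ratio = [0.02, 0.2, 2.0]
slope, intercept = fit_line(std_conc, std_ratio)

print(api_ng_per_g(5000.0, 10000.0, slope, intercept,
                   tissue_g=0.1, homogenate_ml=1.0))
```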

Visualizing the Data-to-Model Pipeline

Diagram Title: AI-Driven PK/PD Modeling Workflow from Biodistribution Data

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Advanced Biodistribution Studies

| Item | Function & Rationale | Example Product / Vendor |

|---|---|---|

| Near-Infrared (NIR) Lipophilic Tracers (DiR, DiD) | Stable incorporation into lipid bilayers for long-term, sensitive in vivo tracking with minimal tissue autofluorescence. | Thermo Fisher Scientific Vybrant DiI/DiD/DiO/DiR Cell-Labeling Solutions |

| DOTA-NHS Ester & Radioisotopes (¹¹¹In, ⁶⁴Cu) | Enables covalent, stable chelation of gamma-emitting isotopes to proteins or surface-modified nanoparticles for quantitative SPECT/PET and gamma counting. | CheMatech DOTA-NHS-ester; Isotopes from Curium or Orano |

| Matrix-Matched Calibration Standards | Essential for accurate LC-MS/MS quantification of payload in tissues; corrects for variable ion suppression/enhancement across different organ matrices. | Cerilliant Certified Reference Materials (spiked into blank tissue homogenate) |

| Fluorescent Microspheres (100nm, PEGylated) | Critical size and surface charge controls for benchmarking nanocarrier behavior in in vivo biodistribution and in vitro flow experiments. | Thermo Fisher Scientific FluoSpheres Carboxylate-Modified Microspheres |

| Tissue Dissociation Kits (for single-cell biodistribution) | Gentle enzymatic dissociation of organs to single-cell suspensions for flow cytometry analysis of cell-type-specific nanoparticle uptake (e.g., hepatocytes vs. Kupffer cells). | Miltenyi Biotec GentleMACS Dissociator with associated enzyme kits |

| AI-Ready Imaging Datasets & Annotation Tools | Pre-labeled datasets of organ ROIs for training segmentation models; software for efficient manual annotation of novel imaging data. | Kaggle BioImage Datasets; MITK or 3D Slicer software |

From Pixels to Predictions: AI Tools and Pipelines for Biodistribution Analysis

Application Notes: AI-Augmented Modalities for Nanocarrier Biodistribution

Integrating multimodal imaging with AI transforms nanocarrier biodistribution research from descriptive to predictive. This synergy enables high-throughput, spatially resolved quantification of pharmacokinetic and pharmacodynamic relationships.

Table 1: Comparative Overview of AI-Enhanced Imaging Modalities for Nanocarrier Research

| Modality | Primary Data | AI-Enhanced Quantification | Key Nanocarrier Insight | Throughput |

|---|---|---|---|---|

| IVIS (Optical) | 2D/3D bioluminescent/fluorescent radiance | Semantic segmentation of organs; unmixing of multiple fluorophores. | Real-time whole-body trafficking & initial organ uptake. | High |

| PET/CT | Volumetric radiotracer concentration & anatomical CT. | Atlas-based automated organ segmentation; kinetic modeling (e.g., Patlak). | Absolute quantitative biodistribution; metabolic fate. | Medium |

| MS Imaging (MALDI) | Spatial m/z intensity maps. | Deep learning for ion image denoising & co-localization analysis. | Label-free, multiplexed detection of nanocarrier & payload. | Low |

Detailed Experimental Protocols

Protocol 1: AI-Segmented, Multi-Fluorophore IVIS for Longitudinal Trafficking

Objective: Quantify temporal organ accumulation of dual-labeled (lipid & payload) nanocarriers.

Materials:

- Mice administered with nanocarriers tagged with DiR (lipophilic dye) and a Cy5-labeled payload.

- IVIS Spectrum or equivalent in vivo imaging system.

- AI Segmentation Software (e.g., DeepLab, U-Net models trained on mouse atlases).

Procedure:

- Anesthetize mice and image at t=1, 4, 24, 48h post-injection.

- Acquire sequential scans at excitation/emission filters for DiR (745/800 nm) and Cy5 (640/680 nm).

- Apply spectral unmixing algorithm (built-in) to separate fluorescence signals.

- Export unmixed images and input into pre-trained AI segmentation model.

- The model outputs masks for liver, spleen, kidneys, tumors, etc.

- For each mask, extract total radiant efficiency ([p/s/cm²/sr] / [µW/cm²]) for both channels.

- Calculate organ-specific DiR:Cy5 ratio over time as a metric of carrier integrity.
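The last two steps (per-mask signal extraction and the DiR:Cy5 ratio) can be sketched in plain Python. This example assumes the unmixed channels and the label mask are already co-registered 2D arrays of equal shape; the label numbering, function names, and pixel values are hypothetical.

```python
def total_in_mask(image, mask, label):
    """Sum pixel values of `image` where `mask` equals `label`."""
    return sum(px
               for img_row, mask_row in zip(image, mask)
               for px, m in zip(img_row, mask_row)
               if m == label)


def integrity_ratios(dir_img, cy5_img, mask, labels):
    """DiR:Cy5 signal ratio per organ; NaN where no Cy5 signal is present."""
    ratios = {}
    for organ, label in labels.items():
        dir_total = total_in_mask(dir_img, mask, label)
        cy5_total = total_in_mask(cy5_img, mask, label)
        ratios[organ] = dir_total / cy5_total if cy5_total > 0 else float("nan")
    return ratios


# Tiny synthetic example: label 1 = liver, label 2 = spleen, 0 = background.
mask = [[1, 1, 0],
        [2, 2, 0]]
dir_img = [[4.0, 4.0, 1.0],
           [2.0, 2.0, 1.0]]
cy5_img = [[2.0, 2.0, 1.0],
           [4.0, 4.0, 1.0]]

print(integrity_ratios(dir_img, cy5_img, mask, {"liver": 1, "spleen": 2}))
```

A ratio that drifts over time in one organ but not another suggests the carrier and payload are separating there, although differences in tissue attenuation between the two wavelengths can also shift the ratio.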

Protocol 2: Atlas-Based PET/CT for Absolute Nanocarrier Pharmacokinetics

Objective: Determine the time-activity curve and standardized uptake value (SUV) of ⁸⁹Zr-labeled nanocarriers.

Materials:

- ⁸⁹Zr-labeled nanocarriers.

- Micro-PET/CT scanner.

- Digital Mouse Atlas (e.g., CIVM Atlas).

- Pharmacokinetic modeling software (e.g., PMOD).

Procedure:

- Administer ⁸⁹Zr-nanocarrier IV. Acquire dynamic PET scan for first 60min, then static scans at 4h, 24h, 48h.

- Perform low-dose CT for each time point for anatomy.

- Co-register all PET data to the CT reference.

- Non-rigidly register the Digital Mouse Atlas to the subject's CT scan using AI-driven registration tools.

- Apply atlas-derived organ ROIs to the co-registered PET data.

- Extract decay-corrected activity (kBq) and volume for each ROI.

- Calculate SUV = (tissue activity [kBq/g] / (injected dose [kBq] / body weight [g])).

- Fit ROI data to a two-tissue compartmental model for estimation of K₁ (influx) and k₃ (retention) rate constants.
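The SUV formula in the procedure above translates directly into code. The sketch below assumes ROI activity and injected dose are decay-corrected to the same reference time and that tissue density is about 1 g/mL (a common approximation, stated here as an assumption); the function name and example numbers are hypothetical.

```python
def suv(roi_activity_kbq, roi_volume_ml, injected_dose_kbq, body_weight_g,
        tissue_density_g_per_ml=1.0):
    """Standardized uptake value for one ROI.

    SUV = tissue activity [kBq/g] / (injected dose [kBq] / body weight [g]).
    Both activities must refer to the same decay-correction time point.
    """
    tissue_mass_g = roi_volume_ml * tissue_density_g_per_ml
    tissue_conc_kbq_per_g = roi_activity_kbq / tissue_mass_g
    return tissue_conc_kbq_per_g / (injected_dose_kbq / body_weight_g)


# Hypothetical liver ROI in a 25 g mouse injected with 5 MBq.
print(suv(roi_activity_kbq=50.0, roi_volume_ml=1.0,
          injected_dose_kbq=5000.0, body_weight_g=25.0))
```

An SUV of 1 corresponds to the activity concentration expected if the dose were spread uniformly through the body, so values above 1 indicate relative accumulation.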

Protocol 3: AI-Denoised MALDI-MS Imaging for Multiplexed Spatial Biodistribution

Objective: Map the unlabeled nanocarrier lipid, its encapsulated drug, and an endogenous biomarker (e.g., a phospholipid) simultaneously.

Materials:

- Fresh-frozen tissue sections (10 µm) on conductive slides.

- MALDI matrix (e.g., DHB for lipids, α-CHCA for drug).

- High-resolution MALDI-TOF/TOF or FT-ICR mass spectrometer equipped with an imaging source.

- Denoising AI software (e.g., convolutional neural network like CARE).

Procedure:

- Apply matrix uniformly using a robotic sprayer.

- Acquire MS imaging data in positive/negative ion mode with a spatial resolution of 50 µm.

- Pre-process data (baseline correction, normalization to TIC) using spectrometer software.

- Export ion images for specific m/z values: nanocarrier lipid (e.g., m/z 780.6), drug (e.g., m/z 408.2), and a tissue-specific lipid (e.g., m/z 886.6 for PI(38:4)).

- Apply a pre-trained denoising CNN to each ion image to enhance signal-to-noise while preserving spatial features.

- Use AI-based colocalization algorithms (e.g., pixel-wise correlation clustering) to analyze spatial relationships between the three channels.

- Generate probability maps of nanocarrier-drug co-localization within specific histological regions.
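As a simple baseline for the colocalization step, a pixel-wise Pearson correlation between two ion images is sketched below. This stands in for, and is much simpler than, the AI-based correlation clustering named in the procedure; the function names and the tiny synthetic images are hypothetical, and the images are assumed to be co-registered with identical dimensions.

```python
import math


def flatten(image):
    """Turn a 2D ion image (list of rows) into a flat list of pixel values."""
    return [px for row in image for px in row]


def pearson(a, b):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    # Raises ZeroDivisionError if either image is perfectly uniform.
    return cov / math.sqrt(var_a * var_b)


carrier_lipid = [[0.0, 1.0], [2.0, 3.0]]   # e.g., carrier lipid ion image
drug = [[0.0, 2.0], [4.0, 6.0]]            # e.g., drug ion image
tissue_lipid = [[3.0, 2.0], [1.0, 0.0]]    # e.g., endogenous lipid ion image

print("carrier vs drug:", pearson(flatten(carrier_lipid), flatten(drug)))
print("carrier vs tissue lipid:",
      pearson(flatten(carrier_lipid), flatten(tissue_lipid)))
```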

Visualizations

Title: AI-Driven IVIS Quantitative Workflow

Title: PET Compartmental Model for Nanocarriers

Title: AI-Enhanced MS Imaging Pipeline

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for AI-Enhanced Biodistribution Studies

| Item Name | Function in Research | Specific Application Example |

|---|---|---|

| ⁸⁹Zr-Desferrioxamine (DFO) | Chelator for radioisotope labeling of nanocarriers. | Enables long-term PET tracking of nanocarrier pharmacokinetics over days. |

| Near-IR Fluorophore Conjugates (DiR, Cy5.5) | Provides optical contrast for in vivo imaging. | Dual-labeling of carrier structure and payload for IVIS integrity studies. |

| MALDI Matrix (DHB, α-CHCA) | Co-crystallizes with analyte, enables laser desorption/ionization. | Applied to tissue for label-free detection of nanocarrier lipids & drugs via MSI. |

| AI Model Weights (Pre-trained U-Net) | Software file containing learned parameters for image segmentation. | Enables immediate, accurate organ segmentation from IVIS/CT without manual ROI drawing. |

| Digital Mouse Atlas | Standardized 3D map of mouse anatomy with organ labels. | Serves as a template for AI-driven registration and analysis of PET/CT data. |

| Kinetic Modeling Software (PMOD) | Performs compartmental modeling on dynamic PET data. | Converts time-activity curves into quantitative rate constants (K₁, k₃). |

This document provides detailed application notes and protocols for employing Convolutional Neural Networks (CNNs) in the segmentation of organs and quantification of nanocarrier signals from biomedical images. This work is a core methodological pillar within a broader thesis on AI-based quantification of nanocarrier biodistribution. Accurate, high-throughput analysis of in vivo imaging data (e.g., from fluorescence, bioluminescence, MRI, or CT) is critical for evaluating targeting efficiency, pharmacokinetics, and safety profiles of novel drug delivery systems. CNNs automate and significantly enhance the reproducibility of extracting quantitative biodistribution metrics, moving beyond subjective manual region-of-interest (ROI) analysis.

Core CNN Architectures for Biomedical Image Segmentation

Current literature and tools favor encoder-decoder architectures that capture context and enable precise localization.

Table 1: Key CNN Architectures for Organ Segmentation

| Architecture | Key Innovation | Typical Use Case in Biodistribution | Strengths for this Field |

|---|---|---|---|

| U-Net | Symmetric skip connections between encoder and decoder. | Segmenting organs from CT/MRI for anatomical context. | Excellent with limited training data; precise boundaries. |

| nnU-Net | Self-configuring framework; automates preprocessing and training. | Out-of-the-box robust segmentation of diverse organ sets. | State-of-the-art performance; eliminates architecture search. |

| DeepLabv3+ | Atrous Spatial Pyramid Pooling (ASPP) & Decoder. | Segmenting organs & lesions in high-resolution whole-body scans. | Captures multi-scale contextual information effectively. |

| Mask R-CNN | Two-stage: proposes regions then generates masks. | Isolating specific, often sparse, regions like tumors. | Excellent for instance segmentation of discrete targets. |

Application Note: Multi-Modal Workflow for Nanocarrier Signal Co-localization

Objective: To quantify nanocarrier-derived signal (e.g., near-infrared fluorescence, NIRF) intensity within precisely segmented organ volumes.

Logical Workflow:

Diagram Title: CNN Pipeline for Signal Quantification in Organs

Detailed Experimental Protocols

Protocol 4.1: Training a U-Net for Murine Organ Segmentation from CT

- Objective: Train a CNN to segment major organs (liver, spleen, kidneys, heart, lungs) in murine micro-CT scans.

- Input Data: 3D micro-CT volumes (DICOM format). Minimum dataset: 30 annotated volumes.

- Preprocessing:

- Resampling: Resample all volumes to a common isotropic resolution (e.g., 100 µm voxel edge length).

- Intensity Normalization: Clip intensities to the 0.5th and 99.5th percentiles, then scale to [0, 1].

- Data Augmentation: Apply on-the-fly 3D affine transformations (rotation ±15°, scaling ±10%, elastic deformations).

- Model & Training:

- Implement a 3D U-Net with 4 resolution levels, 32 initial filters.

- Loss Function: Use a combination of Dice Loss and Cross-Entropy Loss (weighted 0.7:0.3).

- Optimizer: Adam with initial learning rate of 3e-4, halved on validation loss plateau.

- Training: Train for 1000 epochs with batch size 2. Use 80/10/10 train/validation/test split.

- Validation: Monitor Dice Similarity Coefficient (DSC) on the held-out validation set.
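To make the weighted loss concrete, the sketch below computes a 0.7:0.3 combination of soft Dice loss and binary cross-entropy for a single foreground class, using flat lists of per-voxel probabilities. It is a framework-free illustration only; an actual training run would use a differentiable, multi-class implementation from a deep learning library. The function name and sample values are hypothetical.

```python
import math


def dice_ce_loss(probs, targets, w_dice=0.7, w_ce=0.3, eps=1e-6):
    """Weighted sum of soft Dice loss and binary cross-entropy.

    probs:   predicted foreground probabilities per voxel (0..1)
    targets: ground-truth labels per voxel (0 or 1)
    """
    intersection = sum(p * t for p, t in zip(probs, targets))
    dice = (2.0 * intersection + eps) / (sum(probs) + sum(targets) + eps)

    ce = 0.0
    for p, t in zip(probs, targets):
        p = min(max(p, eps), 1.0 - eps)   # clamp to avoid log(0)
        ce -= t * math.log(p) + (1 - t) * math.log(1.0 - p)
    ce /= len(probs)

    return w_dice * (1.0 - dice) + w_ce * ce


targets = [1, 0, 1, 1, 0]
good = dice_ce_loss([0.9, 0.1, 0.8, 0.95, 0.05], targets)
poor = dice_ce_loss([0.2, 0.7, 0.3, 0.40, 0.60], targets)
print(f"good prediction loss={good:.3f}, poor prediction loss={poor:.3f}")
```

The Dice term rewards overlap regardless of organ size, which helps with small structures such as the spleen, while the cross-entropy term keeps per-voxel gradients well behaved early in training.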

Protocol 4.2: Quantifying Nanocarrier Fluorescence within CNN-Generated Masks

- Objective: Extract total fluorescence radiant efficiency from NIRF images within segmented organ regions.

- Prerequisites: Trained organ segmentation model (Protocol 4.1) and co-registered CT/NIRF image sets.

- Procedure:

- Inference: Process the CT volume through the trained U-Net to generate a multi-label organ mask volume.

- Registration: Using software (e.g., AMIRA, 3D Slicer), rigidly register the in vivo NIRF surface reconstruction or tomographic data to the CT coordinate space. Verify alignment.

- Mask Application: For each organ label i in the mask, create a binary volume M_i. Isolate the NIRF signal within each organ: Signal_i = NIRF_volume * M_i.

- Quantification: For each Signal_i, compute:

- Total Flux: Sum of all pixel values within the mask (Σ pixel values).

- Mean Intensity: Average pixel value within the mask.

- Signal-to-Background Ratio (SBR): (Mean Intensity in Organ) / (Mean Intensity in a reference background region).

- Normalization (Optional): Normalize Total Flux per organ by the organ's segmented volume (from mask voxel count) to get signal density.
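The mask-based metrics above can be sketched on flattened voxel lists. The example assumes the NIRF volume and the label mask are already co-registered and flattened in the same voxel order, and that a dedicated mask label marks the reference background region; the function name, label values, and numbers are hypothetical.

```python
def organ_metrics(signal, mask, label, background_label, voxel_volume_mm3):
    """Total flux, mean intensity, SBR, and signal density for one organ."""
    organ = [s for s, m in zip(signal, mask) if m == label]
    background = [s for s, m in zip(signal, mask) if m == background_label]

    total_flux = sum(organ)
    mean_intensity = total_flux / len(organ)
    background_mean = sum(background) / len(background)
    volume_mm3 = len(organ) * voxel_volume_mm3   # from mask voxel count

    return {
        "total_flux": total_flux,
        "mean_intensity": mean_intensity,
        "sbr": mean_intensity / background_mean,
        "volume_mm3": volume_mm3,
        "signal_density": total_flux / volume_mm3,
    }


# Six voxels: label 1 = liver, label 2 = spleen, label 9 = background ROI.
signal = [10.0, 12.0, 2.0, 2.0, 30.0, 2.0]
mask = [1, 1, 9, 9, 2, 9]

print(organ_metrics(signal, mask, label=1, background_label=9,
                    voxel_volume_mm3=0.001))
```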

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for AI-Driven Biodistribution Studies

| Item | Function & Relevance |

|---|---|

| Near-Infrared (NIR) Fluorophores (e.g., ICG, DiR, Cy7) | Labels for nanocarriers; enable deep-tissue in vivo fluorescence imaging with minimal background autofluorescence. |

| IVIS Spectrum or MS FX Pro Imaging System | Preclinical in vivo imaging system for acquiring 2D/3D bioluminescent and fluorescent whole-body data. |

| Micro-CT Scanner (e.g., SkyScan, Quantum FX) | Provides high-resolution 3D anatomical data for organ segmentation and anatomical context for signal co-localization. |

| 3D Slicer / AMIRA / ITK-SNAP Software | Open-source/commercial platforms for manual image annotation, 3D visualization, and multi-modal image registration. |

| PyTorch / TensorFlow with MONAI Framework | Core deep learning libraries. MONAI provides domain-specific tools (loss functions, metrics, networks) for medical imaging. |

| nnU-Net Framework | Self-configuring segmentation pipeline; the benchmark tool for robust organ segmentation without extensive parameter tuning. |

| High-Performance GPU (NVIDIA, ≥12GB VRAM) | Essential for training 3D CNN models on medical image volumes within a reasonable timeframe. |

Data Output and Quantification

Table 3: Exemplar Biodistribution Data Output from CNN Analysis

| Animal ID | Organ | Segmented Volume (mm³) | Total NIRF Flux (p/s/cm²/sr) | Mean NIRF Intensity | % of Injected Dose/g* |

|---|---|---|---|---|---|

| M1 | Liver | 987.2 | 5.67e+09 | 5.74e+06 | 12.5 |

| M1 | Spleen | 89.5 | 8.92e+08 | 9.97e+06 | 25.3 |

| M1 | Left Kidney | 132.1 | 3.21e+08 | 2.43e+06 | 4.8 |

| M1 | Lung | 168.3 | 1.05e+08 | 6.24e+05 | 1.2 |

| M1 | Heart | 85.6 | 4.88e+07 | 5.70e+05 | 0.9 |

| M2 | Liver | 1021.5 | 6.01e+09 | 5.88e+06 | 13.1 |

| ... | ... | ... | ... | ... | ... |

*Calculated using a standard curve from ex vivo organ homogenates.

Critical Pathway: From Raw Images to Thesis Insights

Diagram Title: AI Quantification Drives Thesis Research Cycle

Application Notes

The integration of multi-omic data with spatiotemporal biodistribution profiles represents a paradigm shift in nanocarrier research. By moving "beyond pixels" of traditional imaging, this approach enables the deconvolution of complex biological responses to nanotherapeutics, linking pharmacokinetics to pharmacodynamic outcomes. Within an AI-based quantification thesis, this integration provides the high-dimensional, multi-modal data required for training predictive models of nanocarrier efficacy and toxicity.

Key Insights:

- Predictive Modeling: AI/ML algorithms, such as graph neural networks (GNNs) and multimodal deep learning, can identify non-linear relationships between omics signatures (e.g., liver proteome changes) and biodistribution hotspots (e.g., RES organ accumulation).

- Mechanistic Elucidation: Correlating transcriptomic data from tumor sites with nanocarrier accumulation levels can reveal genes and pathways involved in enhanced permeability and retention (EPR) or, conversely, in clearance mechanisms.

- Safety Profiling: Integrative analysis of blood metabolomic data and biodistribution patterns can provide early prediction of off-target effects and organ-specific toxicities.

Table 1: Representative Multi-Omic Data Metrics Correlated with Biodistribution

| Omic Layer | Typical Measured Features | Analysis Platform | Correlation Target with Biodistribution | Exemplary p-value Range |

|---|---|---|---|---|

| Transcriptomics | 20,000+ gene expression counts | RNA-Seq (Illumina) | Tumor vs. Liver accumulation ratio | 1e-5 to 1e-10 |

| Proteomics | ~5,000 quantified proteins | LC-MS/MS (TMT labeling) | Opsonin protein levels vs. Plasma AUC | 1e-3 to 1e-8 |

| Metabolomics | 500+ metabolites | GC-MS / LC-MS | Lipid metabolites vs. Hepatic clearance rate | 1e-2 to 1e-6 |

| Lipidomics | 1,000+ lipid species | Shotgun LC-MS | Serum lipid profile vs. PEGylated carrier half-life | 1e-3 to 1e-7 |

Table 2: AI Model Performance on Integrated Data Prediction Tasks

| Prediction Task | Model Architecture | Input Data Modalities | Mean Absolute Error (MAE) / AUC | Key Integrated Feature |

|---|---|---|---|---|

| Liver Accumulation (%ID/g) | Graph Neural Network (GNN) | Imaging, Proteomics, Metabolomics | MAE: 2.8 %ID/g | Complement C3 protein level |

| Tumor Targeting Specificity | Multimodal Deep Learning | Imaging, Transcriptomics | AUC: 0.94 | Hypoxia-inducible gene signature |

| Renal Clearance Rate | Random Forest Regression | Imaging, Metabolomics, Lipidomics | MAE: 0.15 mL/min | Tryptophan metabolite ratio |

Experimental Protocols

Protocol 1: Integrated Biodistribution and Transcriptomic Profiling from Tissue Samples

Objective: To correlate nanocarrier biodistribution with whole-transcriptome gene expression in target and off-target organs.

Materials:

- Fluorescently or radio-labeled nanocarrier

- Animal model (e.g., tumor-bearing mouse)

- IVIS Spectrum or PET/CT imaging system

- RNAlater stabilization solution

- Tissue homogenizer

- RNA extraction kit (e.g., RNeasy Plus Mini Kit)

- Next-generation sequencing platform

Procedure:

- Administration & Biodistribution: Administer the nanocarrier intravenously. At predetermined time points (e.g., 1, 4, 24, 48h), euthanize animals (n=5 per group).

- Quantitative Ex-Vivo Imaging: Excise organs of interest (liver, spleen, kidneys, tumor, heart, lungs). Image organs using an ex-vivo imaging system (IVIS) to quantify fluorescence intensity or use a gamma counter for radiolabels. Calculate % injected dose per gram (%ID/g) for each organ.

- Paired Tissue Processing: Immediately after imaging, bisect each organ. Place one half in RNAlater for RNA sequencing. Snap-freeze the other half for potential proteomic analysis.

- RNA Sequencing: Extract total RNA from RNAlater-preserved tissues following kit protocols. Assess RNA integrity (RIN > 8). Prepare cDNA libraries (e.g., using poly-A selection) and sequence on an Illumina platform (minimum 30M paired-end reads per sample).

- Data Integration: Align RNA-seq reads to the reference genome. Generate gene count matrices. Using AI/ML pipelines (e.g., in Python/R), perform multi-variate regression or canonical correlation analysis (CCA) between gene expression vectors and the %ID/g values across all organs and time points.
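Before fitting multivariate models, a simple per-gene screen can flag candidates. The sketch below ranks genes by Spearman correlation between expression and %ID/g across samples. This is a simpler univariate substitute for the regression or CCA named in the protocol, not an implementation of them; the gene names, values, and function names are hypothetical.

```python
def ranks(values):
    """1-based ranks with ties assigned their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def spearman(x, y):
    """Spearman rank correlation (Pearson correlation of the ranks)."""
    rx, ry = ranks(x), ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)


# One %ID/g value and one normalized expression value per sample.
id_per_g = [12.5, 25.3, 4.8, 1.2, 0.9]
expression = {
    "GeneA": [50.0, 90.0, 20.0, 8.0, 5.0],
    "GeneB": [5.0, 1.0, 9.0, 20.0, 30.0],
}

scores = {gene: spearman(values, id_per_g) for gene, values in expression.items()}
for gene, rho in sorted(scores.items(), key=lambda kv: -abs(kv[1])):
    print(gene, round(rho, 3))
```

With thousands of genes, the resulting p-values need multiple-testing correction, and samples from the same animal are not independent, so this screen is best treated as hypothesis generation.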

Protocol 2: Serum Metabolomic Profiling for Predictive Pharmacokinetic Modeling

Objective: To identify serum metabolic signatures predictive of nanocarrier clearance and biodistribution.

Materials:

- Serum collection tubes (without anticoagulant for metabolomics)

- Cold methanol, acetonitrile (LC-MS grade)

- Centrifugal filters (3 kDa MWCO)

- LC-MS system with reversed-phase and HILIC columns

- Stable isotope-labeled internal standards

Procedure:

- Serial Blood Collection: Collect blood (e.g., via submandibular vein) at multiple time points post-nanocarrier injection (e.g., 5 min, 1h, 6h, 24h). Allow blood to clot, centrifuge (2000 x g, 10 min, 4°C), and aliquot serum.

- Metabolite Extraction: For each serum sample, mix 50 µL serum with 200 µL cold methanol containing internal standards. Vortex vigorously for 1 min and incubate at -20°C for 1 hour. Centrifuge at 14,000 x g for 15 min at 4°C.

- Sample Clean-up: Transfer supernatant to a 3 kDa MWCO filter. Centrifuge at 12,000 x g for 30 min at 4°C. Collect filtrate and dry under nitrogen stream. Reconstitute in appropriate LC-MS solvent.

- LC-MS Analysis: Analyze samples using both reversed-phase (for lipids, non-polar metabolites) and HILIC (for polar metabolites) chromatography coupled to a high-resolution mass spectrometer in both positive and negative ionization modes.

- Integrative AI Analysis: Process raw MS data (peak picking, alignment, annotation). Integrate the time-series metabolomic data with pharmacokinetic parameters (e.g., AUC, clearance) derived from concurrent in vivo imaging. Use a time-aware neural network (e.g., LSTM) to model the relationship between early metabolic shifts (e.g., 1h timepoint) and terminal biodistribution outcomes (24h).

Visualization Diagrams

Multi-Omic Biodistribution Integration Workflow

Inflammatory Pathway Linking Omics to Imaging

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Integrated Studies

| Item | Function | Key Consideration for Integration |

|---|---|---|

| Multimodal Nanocarrier | Carries drug, contains contrast agent (e.g., NIRF dye, radionuclide) for tracking, and is compatible with omics analysis. | Label must not interfere with omics assays (e.g., lanthanide labels for MS over fluorescent dyes for RNA-seq). |

| RNAlater Stabilization Solution | Preserves RNA integrity in tissues post-excision for accurate transcriptomics. | Allows same-tissue analysis: one half for imaging/%ID/g, adjacent half for RNA-seq. |

| Isobaric Tagging Reagents (e.g., TMTpro 16plex) | Enables multiplexed quantitative proteomics from multiple organs/time points in a single MS run. | Reduces batch effects, directly correlating protein abundance across all biodistribution samples. |

| Stable Isotope-Labeled Internal Standards (for Metabolomics) | Enables absolute quantification of metabolites in serum/plasma. | Critical for generating consistent metabolic data for AI training across longitudinal studies. |

| Data Integration Software (e.g., Python Pandas, R tidyverse, KNIME) | Harmonizes disparate data types (images, counts, concentrations) into a unified analysis table. | Preprocessing (normalization, scaling) is essential before AI model input. |

| AI/ML Platform (e.g., PyTorch, TensorFlow, scikit-learn) | Provides algorithms for multimodal learning, regression, and feature importance ranking. | Graph Neural Networks (GNNs) are particularly suited for organ-network data. |

This document serves as a foundational Application Note within a broader thesis on AI-based quantification of nanocarrier biodistribution. The central hypothesis posits that Quantitative Structure-Property Relationship (QSPR) modeling, powered by modern machine learning (ML) and artificial intelligence (AI), can accurately predict the in vivo fate of nanocarriers from their physicochemical descriptors. This protocol details the experimental and computational pipeline to develop, validate, and deploy such predictive models, aiming to accelerate the rational design of targeted drug delivery systems.

Core Predictive Modeling Workflow

The following diagram illustrates the integrated experimental-computational workflow essential for building a robust AI-QSPR model for biodistribution prediction.

Diagram Title: AI-QSPR Model Development Pipeline for Nanocarrier Biodistribution

Key Experimental Protocols

Protocol 3.1: High-Throughput Ex Vivo Biodistribution Profiling

Objective: To generate quantitative organ-level biodistribution data for a library of varied nanocarriers.

Materials: See "Scientist's Toolkit" (Section 6).

Procedure:

- Nanocarrier Labeling & Administration: Label nanocarriers with a near-infrared (NIR) dye (e.g., DiR) or radiolabel (¹²⁵I). Purify to remove free label. Inject intravenously into animal models (e.g., mice, n=5-6 per formulation) at a standardized dose (e.g., 5 mg/kg nanoparticle weight).

- Time-Point Sacrifice & Organ Harvest: Euthanize animals at pre-defined time points (e.g., 1, 4, 24, 48 h). Perfuse with saline via cardiac puncture. Harvest target organs: heart, lungs, liver, spleen, kidneys, and tumor (if applicable). Weigh each organ precisely.

- Fluorescence/Radioactivity Quantification: For NIR labels: Image organs using an in vivo imaging system (IVIS). Homogenize organs in PBS and measure fluorescence intensity with a plate reader. Generate a standard curve from spiked control organs for quantification. For radiolabels: Count radioactivity in each organ using a gamma counter.

- Data Normalization: Calculate percentage of injected dose per gram of tissue (%ID/g) using the formula: (Signal in organ / Organ weight) / (Total injected signal) * 100%. Calculate Area Under the Curve (AUC) for each organ across time points.
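The AUC step can be sketched with the linear trapezoidal rule over the sampled time points. The function name and the example liver values are hypothetical; the result is in %ID/g·h when time is in hours.

```python
def auc_trapezoid(times_h, values):
    """Area under a concentration-time curve by the linear trapezoidal rule."""
    if len(times_h) != len(values):
        raise ValueError("times_h and values must have the same length")
    auc = 0.0
    for i in range(1, len(times_h)):
        interval = times_h[i] - times_h[i - 1]
        auc += interval * (values[i] + values[i - 1]) / 2.0
    return auc


times_h = [1.0, 4.0, 24.0, 48.0]            # sampling times from the protocol
liver_id_per_g = [30.0, 35.0, 20.0, 10.0]   # made-up mean %ID/g values

print(auc_trapezoid(times_h, liver_id_per_g), "%ID/g*h")
```

Because each animal contributes a single time point in a terminal study, the AUC is computed from group means, and its uncertainty has to be estimated separately (for example by resampling animals within each time point).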

Protocol 3.2: Comprehensive Nanocarrier Physicochemical Characterization

Objective: To generate input feature data (descriptors) for QSPR modeling.

Procedure:

- Hydrodynamic Size & Zeta Potential: Dilute nanocarriers in relevant buffer (e.g., PBS, pH 7.4). Perform triplicate measurements using Dynamic Light Scattering (DLS) and Electrophoretic Light Scattering.

- Surface Chemistry & Conjugation Density: Quantify surface PEG density via ¹H NMR or colorimetric assays (e.g., iodine complex for PEG). Determine targeting ligand density using methods like fluorescence labeling or ELISA.

- Morphology & Core Properties: Analyze shape by Transmission Electron Microscopy (TEM). Determine core crystallinity by X-ray Diffraction (XRD). Measure drug loading efficiency and encapsulation efficiency via HPLC/UV-Vis.

Data Presentation: Model Performance & Biodistribution

Table 1: Exemplar Biodistribution Data (%ID/g, 24h) for a Model Library of Polymeric NPs (Mean ± SD, n=5)

| NP Formulation ID | Size (nm) | Zeta (mV) | % PEG Density | Liver | Spleen | Kidneys | Lungs | Tumor |

|---|---|---|---|---|---|---|---|---|

| NP-A | 80 ± 5 | -3 ± 1 | 0% | 35.2 ± 4.1 | 12.5 ± 1.8 | 8.1 ± 0.9 | 5.2 ± 0.7 | 0.5 ± 0.1 |

| NP-B | 85 ± 4 | -10 ± 2 | 30% | 18.7 ± 2.3 | 6.8 ± 1.1 | 6.5 ± 0.8 | 3.1 ± 0.5 | 3.2 ± 0.4 |

| NP-C (Targeted) | 90 ± 6 | -8 ± 1 | 25% | 15.3 ± 2.1 | 5.9 ± 0.9 | 7.2 ± 1.0 | 2.8 ± 0.4 | 8.9 ± 1.2 |

Table 2: Performance Metrics of Different AI/ML Models in Predicting Liver AUC (5-fold Cross-Validation)

| Model Type | Key Features Used | R² (Training) | R² (Validation) | Mean Absolute Error (MAE, %ID/g*h) |

|---|---|---|---|---|

| Linear Regression | Size, Zeta, %PEG | 0.65 | 0.58 | 45.2 |

| Random Forest | Size, Zeta, %PEG, Ligand Density, PDI | 0.92 | 0.81 | 18.7 |

| Graph Neural Net | Molecular graph of polymer, surface motifs | 0.98 | 0.88 | 12.3 |

| Support Vector Machine | All physicochemical descriptors | 0.89 | 0.79 | 21.5 |
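The metrics reported in Table 2 can be computed as sketched below, together with a simple way to build 5-fold splits. This is a library-free illustration under the assumption that formulations are independent samples; the function names are hypothetical, and no model fitting is shown.

```python
def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between observed and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def kfold_indices(n_samples, k=5):
    """Yield (train, test) index lists; every sample is tested exactly once."""
    folds = [list(range(i, n_samples, k)) for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [j for f in folds[:i] + folds[i + 1:] for j in f]
        yield train, test


# Made-up observed vs. predicted liver AUC values for four formulations.
observed = [100.0, 200.0, 300.0, 400.0]
predicted = [110.0, 190.0, 320.0, 380.0]
print("R2:", r2_score(observed, predicted))
print("MAE:", mean_absolute_error(observed, predicted))
print("folds:", [test for _, test in kfold_indices(10, k=5)])
```

A large gap between training and validation R², as for several models in the table, is the usual sign of overfitting and a reason to report the validation figure.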

Model Interpretation & Biological Pathway Mapping

A critical output of AI-QSPR is identifying key properties that govern organ-specific uptake, often linked to biological pathways. The diagram below maps how model-identified features correlate with the dominant cellular clearance pathways.

Diagram Title: Key Nanocarrier Properties and Their Dominant Clearance Pathways

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Example Product/Brand | Primary Function in Protocol |

|---|---|---|

| NIR Fluorescent Dyes | DiR, DiD, Cy7.5 NHS Ester (Lumiprobe) | Stable, hydrophobic dyes for in vivo and ex vivo tracking of nanocarrier biodistribution via fluorescence imaging. |

| Radiolabeling Kits | ¹²⁵I-Bolton-Hunter Reagent (PerkinElmer) | Provides a reliable method for covalent radiolabeling of nanocarrier surfaces for highly quantitative gamma counting. |

| PEGylation Reagents | mPEG-NHS (5kDa, 10kDa) (Creative PEGWorks) | Standardized reagents for introducing stealth properties; key variable for QSPR feature set. |

| Targeting Ligands | cRGDfK Peptide, Trastuzumab (Bio-Synthesis, Inc.) | Well-characterized ligands for active targeting; used to model the impact of surface functionalization. |

| In Vivo Imaging System | IVIS Spectrum (PerkinElmer) | Enables longitudinal whole-body imaging and quantitative ex vivo organ fluorescence measurement. |

| DLS/Zeta Potential Analyzer | Zetasizer Ultra (Malvern Panalytical) | Provides core physicochemical descriptors (size, PDI, zeta potential) with high accuracy and reproducibility. |

| AI/ML Development Platform | Python with RDKit, Scikit-learn, PyTorch Geometric | Open-source libraries for molecular descriptor calculation, traditional ML, and graph-based neural network modeling. |

Application Notes

The integration of artificial intelligence (AI) with advanced imaging modalities is revolutionizing the quantitative analysis of nanocarrier biodistribution in preclinical oncology models. This case study focuses on AI-driven methodologies for quantifying the spatiotemporal distribution of liposomal and polymeric nanoparticle formulations, critical for optimizing targeted drug delivery systems.

Core AI Integration: Convolutional Neural Networks (CNNs), particularly U-Net and ResNet architectures, are employed for the semantic segmentation of nanoparticles within high-resolution ex vivo tissue micrographs (e.g., from fluorescence, dark-field, or mass spectrometry imaging). Recurrent Neural Networks (RNNs) can model temporal distribution kinetics from longitudinal in vivo imaging data (e.g., IVIS, PET/CT). AI models are trained on manually annotated datasets to recognize nanoparticle-specific signals against complex tissue backgrounds, achieving superior accuracy and throughput compared to traditional thresholding techniques.

Key Quantitative Insights: AI analysis provides multi-parametric quantification beyond simple intensity measurements. This includes particle count per tissue area, cluster size distribution, penetration depth from vasculature, and co-localization coefficients with specific cellular markers (e.g., tumor-associated macrophages, endothelial cells). This granular data is essential for establishing structure-activity relationships (SAR) linking nanoparticle physicochemical properties to in vivo performance.
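One of the metrics named above, a co-localization coefficient, can be illustrated with a Manders-style overlap fraction. The sketch below is a minimal pure-Python version that assumes two already-registered, background-subtracted intensity images of equal size; the function name, threshold, and pixel values are hypothetical and chosen only for illustration.

```python
def manders_m1(np_channel, marker_channel, marker_threshold=0.0):
    """Fraction of total nanoparticle intensity found in marker-positive pixels.

    Both inputs are 2D lists of equal shape (assumption: images are already
    registered and background-subtracted).
    """
    total = 0.0
    overlapping = 0.0
    for np_row, marker_row in zip(np_channel, marker_channel):
        for np_val, marker_val in zip(np_row, marker_row):
            total += np_val
            if marker_val > marker_threshold:
                overlapping += np_val
    return overlapping / total if total > 0 else 0.0


# Hypothetical 3x3 example: nanoparticle channel and a cell-marker channel.
np_img = [[0, 10, 0],
          [0, 30, 0],
          [0, 0, 60]]
marker = [[0, 5, 0],
          [0, 5, 0],
          [0, 0, 0]]

m1 = manders_m1(np_img, marker)
print(f"Manders M1 = {m1:.2f}")
```

Here 40 of the 100 intensity units sit in marker-positive pixels, so the coefficient is 0.40. Real pipelines would typically apply this per segmented region and choose the threshold from control sections.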

Table 1: AI-Enhanced Quantitative Biodistribution Data from a Representative Study

| Nanoparticle Type | Targeting Ligand | Tumor Model | Primary Metric | Control Group Mean ± SD | Test Formulation Mean ± SD | AI Model Used | P-value |

|---|---|---|---|---|---|---|---|

| PEGylated Liposome | None | Murine 4T1 | % Injected Dose/g Tumor | 2.1 ± 0.5 %ID/g | 5.8 ± 1.2 %ID/g | 3D U-Net | <0.01 |

| PLGA Nanoparticle | Anti-EGFR Fab' | Patient-Derived Xenograft | Particles per mm² in Tumor Core | 120 ± 35 /mm² | 450 ± 89 /mm² | Mask R-CNN | <0.001 |

| Polymeric Micelle | iRGD peptide | Transgenic RIP-Tag2 | Penetration Depth (µm) from Vessel | 40 ± 12 µm | 85 ± 18 µm | Custom CNN | <0.01 |

Key AI-derived insights: (1) Cluster analysis: the test formulation showed 60% higher dispersion (smaller cluster size). (2) Spatial correlation: nanoparticle signal correlated strongly (R² = 0.78) with perfused vasculature. (3) Temporal pattern: peak accumulation shifted 12 h earlier than in the control.
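The "% Injected Dose/g" metric in the table follows a standard normalization: the signal recovered from a tissue is divided by the injected signal and by the tissue mass. The sketch below shows that arithmetic only; the function name and the numbers are hypothetical and are not taken from the study in the table.

```python
def percent_id_per_gram(tissue_signal, injected_signal, tissue_mass_g):
    """Return %ID/g given tissue signal, injected signal, and tissue mass.

    Signals must share the same units (e.g., decay-corrected counts).
    """
    if injected_signal <= 0 or tissue_mass_g <= 0:
        raise ValueError("injected_signal and tissue_mass_g must be positive")
    percent_id = 100.0 * tissue_signal / injected_signal
    return percent_id / tissue_mass_g


# Hypothetical example: 1,500 counts in a 0.25 g tumor after injecting 100,000 counts.
value = percent_id_per_gram(1500.0, 100000.0, 0.25)
print(f"{value:.1f} %ID/g")
```

For these illustrative inputs the tumor holds 1.5% of the injected dose in 0.25 g of tissue, which gives 6.0 %ID/g.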

Experimental Protocols

Protocol 1: AI-Assisted Analysis of Nanoparticle Distribution in Ex Vivo Tissue Sections

Objective: To quantify nanoparticle localization and cluster morphology in frozen tumor sections using fluorescence microscopy and AI-based image segmentation.

Materials: See "Research Reagent Solutions" table.

Procedure:

- Tissue Processing: At 24 h post-injection, euthanize the animal, perfuse with PBS, and harvest tumors. Snap-freeze in OCT. Cryosection at 10 µm thickness.

- Immunofluorescence Staining: Fix sections in 4% PFA (10 min), permeabilize (0.1% Triton X-100, 5 min), block (5% BSA, 1h). Incubate with primary antibodies (e.g., CD31 for endothelium) overnight at 4°C. Wash and incubate with fluorescent secondary antibodies and DAPI (1 µg/mL) for 1h at RT. Mount.

- Image Acquisition: Acquire high-resolution tiled z-stack images using a confocal or epifluorescence microscope with a 20x or higher objective. Ensure consistent exposure across samples.

- AI Model Training/Application:

  - Ground Truth Creation: Manually annotate 50-100 representative images, labeling pixels as "nanoparticle," "background," or "vasculature."

  - Model Training: Train a U-Net architecture using the annotated dataset. Use data augmentation (rotation, flipping). Split data 70/15/15 for training/validation/test.

  - Inference & Analysis: Apply the trained model to new images. Use post-processing scripts to calculate: (a) Nanoparticle area fraction, (b) Number of discrete clusters, (c) Mean cluster size, (d) Minimum distance of each particle to nearest CD31+ vessel.
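The post-processing metrics (a) to (d) in the final step can be sketched as follows. This is a minimal pure-Python illustration, not the protocol's actual script: it assumes the segmentation output is a 2D label mask with a hypothetical encoding (0 = background, 1 = nanoparticle, 2 = vasculature), uses 4-connectivity to define clusters, and measures distance per cluster rather than per individual particle. In practice, libraries such as scikit-image offer faster connected-component labeling.

```python
from collections import deque
from math import hypot

BACKGROUND, NANOPARTICLE, VESSEL = 0, 1, 2  # hypothetical label encoding


def find_clusters(mask):
    """Group nanoparticle pixels into 4-connected clusters (breadth-first search)."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    clusters = []
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] != NANOPARTICLE or seen[r][c]:
                continue
            queue, pixels = deque([(r, c)]), []
            seen[r][c] = True
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx] and mask[ny][nx] == NANOPARTICLE):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            clusters.append(pixels)
    return clusters


def summarize(mask, pixel_size_um=1.0):
    """Compute area fraction, cluster count, mean cluster size, vessel distances."""
    rows, cols = len(mask), len(mask[0])
    clusters = find_clusters(mask)
    vessels = [(r, c) for r in range(rows) for c in range(cols) if mask[r][c] == VESSEL]
    np_pixels = sum(len(p) for p in clusters)
    distances = []
    if vessels:
        for pixels in clusters:
            # Brute-force nearest vessel pixel; adequate for small tiles only.
            d = min(hypot(y - vy, x - vx) for y, x in pixels for vy, vx in vessels)
            distances.append(d * pixel_size_um)
    return {
        "area_fraction": np_pixels / (rows * cols),
        "n_clusters": len(clusters),
        "mean_cluster_size_px": np_pixels / len(clusters) if clusters else 0.0,
        "min_vessel_distance_um": distances,
    }


# Hypothetical 3x4 mask: a vessel on the left edge and two nanoparticle clusters.
mask = [
    [2, 0, 1, 1],
    [2, 0, 0, 0],
    [0, 0, 0, 1],
]
stats = summarize(mask)
print(stats)
```

The brute-force distance search scales poorly with image size; a distance transform of the vessel mask is the usual choice for full tiled sections.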

Protocol 2: Longitudinal In Vivo Distribution Kinetics via AI-Powered Image Analysis

Objective: To model the time-dependent biodistribution of nanoparticles using longitudinal in vivo optical imaging.

Procedure:

- Imaging: Inject tumor-bearing mice with fluorescently labeled nanoparticles. Acquire whole-body fluorescence images (e.g., IVIS Spectrum) at predetermined time points (e.g., 1, 4, 8, 24, 48h) under isoflurane anesthesia. Maintain identical imaging parameters (exposure, f-stop).

- Image Pre-processing: Use software to define consistent regions of interest (ROIs) for tumor, liver, spleen, and muscle. Extract total radiant efficiency values.

- Kinetic Modeling with AI: Input the time-series radiant efficiency data for each ROI into a Long Short-Term Memory (LSTM) network. Train the LSTM to predict the full kinetic curve from early time points or to classify formulations based on their temporal distribution profile (e.g., rapid vs. sustained tumor uptake).

- Validation: Compare AI-predicted terminal time point values with experimentally measured ex vivo organ digestion data.
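The data handling around the LSTM step can be sketched without a deep learning framework. The example below is a minimal illustration, assuming one radiant efficiency value per ROI per time point: it scales a curve to its peak, splits it into early inputs and late targets for sequence prediction, and computes the percent error used when comparing a predicted terminal value with an ex vivo measurement. All function names and numbers are hypothetical, and the LSTM itself is deliberately omitted.

```python
def normalize_to_peak(series):
    """Scale a time series so its maximum equals 1.0."""
    peak = max(series)
    return [v / peak for v in series] if peak > 0 else list(series)


def make_early_late_pair(series, n_early):
    """Split a curve into model inputs (early points) and targets (later points)."""
    if not 0 < n_early < len(series):
        raise ValueError("n_early must be between 1 and len(series) - 1")
    return series[:n_early], series[n_early:]


def time_to_peak(times_h, series):
    """Return the time point at which the signal is highest."""
    return times_h[series.index(max(series))]


def percent_error(predicted, measured):
    """Absolute percent error of a prediction against a measured reference."""
    if measured == 0:
        raise ValueError("measured value must be non-zero")
    return 100.0 * abs(predicted - measured) / abs(measured)


# Hypothetical tumor ROI signal at the protocol's example time points.
times_h = [1, 4, 8, 24, 48]
tumor = [2.0, 5.0, 8.0, 10.0, 6.0]

scaled = normalize_to_peak(tumor)
early, late = make_early_late_pair(scaled, n_early=3)
print("inputs:", early, "targets:", late)
print("time to peak (h):", time_to_peak(times_h, tumor))
print("terminal error (%):", percent_error(predicted=5.5, measured=5.0))
```

A simple rule such as time to peak could also serve as a starting label for the "rapid vs. sustained" classification task, although the cutoff would need to be defined per study.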

Visualizations

Diagram: AI-Driven Ex Vivo Biodistribution Analysis Workflow

Diagram: AI Models Decode NP Delivery Pathways

Research Reagent Solutions

Table 2: Essential Materials for AI-Enhanced Biodistribution Studies

| Item | Function/Description | Example Product/Catalog Number |

|---|---|---|

| Fluorescent Liposome (DiR-labeled) | Near-infrared liposome for deep-tissue in vivo and ex vivo imaging. Enables longitudinal tracking. | DiR Liposome, 100 nm, FormuMax (F60103) |

| PLGA-PEG-COOH Nanoparticles | Versatile polymeric nanoparticle core for conjugating targeting ligands (e.g., peptides, antibodies). | PLGA-PEG-COOH, 50:5k, Nanosoft (NS-PLGA-50) |

| Anti-Mouse CD31 Antibody | Labels vascular endothelium for spatial analysis of nanoparticle localization relative to tumor vasculature. | BioLegend, clone 390 (102414) |

| DAPI (4',6-diamidino-2-phenylindole) | Nuclear counterstain for cell localization and tissue morphology reference in segmentation. | Thermo Fisher Scientific (D1306) |

| Mounting Medium (Antifade) | Preserves fluorescence signal during microscopy; essential for quantitative image analysis. | Vector Laboratories, VECTASHIELD (H-1000) |

| IVIS SpectrumCT In Vivo Imaging System | For non-invasive, longitudinal 2D/3D quantification of fluorescent nanoparticle biodistribution. | PerkinElmer (CLS136345) |

| High-Speed Confocal Microscope | For high-resolution ex vivo tissue imaging. Essential for generating training data for AI models. | Nikon A1R HD or equivalent |

| Python with AI Libraries | Software environment for developing and running custom AI models (U-Net, ResNet, LSTM). | TensorFlow, PyTorch, scikit-image |

| Image Analysis Software | For manual annotation (ground truth creation) and basic pre-processing of image data. | Fiji/ImageJ, QuPath |

Navigating the Black Box: Overcoming Data and Algorithm Challenges in AI Quantification

A primary bottleneck in AI-based quantification of nanocarrier biodistribution is the scarcity of high-quality, labeled experimental data. Acquiring in vivo biodistribution data through quantitative imaging (e.g., PET, SPECT, fluorescence) or mass spectrometry is costly, time-intensive, and ethically constrained. This application note details two computational strategies for overcoming data scarcity, Synthetic Data Generation and Transfer Learning, which enable robust AI model development for predicting and analyzing nanocarrier fate in biological systems.

Synthetic Data Generation for Biodistribution Modeling

Synthetic data generation creates artificial datasets that mimic the statistical properties of real experimental data. In biodistribution research, this involves simulating the complex relationships between nanocarrier properties (size, charge, surface ligand) and their in vivo pharmacokinetic (PK) and biodistribution profiles.

Protocol: Physics-Informed Generative Adversarial Network (PI-GAN) for Synthetic Biodistribution Curves

Objective: To generate synthetic time-concentration curves for nanocarriers in target organs (e.g., tumor, liver, spleen) and blood.

Materials & Workflow:

- Seed Data Preparation: Compile a limited real dataset of time-concentration profiles from historical or pilot studies. Minimum: 50-100 profiles per organ of interest.

- Physics-Based Constraint Formulation: Incorporate ordinary differential equation (ODE) models of basic compartmental PK (e.g., two-compartment model) as soft constraints.

- PI-GAN Architecture Setup:

  - Generator: A neural network (e.g., U-Net) that takes random noise and nanocarrier property vectors (size, PEG density) as input and outputs a multi-organ time-concentration matrix.

  - Discriminator: A CNN-based classifier that evaluates whether an input matrix is from real data or generated.

  - Physics-Informed Loss Layer: Computes the residual of the ODEs using the generator's output, penalizing physically implausible curves.

- Training: Iteratively train the generator and discriminator while minimizing the combined adversarial loss and physics loss.

- Validation: Use statistical metrics (e.g., Fréchet Distance) and domain expert evaluation to ensure synthetic data distributions align with known biological principles (e.g., >70% hepatic accumulation for particles >100 nm).
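The physics-informed loss in the workflow above can be illustrated with a two-compartment model. The sketch below is a minimal pure-Python version under stated assumptions: concentrations in a central (blood) and a peripheral compartment follow dC1/dt = -(k10 + k12)·C1 + k21·C2 and dC2/dt = k12·C1 - k21·C2, the residual is evaluated with forward differences, and the rate constants are hypothetical. In a full PI-GAN this residual term would be weighted and added to the adversarial loss; the generator and discriminator are not shown.

```python
def simulate_two_compartment(c1_0, k10, k12, k21, dt, n_steps):
    """Forward-Euler integration of a two-compartment PK model."""
    c1, c2 = [c1_0], [0.0]
    for _ in range(n_steps):
        d1 = -(k10 + k12) * c1[-1] + k21 * c2[-1]
        d2 = k12 * c1[-1] - k21 * c2[-1]
        c1.append(c1[-1] + dt * d1)
        c2.append(c2[-1] + dt * d2)
    return c1, c2


def physics_loss(c1, c2, k10, k12, k21, dt):
    """Mean squared ODE residual of candidate curves (forward differences)."""
    n = len(c1) - 1
    total = 0.0
    for i in range(n):
        r1 = (c1[i + 1] - c1[i]) / dt - (-(k10 + k12) * c1[i] + k21 * c2[i])
        r2 = (c2[i + 1] - c2[i]) / dt - (k12 * c1[i] - k21 * c2[i])
        total += r1 * r1 + r2 * r2
    return total / (2 * n)


# Hypothetical rate constants (1/h) and time step (h).
K10, K12, K21, DT = 0.2, 0.1, 0.05, 0.1

# A curve that obeys the model yields a residual near zero.
good_c1, good_c2 = simulate_two_compartment(100.0, K10, K12, K21, DT, 50)
loss_plausible = physics_loss(good_c1, good_c2, K10, K12, K21, DT)

# A blood curve that rises with no further dosing violates the model.
bad_c1 = [100.0 + 10.0 * i for i in range(51)]
bad_c2 = [0.0] * 51
loss_implausible = physics_loss(bad_c1, bad_c2, K10, K12, K21, DT)

print(loss_plausible, loss_implausible)
```

Because the penalty is a soft constraint, its weight relative to the adversarial loss controls how strictly generated curves must follow compartmental kinetics.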

Diagram: PI-GAN Workflow for Synthetic Biodistribution Data

Key Quantitative Comparisons of Synthetic Data Generation Methods

Table 1: Comparison of Synthetic Data Generation Techniques for Biodistribution Research

| Method | Principle | Best For | Data Efficiency | Fidelity Metric (Typical Range) | Computational Cost |

|---|---|---|---|---|---|

| PI-GAN (Physics-Informed GAN) | Combines GANs with PK/PD ODE constraints | Generating plausible PK time-series data | High (can bootstrap from <100 samples) | Fréchet Distance: 15-25 | High |

| Gaussian Mixture Models (GMM) | Fits data to a mix of Gaussian distributions | Augmenting heterogeneous organ accumulation data | Medium (requires ~200 samples) | KL Divergence: 0.05-0.1 | Low |

| Diffusion Models | Iterative denoising process | High-resolution synthetic tissue imaging data | Low (requires large seed dataset) | SSIM: 0.85-0.95 | Very High |

| Rule-Based Simulation | Deterministic PK/PD modeling (e.g., PBPK) | Generating "what-if" scenario data | N/A (model-driven) | Mean Absolute Error: 10-20% | Medium |

Transfer Learning Strategies for Predictive Biodistribution Modeling

Transfer learning repurposes a model developed for a data-rich source task (e.g., general image classification) to a data-scarce target task (e.g., quantifying nanocarriers in histological slides).

Protocol: Two-Phase Transfer Learning for Histology Image Analysis

Objective: To fine-tune a pre-trained convolutional neural network (CNN) to segment and quantify nanocarrier clusters in liver histology slides stained with metallic probes.

Phase 1: Source Model Preparation

- Select Pre-trained Model: Choose a model trained on a large, general image dataset (e.g., ResNet50 or VGG16 on ImageNet).

- Remove Classifier Head: Discard the final fully connected classification layers.

- Add New Task-Specific Head: Append new layers tailored for pixel-wise segmentation (e.g., a U-Net style decoder with skip connections).

Phase 2: Targeted Fine-Tuning

- Freeze Early Layers: Keep the weights of the initial CNN layers (which detect generic features like edges) frozen.

- Gradual Unfreezing: Sequentially unfreeze and train deeper layers with a low learning rate (e.g., 1e-5).

- Train on Target Data: Use a small dataset of annotated histology slides (e.g., 30-50 images). Employ heavy data augmentation (rotation, flipping, color jitter).

- Validation: Use Dice Coefficient on a held-out validation set to monitor performance, targeting >0.75.
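Two pieces of Phase 2 lend themselves to a short framework-agnostic sketch: the order in which layer groups become trainable, and the Dice coefficient used for validation. The example below is illustrative only. The layer group names mirror common ResNet naming but are assumptions, as are the number of permanently frozen groups and the epochs per stage; in a real framework the schedule would drive which parameters receive gradient updates.

```python
def unfreezing_schedule(encoder_groups, n_always_frozen, epochs_per_stage):
    """Return a list of (epoch, trainable_groups).

    encoder_groups is ordered from shallowest to deepest. The first
    n_always_frozen groups never train; the new head trains from epoch 0,
    and the remaining groups are unfrozen one per stage, deepest first.
    """
    unfreezable = encoder_groups[n_always_frozen:]
    schedule = []
    for stage in range(len(unfreezable) + 1):
        trainable = ["head"] + unfreezable[len(unfreezable) - stage:]
        for e in range(epochs_per_stage):
            schedule.append((stage * epochs_per_stage + e, trainable))
    return schedule


def dice_coefficient(pred, target):
    """Dice = 2|A ∩ B| / (|A| + |B|) for flat binary masks (lists of 0/1)."""
    intersection = sum(1 for p, t in zip(pred, target) if p == 1 and t == 1)
    denom = sum(pred) + sum(target)
    return 2.0 * intersection / denom if denom else 1.0


# Hypothetical encoder layout and schedule.
groups = ["conv1", "layer1", "layer2", "layer3", "layer4"]
schedule = unfreezing_schedule(groups, n_always_frozen=2, epochs_per_stage=1)
for epoch, trainable in schedule:
    print(epoch, trainable)

# Hypothetical flattened prediction and ground-truth masks.
pred = [1, 1, 0, 0, 1]
target = [1, 0, 0, 1, 1]
dice = dice_coefficient(pred, target)
print(f"Dice = {dice:.3f}")
```

With this toy mask pair the Dice score is about 0.667, which would fall short of the >0.75 target in the validation step.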

Diagram: Transfer Learning Workflow for Histology Analysis

Key Quantitative Impact of Transfer Learning

Table 2: Performance Gains from Transfer Learning in Biodistribution Tasks

| Target Task | Base Model | Source Task | Target Data Size | Performance (Without TL) | Performance (With TL) | Relative Improvement |

|---|---|---|---|---|---|---|

| Liver SINAP Quantification | ResNet34 | ImageNet Classification | 45 images | mIoU: 0.52 | mIoU: 0.81 | +55.8% |

| Tumor Accumulation Prediction | DenseNet121 | Cancer Genome Atlas | 120 profiles | R²: 0.41 | R²: 0.73 | +78.0% |

| Renal Clearance Classification | MobileNetV2 | General Object Detection | 80 samples | F1-Score: 0.66 | F1-Score: 0.88 | +33.3% |
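The "Relative Improvement" column is the gain divided by the baseline score. The short check below recomputes it from the "Without TL" and "With TL" values in Table 2; the function name is hypothetical.

```python
def relative_improvement(without_tl, with_tl):
    """Percent improvement relative to the baseline (no transfer learning)."""
    return 100.0 * (with_tl - without_tl) / without_tl


# (task, score without TL, score with TL) taken from Table 2.
rows = [
    ("Liver SINAP Quantification", 0.52, 0.81),
    ("Tumor Accumulation Prediction", 0.41, 0.73),
    ("Renal Clearance Classification", 0.66, 0.88),
]

improvements = [round(relative_improvement(base, tl), 1) for _, base, tl in rows]
for (task, _, _), gain in zip(rows, improvements):
    print(f"{task}: +{gain}%")
```

The recomputed values (+55.8%, +78.0%, +33.3%) agree with the table. Note that the three rows use different metrics (mIoU, R², F1), so the percentages are not directly comparable across tasks.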